Mark S. Seidenberg

Learning to Read through Machine Teaching

Jul 02, 2020

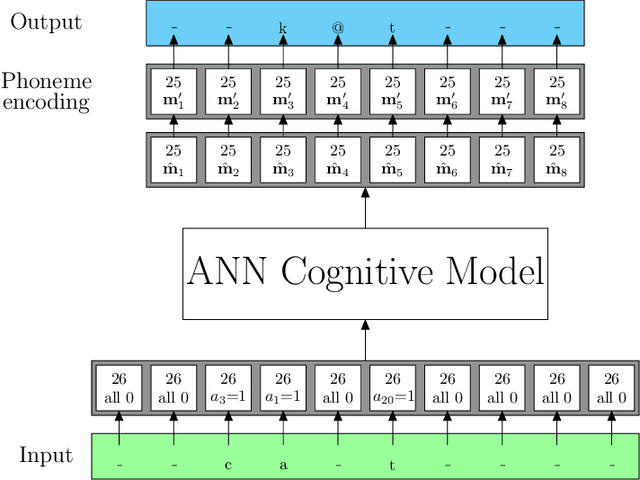

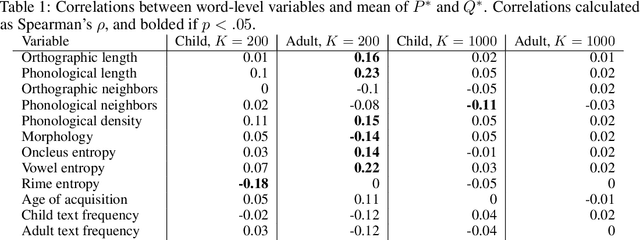

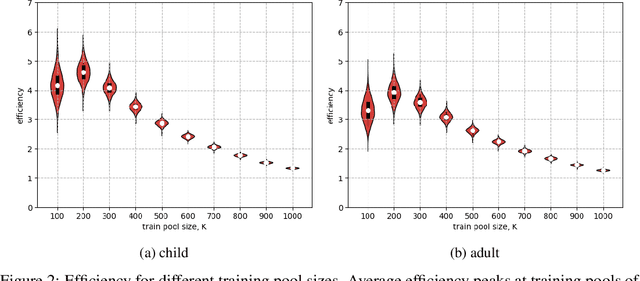

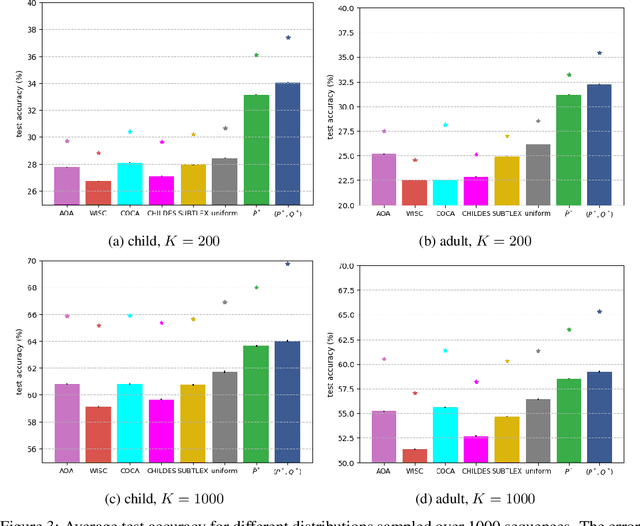

Abstract: Learning to read words aloud is a major step towards becoming a reader. Many children struggle with the task because of the inconsistencies of English spelling-sound correspondences. Curricula vary enormously in how these patterns are taught. Children are nonetheless expected to master the system in limited time (by grade 4). We used a cognitively interesting neural network architecture to examine whether the sequence of learning trials could be structured to facilitate learning. This is a hard combinatorial optimization problem even for a modest number of learning trials (e.g., 10K). We show how this sequence optimization problem can be posed as optimizing over a time-varying distribution, i.e., defining probability distributions over words at different steps in training. We then use stochastic gradient descent to find an optimal time-varying distribution and a corresponding optimal training sequence. We observed significant improvement in generalization accuracy compared to baseline conditions (random sequences; sequences biased by word frequency). These findings suggest an approach to improving learning outcomes in domains where performance depends on the ability to generalize beyond limited training experience.
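To make the idea of a time-varying distribution concrete, here is a minimal sketch, not the authors' implementation. It assumes the distribution is parameterized by one row of logits per training step, with a softmax turning each row into probabilities over words. The toy vocabulary, the number of steps, and the names `softmax` and `sample_sequence` are all hypothetical. The sketch covers only the representation and the sampling of a training sequence. Optimizing the logits with stochastic gradient descent, as the abstract describes, is not shown.

```python
import numpy as np

def softmax(logits):
    # Row-wise softmax, shifted by the row maximum for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def sample_sequence(logits, words, rng):
    # Draw one word per training step from that step's distribution.
    probs = softmax(logits)
    return [words[rng.choice(len(words), p=p)] for p in probs]

# Hypothetical toy vocabulary and schedule length (assumptions, not from the paper).
words = ["cat", "hat", "have", "gave"]
num_steps = 6

rng = np.random.default_rng(0)

# All-zero logits give a uniform distribution at every step (a random-sequence baseline).
logits = np.zeros((num_steps, len(words)))

# Hypothetical hand-set bias: favor "cat" and "hat" during the first three steps.
# In the approach described above, these values would instead be learned.
logits[:3, :2] += 2.0

probs = softmax(logits)            # shape: (num_steps, len(words))
sequence = sample_sequence(logits, words, rng)
```

Each row of `probs` is a distribution over words for one training step, so a training sequence is obtained by sampling one word per row. Replacing the hand-set bias with learned logits is where the optimization would enter.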
