Mario Montagud

Experimental Assessment of Neural 3D Reconstruction for Small UAV-based Applications

Jun 24, 2025

Abstract:The increasing miniaturization of Unmanned Aerial Vehicles (UAVs) has expanded their deployment potential to indoor and hard-to-reach areas. However, this trend introduces distinct challenges, particularly in terms of flight dynamics and power consumption, which limit the UAVs' autonomy and mission capabilities. This paper presents a novel approach to overcoming these limitations by integrating Neural 3D Reconstruction (N3DR) with small UAV systems for fine-grained 3-Dimensional (3D) digital reconstruction of small static objects. Specifically, we design, implement, and evaluate an N3DR-based pipeline that leverages advanced models, i.e., Instant-ngp, Nerfacto, and Splatfacto, to improve the quality of 3D reconstructions using images of the object captured by a fleet of small UAVs. We assess the performance of the considered models using various imagery and pointcloud metrics, comparing them against the baseline Structure from Motion (SfM) algorithm. The experimental results demonstrate that the N3DR-enhanced pipeline significantly improves reconstruction quality, making it feasible for small UAVs to support high-precision 3D mapping and anomaly detection in constrained environments. In more general terms, our results highlight the potential of N3DR in advancing the capabilities of miniaturized UAV systems.

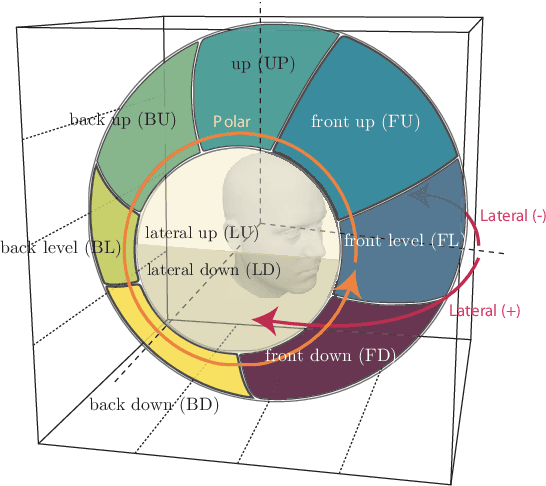

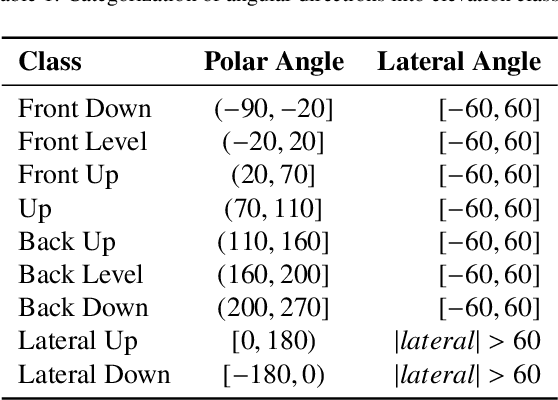

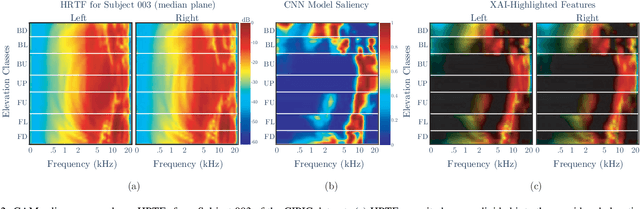

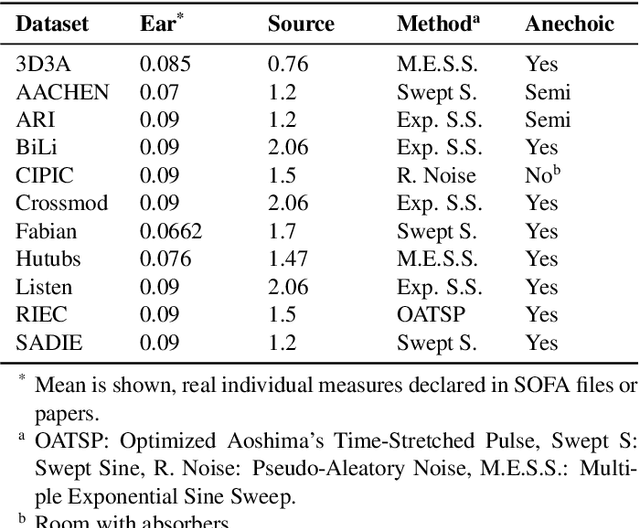

A Data-Driven Exploration of Elevation Cues in HRTFs: An Explainable AI Perspective Across Multiple Datasets

Mar 14, 2025

Abstract:Precise elevation perception in binaural audio remains a challenge, despite extensive research on head-related transfer functions (HRTFs) and spectral cues. While prior studies have advanced our understanding of sound localization cues, the interplay between spectral features and elevation perception is still not fully understood. This paper presents a comprehensive analysis of over 600 subjects from 11 diverse public HRTF datasets, employing a convolutional neural network (CNN) model combined with explainable artificial intelligence (XAI) techniques to investigate elevation cues. In addition to testing various HRTF pre-processing methods, we focus on both within-dataset and inter-dataset generalization and explainability, assessing the model's robustness across different HRTF variations stemming from subjects and measurement setups. By leveraging class activation mapping (CAM) saliency maps, we identify key frequency bands that may contribute to elevation perception, providing deeper insights into the spectral features that drive elevation-specific classification. This study offers new perspectives on HRTF modeling and elevation perception by analyzing diverse datasets and pre-processing techniques, expanding our understanding of these cues across a wide range of conditions.
