Marco Schorlemmer

Can Interpretability Layouts Influence Human Perception of Offensive Sentences?

Mar 01, 2024

Abstract: This paper conducts a user study to assess whether three machine learning (ML) interpretability layouts can influence participants' views when evaluating sentences containing hate speech, focusing on the "Misogyny" and "Racism" classes. Given the existence of divergent conclusions in the literature, we provide empirical evidence on using ML interpretability in online communities through statistical and qualitative analyses of questionnaire responses. The Generalized Additive Model estimates participants' ratings, incorporating within-subject and between-subject designs. While our statistical analysis indicates that none of the interpretability layouts significantly influences participants' views, our qualitative analysis demonstrates the advantages of ML interpretability: 1) triggering participants to provide corrective feedback in case of discrepancies between their views and the model, and 2) providing insights to evaluate a model's behavior beyond traditional performance metrics.

uHelp: intelligent volunteer search for mutual help communities

Jan 26, 2023

Abstract: When people need help with their day-to-day activities, they turn to family, friends or neighbours. But despite an increasingly networked world, technology falls short in finding suitable volunteers. In this paper, we propose uHelp, a platform for building a community of helpful people and helping community members find appropriate help within their social network. Lately, applications that focus on finding volunteers have started to appear, such as Helpin or Facebook's Community Help. However, what distinguishes uHelp from existing applications is its trust-based intelligent search for volunteers. Although trust is crucial to these innovative social applications, none of them has yet achieved a trust-building solution comparable to uHelp's. uHelp's intelligent search for volunteers is based on a number of AI technologies: (1) a novel trust-based flooding algorithm that navigates one's social network looking for appropriate trustworthy volunteers; (2) a novel trust model that maintains the trustworthiness of peers by learning from their similar past experiences; and (3) a semantic similarity model that assesses the similarity of experiences. This article presents the uHelp application, describes the underlying AI technologies that allow uHelp to find trustworthy volunteers efficiently, and illustrates the implementation details. uHelp's initial prototype has been tested with a community of single parents in Barcelona, and the app is available online on both the Apple Store and Google Play.

Learning for Detecting Norm Violation in Online Communities

Apr 30, 2021

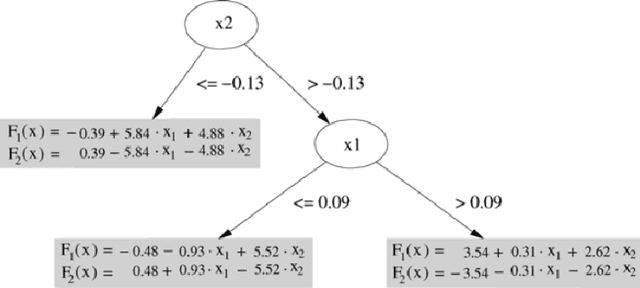

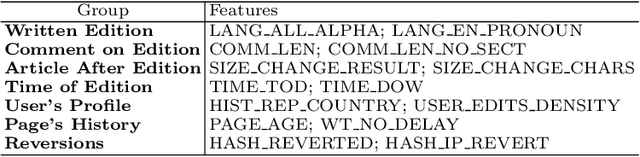

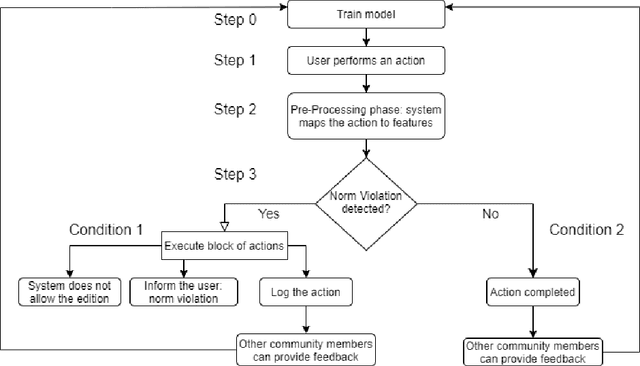

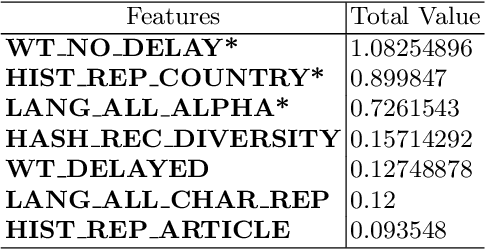

Abstract: In this paper, we focus on normative systems for online communities. The paper addresses the issue that arises when different community members interpret these norms in different ways, possibly leading to unexpected behavior in interactions, usually with norm violations that affect the individual and community experiences. To address this issue, we propose a framework capable of detecting norm violations and providing the violator with information about the features of their action that make the action violate a norm. We build our framework using Machine Learning, with Logistic Model Trees as the classification algorithm. Since norm violations can be highly contextual, we train our model using data from the Wikipedia online community, namely data on Wikipedia edits. Our work is then evaluated with the Wikipedia use case, where we focus on the norm that prohibits vandalism in Wikipedia edits.
