Mano Vikash Janardhanan

Greenback Bears and Fiscal Hawks: Finance is a Jungle and Text Embeddings Must Adapt

Nov 11, 2024

Abstract: Financial documents are filled with specialized terminology, arcane jargon, and curious acronyms that pose challenges for general-purpose text embeddings. Yet, few text embeddings specialized for finance have been reported in the literature, perhaps in part due to a lack of public datasets and benchmarks. We present BAM embeddings, a set of text embeddings finetuned on a carefully constructed dataset of 14.3M query-passage pairs. Demonstrating the benefits of domain-specific training, BAM embeddings achieve Recall@1 of 62.8% on a held-out test set, vs. only 39.2% for the best general-purpose text embedding from OpenAI. Further, BAM embeddings increase question answering accuracy by 8% on FinanceBench and show increased sensitivity to the finance-specific elements that are found in detailed, forward-looking, and company- and date-specific queries. To support further research, we describe our approach in detail and quantify the importance of hard negative mining and dataset scale.
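For readers unfamiliar with the Recall@1 metric quoted above, the following is a minimal sketch of how it is commonly computed for query-passage retrieval. It is not the authors' evaluation code. The use of cosine similarity, the function names `cosine` and `recall_at_1`, and the toy two-dimensional vectors are all assumptions made for illustration.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def recall_at_1(query_vecs, passage_vecs, gold):
    """Fraction of queries whose top-ranked passage is the gold passage.

    gold[i] is the index (into passage_vecs) of the correct passage
    for query i. Ranking by cosine similarity is an assumption here.
    """
    hits = 0
    for q, g in zip(query_vecs, gold):
        best = max(range(len(passage_vecs)),
                   key=lambda i: cosine(q, passage_vecs[i]))
        hits += (best == g)
    return hits / len(query_vecs)

# Toy data (hypothetical): three passages, three queries.
passages = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
queries = [[0.9, 0.1], [0.1, 0.9], [1.0, 0.2]]
gold = [0, 1, 2]  # the third query is retrieved incorrectly

score = recall_at_1(queries, passages, gold)
print(f"Recall@1 = {score:.3f}")
```

In this toy setup two of the three queries retrieve their gold passage first, so the score is 2/3. A real evaluation would use model-produced embeddings and a much larger passage pool.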

On Learning a Hidden Directed Graph with Path Queries

Feb 26, 2020

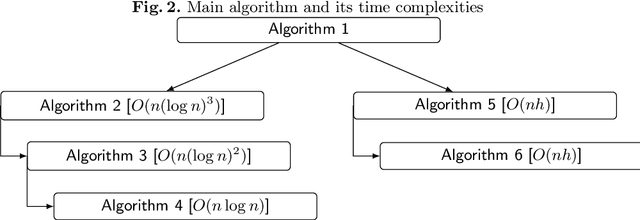

Abstract: In this paper, we consider the problem of reconstructing a directed graph using path queries. In this query model of learning, a graph is hidden from the learner, and the learner can access information about it with path queries. For a source and destination node, a path query returns whether there is a directed path from the source to the destination node in the hidden graph. In this paper we first give bounds for learning graphs on $n$ vertices and $k$ strongly connected components. We then study the case of bounded degree directed trees and give new algorithms for learning "almost-trees" -- directed trees to which extra edges have been added. We also give some lower bound constructions justifying our approach.
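The path-query model described above can be illustrated with a small sketch. This is not one of the paper's algorithms. It is a brute-force baseline that asks one query per ordered pair of distinct nodes and so recovers only the reachability relation of the hidden graph. The class `PathOracle`, the function `learn_reachability`, and the example edge list are hypothetical names and data chosen for illustration.

```python
from collections import deque

class PathOracle:
    """Hides a directed graph and answers path queries about it."""

    def __init__(self, edges):
        self._adj = {}
        for u, v in edges:
            self._adj.setdefault(u, []).append(v)
        self.num_queries = 0  # how many queries the learner has used

    def path_query(self, source, dest):
        """Return True if a directed path source -> dest exists."""
        self.num_queries += 1
        seen = {source}
        queue = deque([source])
        while queue:  # breadth-first search from source
            node = queue.popleft()
            for nxt in self._adj.get(node, []):
                if nxt == dest:
                    return True
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return False

def learn_reachability(nodes, oracle):
    """Naive learner: query every ordered pair of distinct nodes."""
    return {(s, t) for s in nodes for t in nodes
            if s != t and oracle.path_query(s, t)}

# Hypothetical hidden graph: a 3-cycle {0, 1, 2} with an edge into node 3.
oracle = PathOracle([(0, 1), (1, 2), (2, 0), (2, 3)])
reach = learn_reachability(range(4), oracle)
print(sorted(reach), oracle.num_queries)
```

The baseline uses $n(n-1)$ queries on $n$ nodes. Note that nodes 0, 1, and 2 lie in one strongly connected component, so path queries alone cannot distinguish which edges inside that component are present; this is one reason bounds in this model are naturally stated in terms of strongly connected components.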
