Mégane Millan

Explaining Regression Based Neural Network Model

Apr 15, 2020

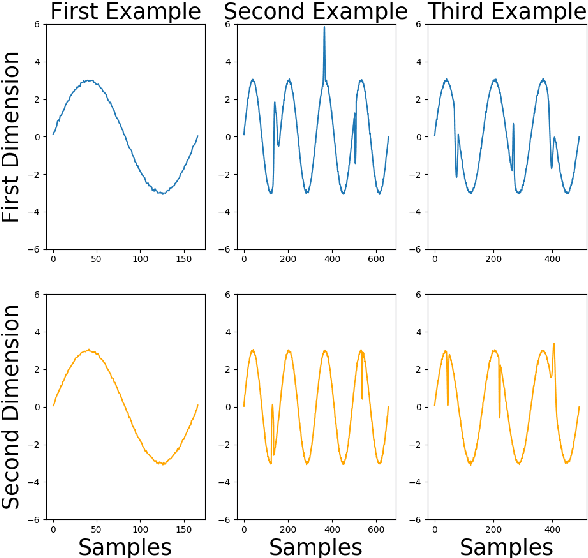

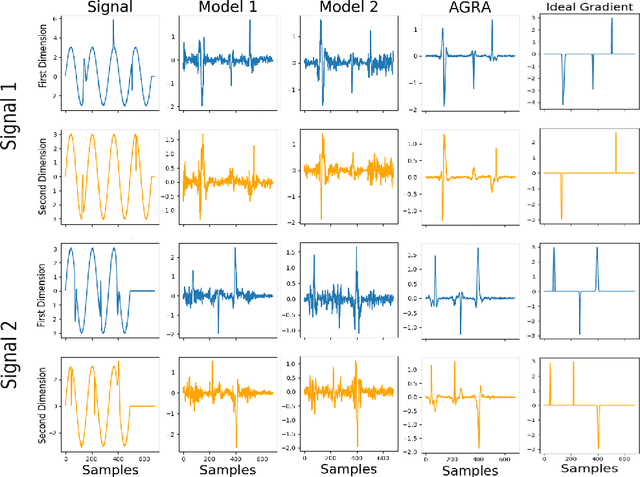

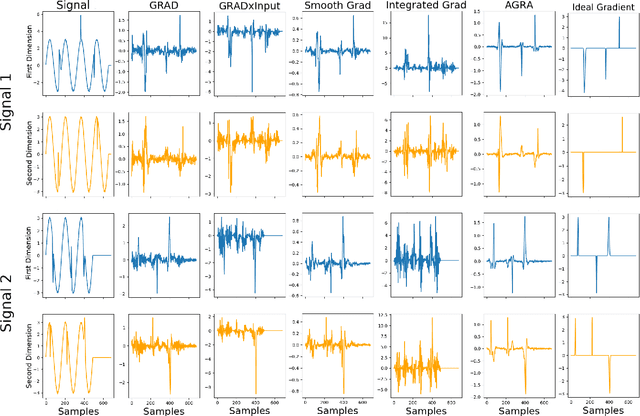

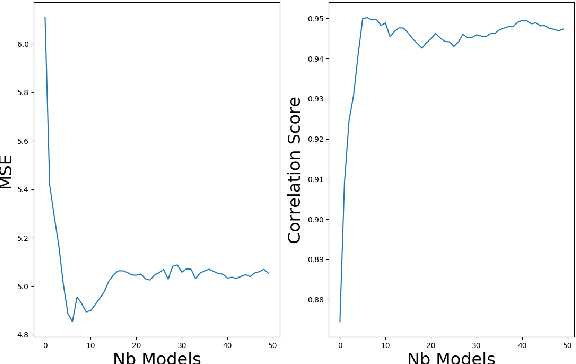

Abstract: Several methods have been proposed to explain Deep Neural Networks (DNNs). However, to our knowledge, only classification networks have been studied to determine which input dimensions motivated the decision. Furthermore, as there is no ground truth for this problem, results are only assessed qualitatively with regard to what would be meaningful for a human. In this work, we design an experimental setting where the ground truth can be established: we generate ideal signals and disrupted signals with errors, and train a neural network that determines the quality of the signals. This quality is simply a score based on the distance between each disrupted signal and the corresponding ideal signal. We then try to find out how the network estimated this score, and hope to find the time-steps and dimensions of the signal where errors are present. This experimental setting enables us to compare several methods for network explanation and to propose a new method, named AGRA for Accurate Gradient, based on several trainings that decrease the noise present in most state-of-the-art results. Comparative results show that the proposed method outperforms state-of-the-art methods for locating time-steps where errors occur in the signal.
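To make the experimental setting concrete, the following is a minimal sketch of how such data might be generated. It is not the authors' code: the sinusoidal ideal signal, the additive error of fixed magnitude, the `1 / (1 + distance)` score, and all function and parameter names are assumptions chosen for illustration. The abstract only states that the score is based on the distance between the disrupted and ideal signals.

```python
import numpy as np


def make_ideal_signal(n_steps=100, n_dims=3):
    """Hypothetical ideal signal: one sinusoid per dimension (an assumption)."""
    t = np.linspace(0.0, 2.0 * np.pi, n_steps)
    freqs = np.arange(1, n_dims + 1)
    return np.sin(np.outer(t, freqs))  # shape (n_steps, n_dims)


def disrupt(ideal, n_errors, rng, magnitude=0.5):
    """Add a fixed offset at randomly chosen (time-step, dimension) positions.

    Returns the disrupted signal and a boolean mask marking where the
    errors were injected. The mask plays the role of the ground truth.
    """
    flat_idx = rng.choice(ideal.size, size=n_errors, replace=False)
    rows, cols = np.unravel_index(flat_idx, ideal.shape)
    mask = np.zeros(ideal.shape, dtype=bool)
    mask[rows, cols] = True
    disrupted = ideal.copy()
    disrupted[mask] += magnitude
    return disrupted, mask


def quality_score(disrupted, ideal):
    """Distance-based score in (0, 1]; 1.0 means identical (assumed form)."""
    distance = np.linalg.norm(disrupted - ideal)
    return float(1.0 / (1.0 + distance))


rng = np.random.default_rng(0)
ideal = make_ideal_signal()
disrupted, error_mask = disrupt(ideal, n_errors=5, rng=rng)
score = quality_score(disrupted, ideal)
```

In a setup like this, a regression network would be trained to predict `score` from `disrupted` alone, and an explanation method could then be evaluated by comparing its attribution map against `error_mask`.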
