Lluís Arola-Fernández

Intuition emerges in Maximum Caliber models at criticality

Aug 08, 2025Abstract:Whether large predictive models merely parrot their training data or produce genuine insight lacks a physical explanation. This work reports a primitive form of intuition that emerges as a metastable phase of learning that critically balances next-token prediction against future path-entropy. The intuition mechanism is discovered via mind-tuning, the minimal principle that imposes Maximum Caliber in predictive models with a control temperature-like parameter $\lambda$. Training on random walks in deterministic mazes reveals a rich phase diagram: imitation (low $\lambda$), rule-breaking hallucination (high $\lambda$), and a fragile in-between window exhibiting strong protocol-dependence (hysteresis) and multistability, where models spontaneously discover novel goal-directed strategies. These results are captured by an effective low-dimensional theory and frame intuition as an emergent property at the critical balance between memorizing what is and wondering what could be.

An effective theory of collective deep learning

Oct 19, 2023

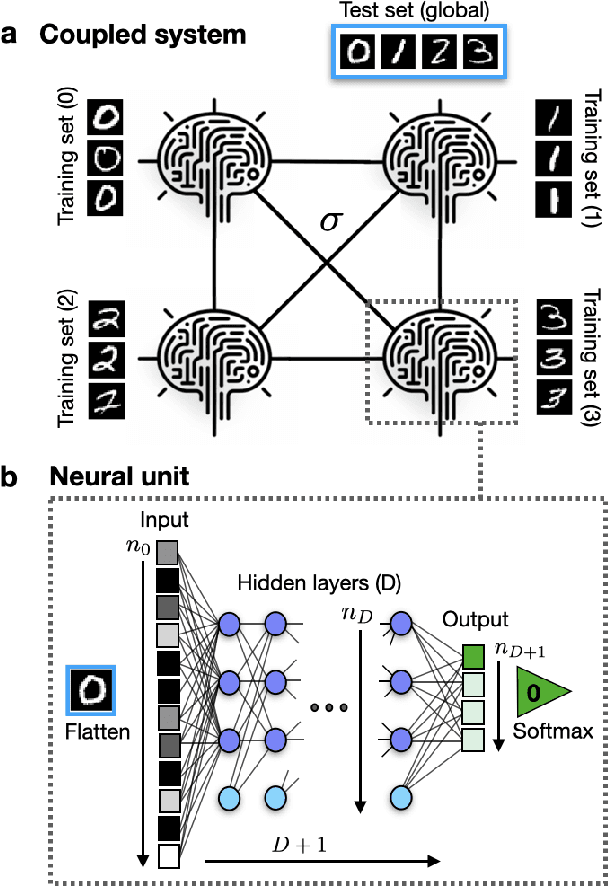

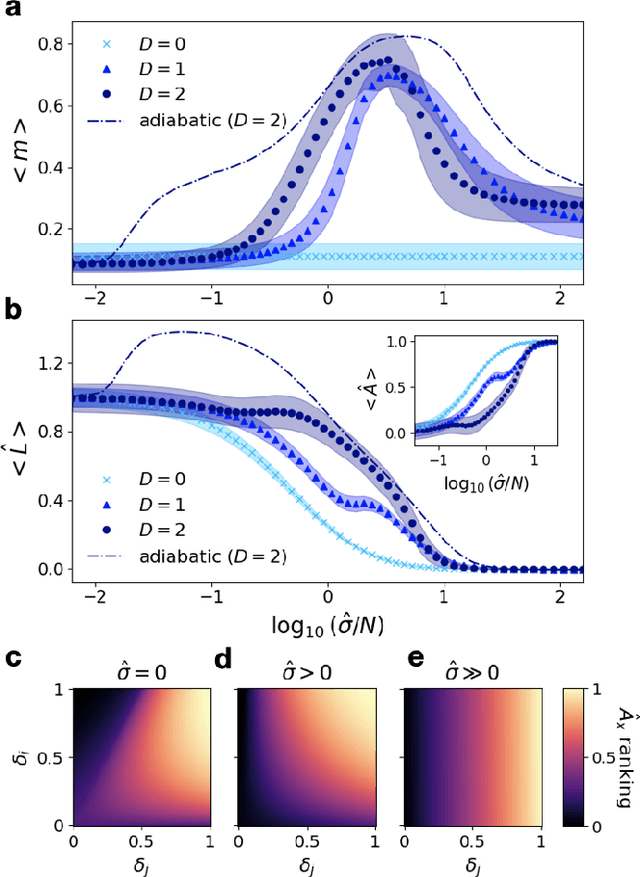

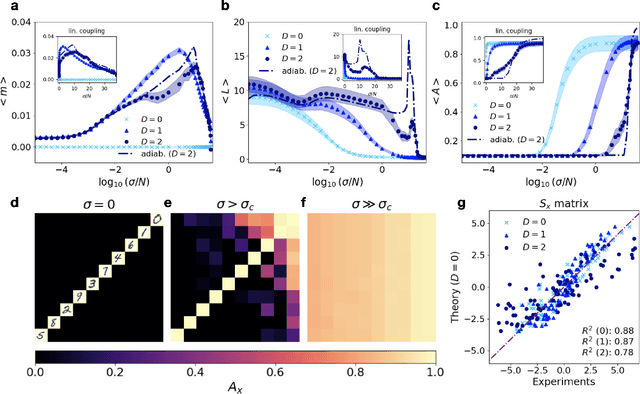

Abstract:Unraveling the emergence of collective learning in systems of coupled artificial neural networks is an endeavor with broader implications for physics, machine learning, neuroscience and society. Here we introduce a minimal model that condenses several recent decentralized algorithms by considering a competition between two terms: the local learning dynamics in the parameters of each neural network unit, and a diffusive coupling among units that tends to homogenize the parameters of the ensemble. We derive the coarse-grained behavior of our model via an effective theory for linear networks that we show is analogous to a deformed Ginzburg-Landau model with quenched disorder. This framework predicts (depth-dependent) disorder-order-disorder phase transitions in the parameters' solutions that reveal the onset of a collective learning phase, along with a depth-induced delay of the critical point and a robust shape of the microscopic learning path. We validate our theory in realistic ensembles of coupled nonlinear networks trained in the MNIST dataset under privacy constraints. Interestingly, experiments confirm that individual networks -- trained only with private data -- can fully generalize to unseen data classes when the collective learning phase emerges. Our work elucidates the physics of collective learning and contributes to the mechanistic interpretability of deep learning in decentralized settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge