Lingbo Huang

Spectral-Spatial Mamba for Hyperspectral Image Classification

Apr 29, 2024

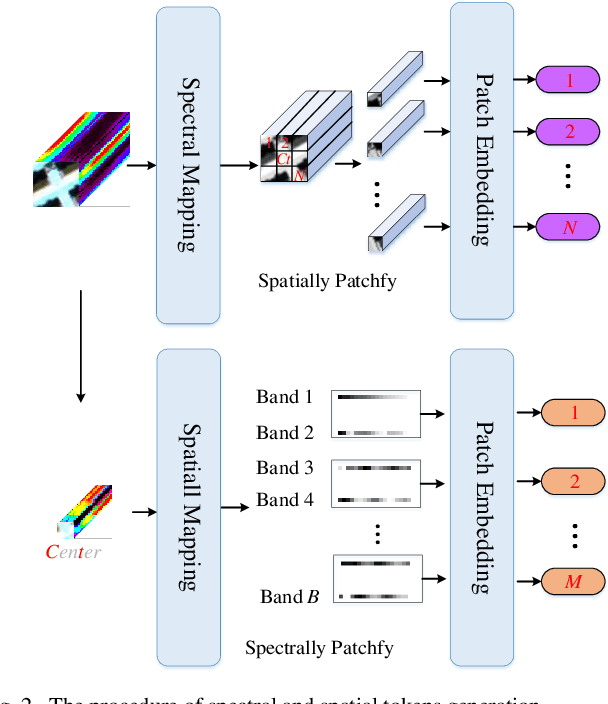

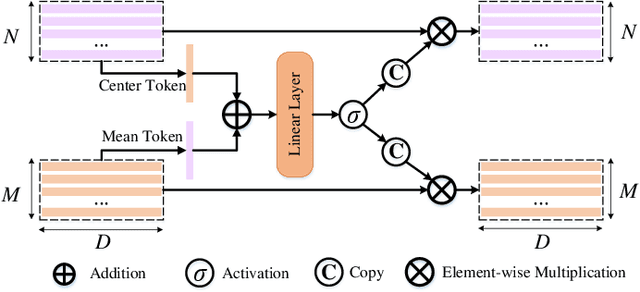

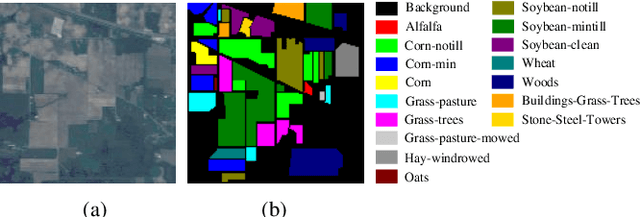

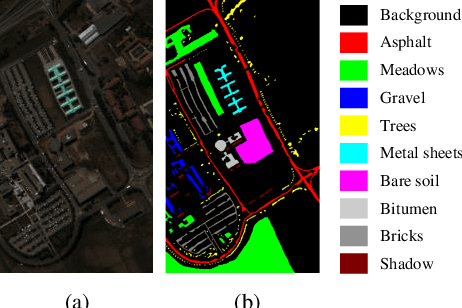

Abstract:Recently, deep learning models have achieved excellent performance in hyperspectral image (HSI) classification. Among the many deep models, Transformer has gradually attracted interest for its excellence in modeling the long-range dependencies of spatial-spectral features in HSI. However, Transformer has the problem of quadratic computational complexity due to the self-attention mechanism, which is heavier than other models and thus has limited adoption in HSI processing. Fortunately, the recently emerging state space model-based Mamba shows great computational efficiency while achieving the modeling power of Transformers. Therefore, in this paper, we make a preliminary attempt to apply the Mamba to HSI classification, leading to the proposed spectral-spatial Mamba (SS-Mamba). Specifically, the proposed SS-Mamba mainly consists of spectral-spatial token generation module and several stacked spectral-spatial Mamba blocks. Firstly, the token generation module converts any given HSI cube to spatial and spectral tokens as sequences. And then these tokens are sent to stacked spectral-spatial mamba blocks (SS-MB). Each SS-MB block consists of two basic mamba blocks and a spectral-spatial feature enhancement module. The spatial and spectral tokens are processed separately by the two basic mamba blocks, respectively. Besides, the feature enhancement module modulates spatial and spectral tokens using HSI sample's center region information. In this way, the spectral and spatial tokens cooperate with each other and achieve information fusion within each block. The experimental results conducted on widely used HSI datasets reveal that the proposed model achieves competitive results compared with the state-of-the-art methods. The Mamba-based method opens a new window for HSI classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge