Leila Khalili

BabyBear: Cheap inference triage for expensive language models

May 24, 2022

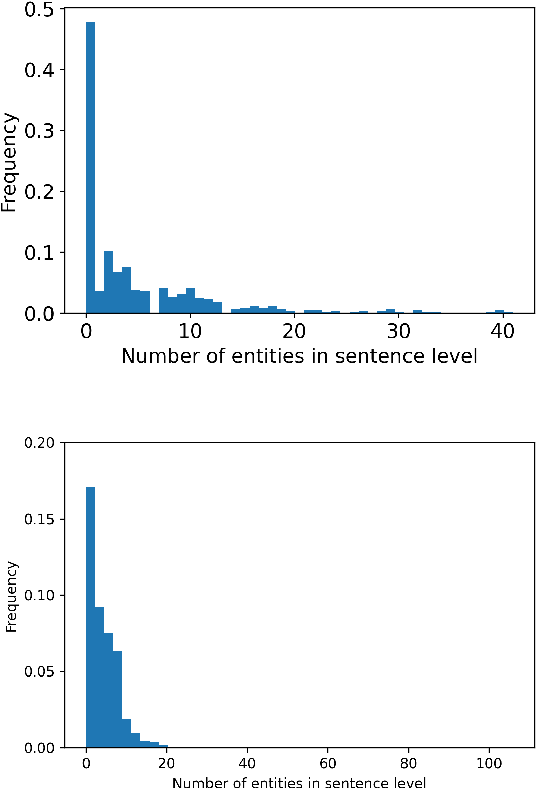

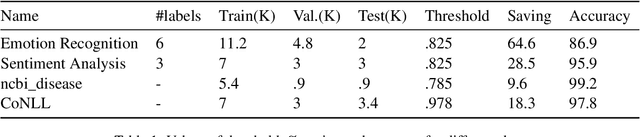

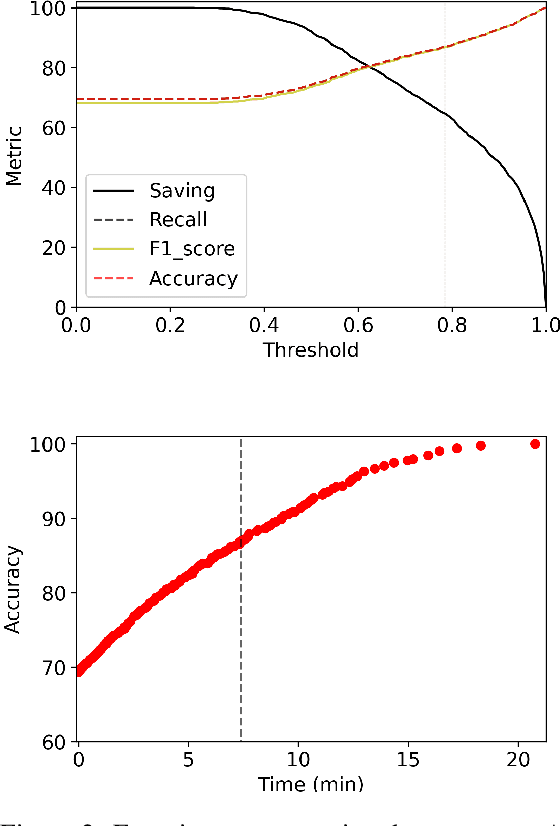

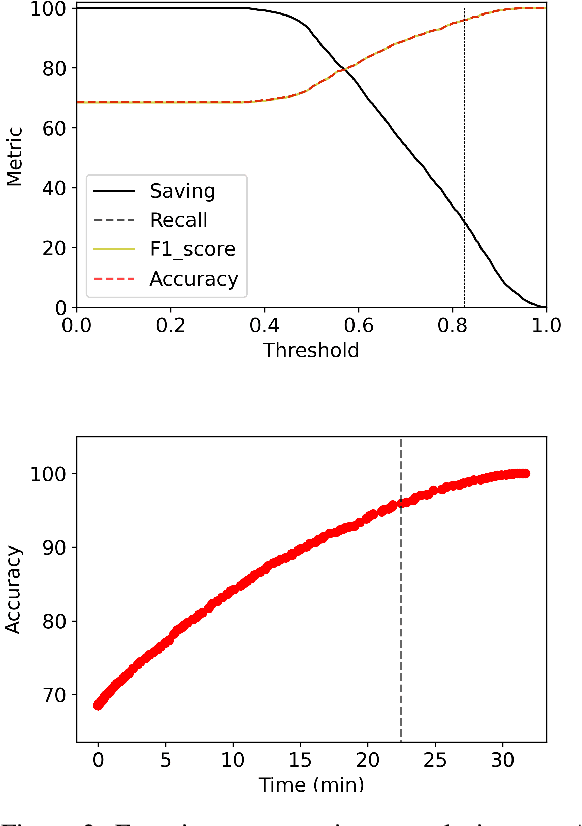

Abstract: Transformer language models provide superior accuracy over previous models, but they are computationally and environmentally expensive. Borrowing the concept of model cascading from computer vision, we introduce BabyBear, a framework for cascading models for natural language processing (NLP) tasks to minimize cost. The core strategy is inference triage: exiting early when the least expensive model in the cascade achieves a sufficiently high-confidence prediction. We test BabyBear on several open-source datasets related to document classification and entity recognition. We find that for common NLP tasks, a high proportion of the inference load can be handled by cheap, fast models that have learned by observing a deep learning model. This allows us to reduce the compute cost of large-scale classification jobs by more than 50% while retaining overall accuracy. For named entity recognition, we save 33% of the deep learning compute while maintaining an F1 score higher than 95% on the CoNLL benchmark.
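The triage idea described in the abstract can be illustrated with a minimal two-stage sketch. This is not the BabyBear implementation: the function name `triage`, the toy stand-in models, and the confidence threshold of 0.9 are all assumptions made for illustration. Each model is assumed to return a label together with a confidence in [0, 1].

```python
from typing import Callable, List, Sequence, Tuple

# Assumption: a model maps an input text to (label, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]


def triage(
    texts: Sequence[str],
    cheap: Model,
    expensive: Model,
    threshold: float = 0.9,  # hypothetical value, not taken from the paper
) -> Tuple[List[str], int]:
    """Return predicted labels and the number of expensive-model calls."""
    labels: List[str] = []
    expensive_calls = 0
    for text in texts:
        label, confidence = cheap(text)
        if confidence >= threshold:
            # Early exit: the cheap model is confident enough.
            labels.append(label)
        else:
            # Fall through to the expensive model for this input only.
            expensive_calls += 1
            labels.append(expensive(text)[0])
    return labels, expensive_calls


def toy_cheap_model(text: str) -> Tuple[str, float]:
    """Hypothetical keyword classifier standing in for a fast model."""
    if "great" in text:
        return "pos", 0.95
    if "awful" in text:
        return "neg", 0.95
    return "pos", 0.5  # unsure: low confidence triggers the fallback


def toy_expensive_model(text: str) -> Tuple[str, float]:
    """Hypothetical stand-in for a transformer classifier."""
    return ("neg", 0.99) if "not" in text else ("pos", 0.99)


if __name__ == "__main__":
    docs = ["great film", "awful plot", "not my favorite"]
    predictions, calls = triage(docs, toy_cheap_model, toy_expensive_model)
    print(predictions, f"expensive calls: {calls} of {len(docs)}")
```

In this sketch the threshold is the single knob trading compute for accuracy: raising it sends more inputs to the expensive model, and lowering it lets the cheap model answer more of them.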
