Krishan Rajaratnam

Noise Flooding for Detecting Audio Adversarial Examples Against Automatic Speech Recognition

Dec 25, 2018

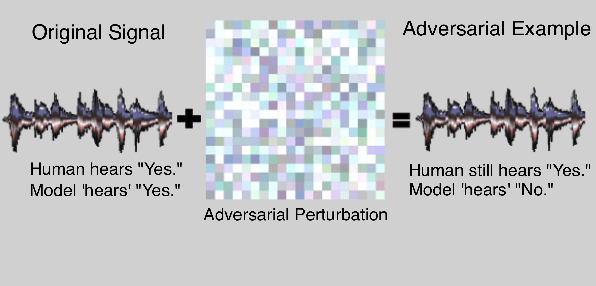

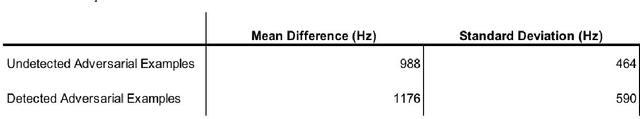

Abstract: Neural models enjoy widespread use across a variety of tasks and have become crucial components of many industrial systems. Despite their effectiveness and popularity, they have exploitable flaws. Adversarial example generation, first applied to computer vision systems, is a process in which seemingly imperceptible perturbations are made to an image in order to induce a deep learning based classifier to misclassify it. Due to recent trends in speech processing, this has become a noticeable issue in speech recognition models. In late 2017, an attack was shown to be quite effective against the Speech Commands classification model. Limited-vocabulary speech classifiers, such as the Speech Commands model, are used frequently in a variety of applications, particularly in managing automated attendants in telephony contexts. As such, adversarial examples produced by this attack could have real-world consequences. While previous work on defending against these adversarial examples has investigated using audio preprocessing to reduce or distort adversarial noise, this work explores the idea of flooding particular frequency bands of an audio signal with random noise in order to detect adversarial examples. This flooding technique, which does not require retraining or modifying the model, is inspired by work in computer vision and builds on the idea that speech classifiers are relatively robust to natural noise. A combined defense that floods the signal with noise in five different frequency bands outperformed other existing defenses in the audio space, detecting adversarial examples with 91.8% precision and 93.5% recall.
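The flooding idea described in the abstract can be illustrated with a minimal Python sketch. The abstract does not spell out the detection rule, so the sketch assumes one plausible reading: add band-limited random noise at increasing amplitudes and record the smallest amplitude that changes the classifier's prediction, on the assumption that adversarial inputs flip at lower amplitudes than benign ones. The names `band_limited_noise`, `flooding_score`, and `classify`, as well as the FFT-masking approach and the amplitude grid, are hypothetical and not taken from the paper.

```python
import numpy as np

def band_limited_noise(n_samples, sample_rate, low_hz, high_hz, rng):
    """Gaussian noise restricted to [low_hz, high_hz), peak-normalized to 1.

    Assumption: the band is carved out by zeroing FFT bins outside the range.
    """
    white = rng.standard_normal(n_samples)
    spectrum = np.fft.rfft(white)
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / sample_rate)
    spectrum[(freqs < low_hz) | (freqs >= high_hz)] = 0.0
    noise = np.fft.irfft(spectrum, n=n_samples)
    peak = np.max(np.abs(noise))
    return noise / peak if peak > 0 else noise

def flooding_score(audio, classify, sample_rate, band, amplitudes, seed=0):
    """Smallest tested amplitude at which flooding `band` changes the label.

    `classify` is a hypothetical callable mapping a waveform to a label.
    Returns float("inf") if no tested amplitude changes the prediction.
    """
    rng = np.random.default_rng(seed)
    original = classify(audio)
    noise = band_limited_noise(len(audio), sample_rate, band[0], band[1], rng)
    for amp in amplitudes:  # amplitudes are expected in increasing order
        if classify(audio + amp * noise) != original:
            return amp
    return float("inf")
```

Under these assumptions, a low score in one or more bands would mark an input as suspicious. Scores from several bands could then be combined, for example as features for a simple threshold or classifier, which is one way to read the "combined defense" mentioned above.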

Isolated and Ensemble Audio Preprocessing Methods for Detecting Adversarial Examples against Automatic Speech Recognition

Sep 11, 2018

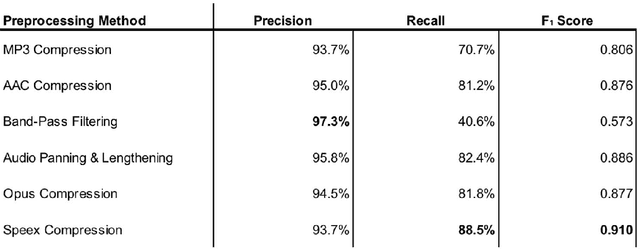

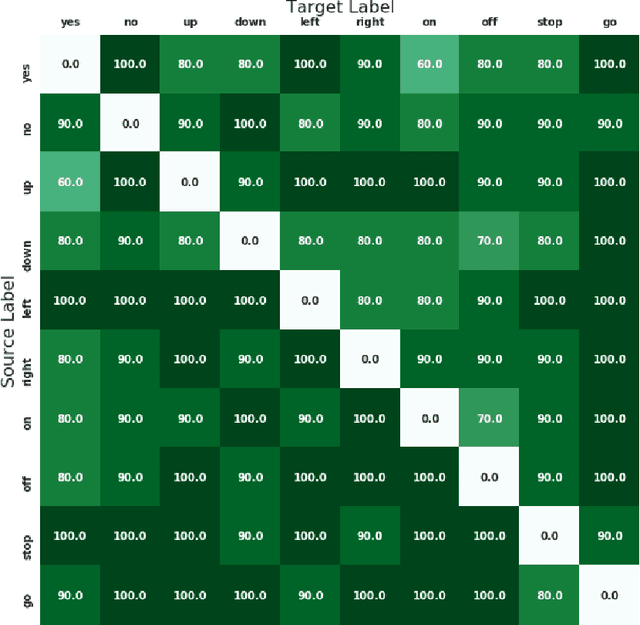

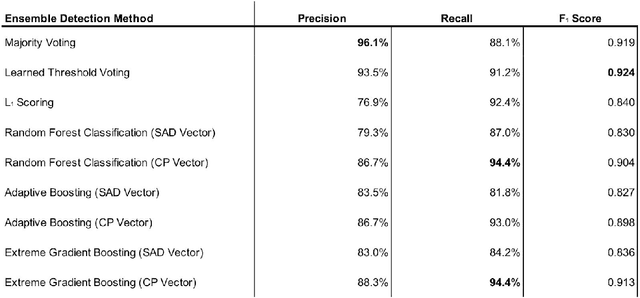

Abstract: An adversarial attack is an exploitative process in which minute alterations are made to natural inputs, causing the inputs to be misclassified by neural models. Although adversarial attacks were originally introduced in computer vision, they have since spread to speech recognition, where they have become an issue of increasing significance. In 2017, a genetic attack was shown to be quite potent against the Speech Commands model. Limited-vocabulary speech classifiers, such as the Speech Commands model, are used in a variety of applications, particularly in telephony; as such, adversarial examples produced by this attack pose a major security threat. This paper explores various methods of detecting these adversarial examples with combinations of audio preprocessing. One particular combined defense incorporating compression, speech coding, filtering, and audio panning was shown to be quite effective against the attack on the Speech Commands model, detecting audio adversarial examples with 93.5% precision and 91.2% recall.
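As a rough illustration of preprocessing-based detection, the Python sketch below flags an input when enough preprocessing transforms change the classifier's prediction. This decision rule is an assumption, since the abstract does not state how the preprocessed outputs are combined. The two transforms (`moving_average`, `quantize`) are simple stand-ins chosen for illustration, not the compression, speech coding, filtering, or panning steps used in the paper, and `classify` is a hypothetical callable.

```python
import numpy as np

def moving_average(audio, width=5):
    """Crude low-pass filter (illustrative stand-in for a filtering step)."""
    kernel = np.ones(width) / width
    return np.convolve(audio, kernel, mode="same")

def quantize(audio, levels=256):
    """Uniform quantization of samples in [-1, 1] (stand-in for lossy coding)."""
    step = 2.0 / (levels - 1)
    return np.round(np.clip(audio, -1.0, 1.0) / step) * step

def ensemble_flags_adversarial(audio, classify, transforms, min_votes=1):
    """Flag the input if at least `min_votes` transforms change the label.

    Assumption: disagreement between the prediction on the raw input and the
    prediction on a preprocessed copy is treated as evidence of an attack.
    """
    original = classify(audio)
    votes = sum(classify(t(audio)) != original for t in transforms)
    return votes >= min_votes
```

Raising `min_votes` trades recall for precision in this sketch: more transforms must disagree with the original prediction before an input is flagged.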
