Kostas Katsaros

Computing Within Limits: An Empirical Study of Energy Consumption in ML Training and Inference

Jun 20, 2024

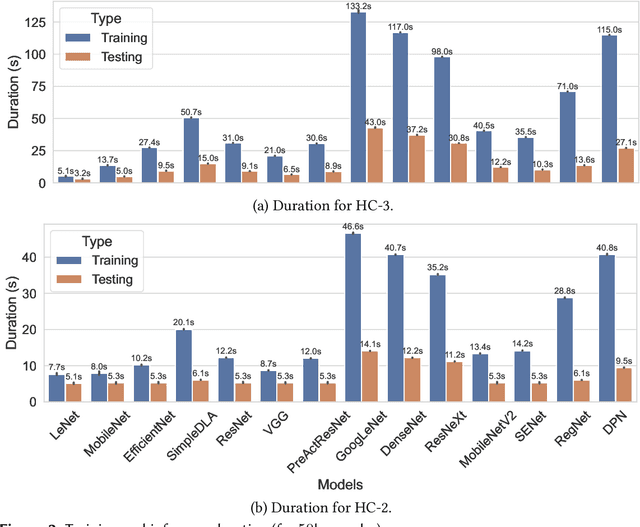

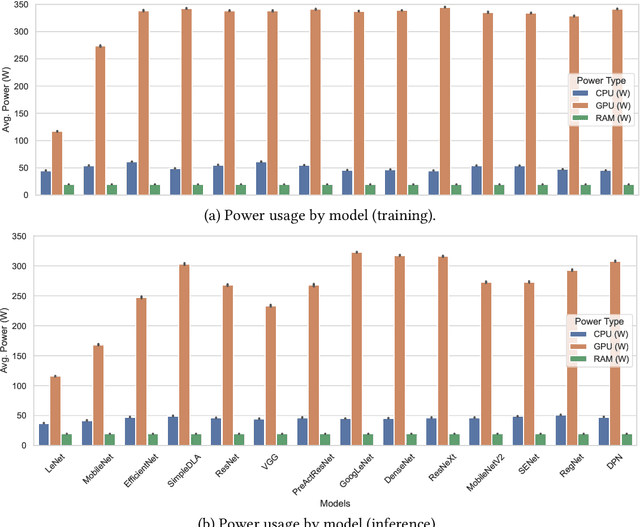

Abstract:Machine learning (ML) has seen tremendous advancements, but its environmental footprint remains a concern. Acknowledging the growing environmental impact of ML this paper investigates Green ML, examining various model architectures and hyperparameters in both training and inference phases to identify energy-efficient practices. Our study leverages software-based power measurements for ease of replication across diverse configurations, models and datasets. In this paper, we examine multiple models and hardware configurations to identify correlations across the various measurements and metrics and key contributors to energy reduction. Our analysis offers practical guidelines for constructing sustainable ML operations, emphasising energy consumption and carbon footprint reductions while maintaining performance. As identified, short-lived profiling can quantify the long-term expected energy consumption. Moreover, model parameters can also be used to accurately estimate the expected total energy without the need for extensive experimentation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge