Konrad Ahlin

Canonical mapping as a general-purpose object descriptor for robotic manipulation

Mar 02, 2023

Abstract: Perception is an essential part of robotic manipulation in a semi-structured environment. Traditional approaches produce a narrow task-specific prediction (e.g., an object's 6D pose) that cannot be adapted to other tasks and is ill-suited for deformable objects. In this paper, we propose using canonical mapping as a near-universal and flexible object descriptor. We demonstrate that common object representations can be derived from a single pre-trained canonical mapping model, which in turn can be generated with minimal manual effort using an automated data generation and training pipeline. We perform a multi-stage experiment using two robot arms that demonstrates the robustness of the perception approach and the ways it can inform the manipulation strategy, thus serving as a powerful foundation for general-purpose robotic manipulation.

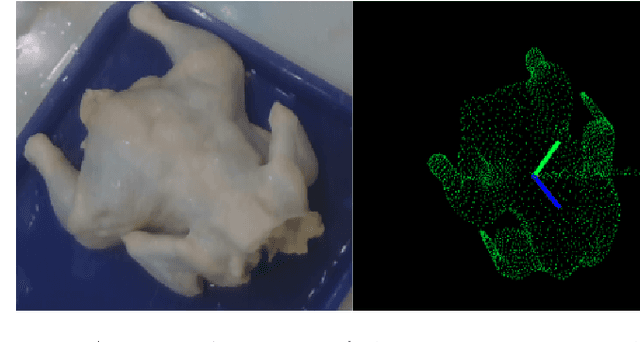

Pose estimation and bin picking for deformable products

Nov 12, 2019

Abstract: Robotic systems in manufacturing applications commonly assume known object geometry and appearance. This simplifies the task for the 3D perception algorithms and allows the manipulation to be more deterministic. However, those approaches are not easily transferable to the agricultural and food domains due to the variability and deformability of natural food. We demonstrate an approach applied to poultry products that allows picking up a whole chicken from an unordered bin using a suction cup gripper, estimating its pose using a deep learning approach, and placing it in a canonical orientation where it can be further processed. Our robotic system was evaluated experimentally; it generalizes to object variations and achieves high accuracy on bin picking and pose estimation tasks in a real-world environment.
