Kepa Bengoetxea

MultiAzterTest: a Multilingual Analyzer on Multiple Levels of Language for Readability Assessment

Sep 10, 2021

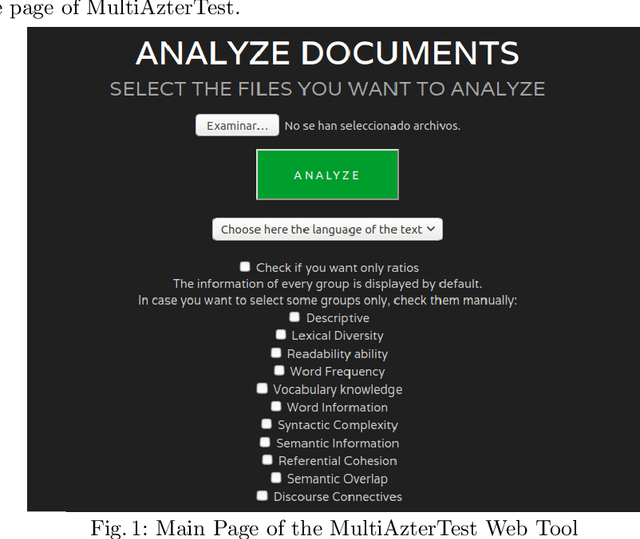

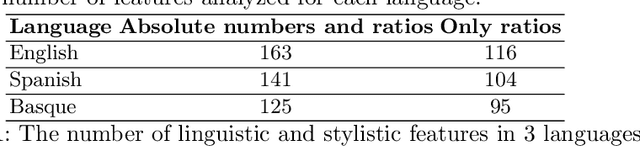

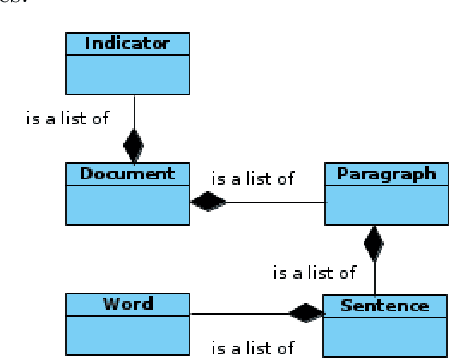

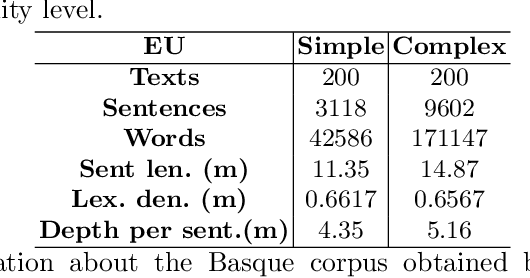

Abstract: Readability assessment is the task of determining how difficult or easy a text is, or which level or grade it corresponds to. Traditionally, language-dependent readability formulae have been used, but these formulae take few text characteristics into account. Natural Language Processing (NLP) tools that assess text complexity, however, can measure a much wider range of features and can be adapted to different languages. In this paper, we present MultiAzterTest: (i) an open-source NLP tool that analyzes texts on over 125 measures of cohesion, language, and readability for English, Spanish and Basque, and whose architecture is designed to be easily adapted to other languages; (ii) readability assessment classifiers that improve on the performance of Coh-Metrix in English, Coh-Metrix-Esp in Spanish and ErreXail in Basque; and (iii) a web tool. Using an SMO classifier, MultiAzterTest obtains 90.09% accuracy when classifying English texts into three reading levels (elementary, intermediate and advanced), and 95.50% in Basque and 90% in Spanish when classifying into two reading levels (simple and complex). Using cross-lingual features, MultiAzterTest also obtains competitive results, above all in the simple vs. complex distinction.
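To make concrete how few text characteristics a traditional formula relies on, here is a minimal Python sketch of the classic Flesch Reading Ease formula for English. It is an illustrative example only, not MultiAzterTest's implementation. The vowel-group syllable counter is a rough heuristic assumed for this sketch; production tools typically use pronunciation dictionaries or hyphenation rules instead.

```python
import re


def count_syllables(word: str) -> int:
    """Approximate English syllables as runs of vowels (a rough heuristic, assumed here)."""
    lower = word.lower()
    count = len(re.findall(r"[aeiouy]+", lower))
    # A trailing silent 'e' (as in "make") usually adds no syllable,
    # but a final "-le" (as in "table") usually does.
    if lower.endswith("e") and not lower.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier English text."""
    # Naive segmentation: sentences end at '.', '!' or '?'.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


if __name__ == "__main__":
    print(flesch_reading_ease("The cat sat on the mat."))
    print(flesch_reading_ease("Readability assessment determines textual complexity."))
```

The score depends on only two ratios, words per sentence and syllables per word, and its coefficients were fitted for English. By contrast, MultiAzterTest's 125+ measures also cover cohesion and other levels of language, and its classifiers are trained per language.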
