K. V. Arya

MultiFusionNet: Multilayer Multimodal Fusion of Deep Neural Networks for Chest X-Ray Image Classification

Jan 01, 2024

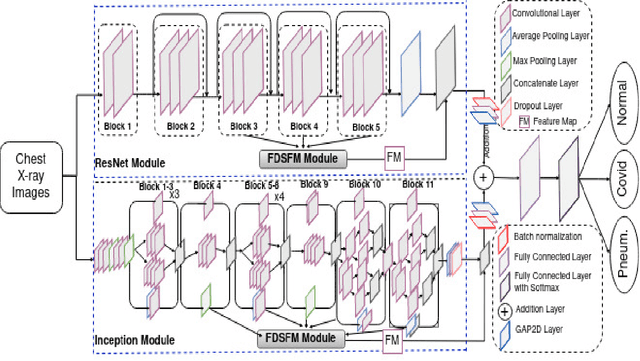

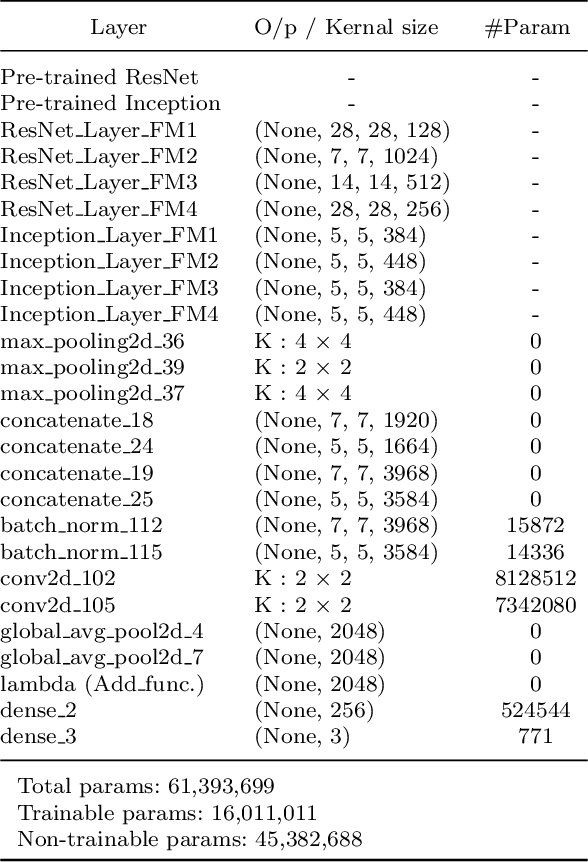

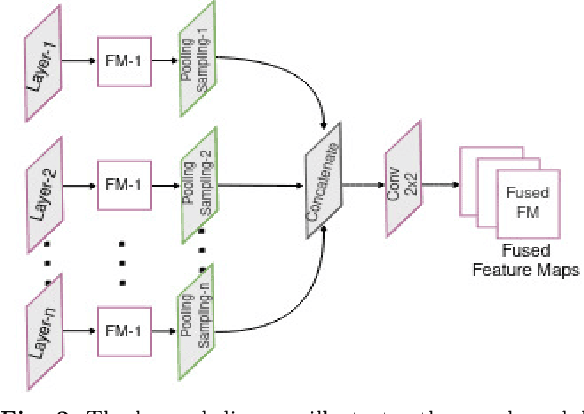

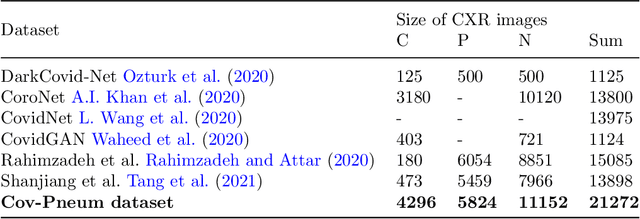

Abstract:Chest X-ray imaging is a critical diagnostic tool for identifying pulmonary diseases. However, manual interpretation of these images is time-consuming and error-prone. Automated systems utilizing convolutional neural networks (CNNs) have shown promise in improving the accuracy and efficiency of chest X-ray image classification. While previous work has mainly focused on using feature maps from the final convolution layer, there is a need to explore the benefits of leveraging additional layers for improved disease classification. Extracting robust features from limited medical image datasets remains a critical challenge. In this paper, we propose a novel deep learning-based multilayer multimodal fusion model that emphasizes extracting features from different layers and fusing them. Our disease detection model considers the discriminatory information captured by each layer. Furthermore, we propose the fusion of different-sized feature maps (FDSFM) module to effectively merge feature maps from diverse layers. The proposed model achieves a significantly higher accuracy of 97.21% and 99.60% for both three-class and two-class classifications, respectively. The proposed multilayer multimodal fusion model, along with the FDSFM module, holds promise for accurate disease classification and can also be extended to other disease classifications in chest X-ray images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge