Junjie Kang

Region-controlled Style Transfer

Oct 24, 2023

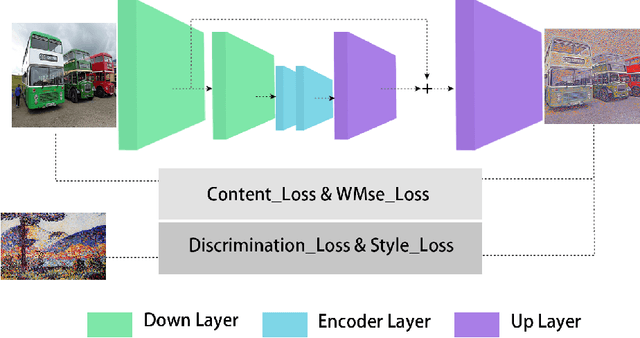

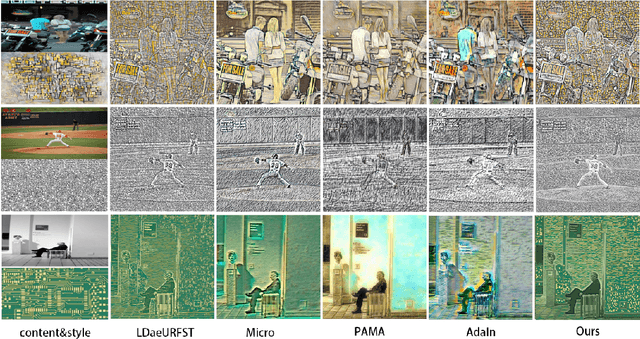

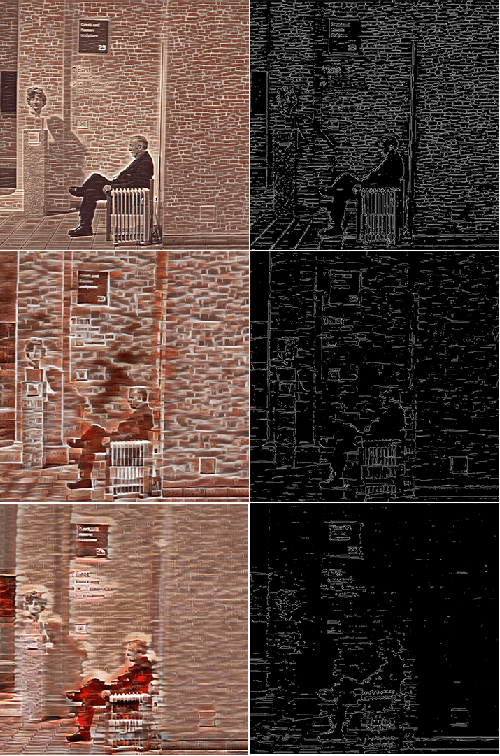

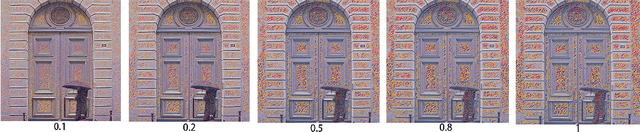

Abstract:Image style transfer is a challenging task in computational vision. Existing algorithms transfer the color and texture of style images by controlling the neural network's feature layers. However, they fail to control the strength of textures in different regions of the content image. To address this issue, we propose a training method that uses a loss function to constrain the style intensity in different regions. This method guides the transfer strength of style features in different regions based on the gradient relationship between style and content images. Additionally, we introduce a novel feature fusion method that linearly transforms content features to resemble style features while preserving their semantic relationships. Extensive experiments have demonstrated the effectiveness of our proposed approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge