Jung-Hsien Chiang

SGD-Mix: Enhancing Domain-Specific Image Classification with Label-Preserving Data Augmentation

May 17, 2025Abstract:Data augmentation for domain-specific image classification tasks often struggles to simultaneously address diversity, faithfulness, and label clarity of generated data, leading to suboptimal performance in downstream tasks. While existing generative diffusion model-based methods aim to enhance augmentation, they fail to cohesively tackle these three critical aspects and often overlook intrinsic challenges of diffusion models, such as sensitivity to model characteristics and stochasticity under strong transformations. In this paper, we propose a novel framework that explicitly integrates diversity, faithfulness, and label clarity into the augmentation process. Our approach employs saliency-guided mixing and a fine-tuned diffusion model to preserve foreground semantics, enrich background diversity, and ensure label consistency, while mitigating diffusion model limitations. Extensive experiments across fine-grained, long-tail, few-shot, and background robustness tasks demonstrate our method's superior performance over state-of-the-art approaches.

Debiasing Diffusion Model: Enhancing Fairness through Latent Representation Learning in Stable Diffusion Model

Mar 16, 2025Abstract:Image generative models, particularly diffusion-based models, have surged in popularity due to their remarkable ability to synthesize highly realistic images. However, since these models are data-driven, they inherit biases from the training datasets, frequently leading to disproportionate group representations that exacerbate societal inequities. Traditionally, efforts to debiase these models have relied on predefined sensitive attributes, classifiers trained on such attributes, or large language models to steer outputs toward fairness. However, these approaches face notable drawbacks: predefined attributes do not adequately capture complex and continuous variations among groups. To address these issues, we introduce the Debiasing Diffusion Model (DDM), which leverages an indicator to learn latent representations during training, promoting fairness through balanced representations without requiring predefined sensitive attributes. This approach not only demonstrates its effectiveness in scenarios previously addressed by conventional techniques but also enhances fairness without relying on predefined sensitive attributes as conditions. In this paper, we discuss the limitations of prior bias mitigation techniques in diffusion-based models, elaborate on the architecture of the DDM, and validate the effectiveness of our approach through experiments.

A Deep Learning Based Workflow for Detection of Lung Nodules With Chest Radiograph

Dec 21, 2021

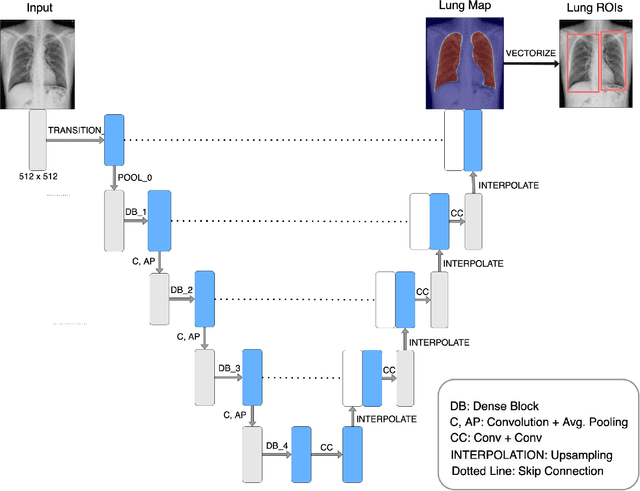

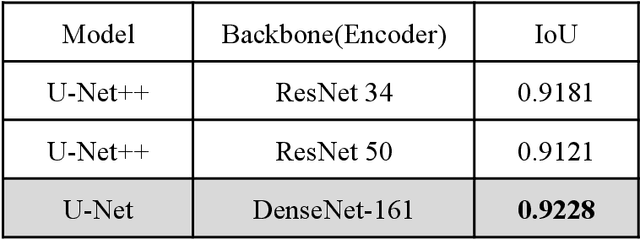

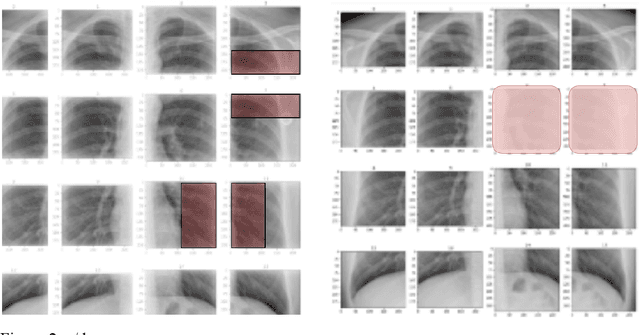

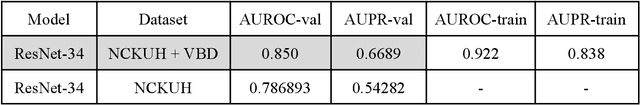

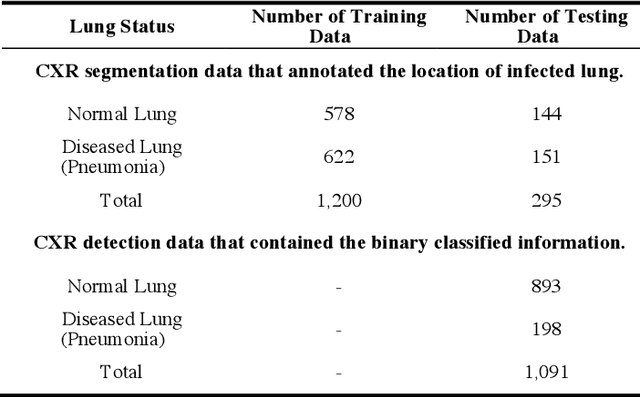

Abstract:PURPOSE: This study aimed to develop a deep learning-based tool to detect and localize lung nodules with chest radiographs(CXRs). We expected it to enhance the efficiency of interpreting CXRs and reduce the possibilities of delayed diagnosis of lung cancer. MATERIALS AND METHODS: We collected CXRs from NCKUH database and VBD, an open-source medical image dataset, as our training and validation data. A number of CXRs from the Ministry of Health and Welfare(MOHW) database served as our test data. We built a segmentation model to identify lung areas from CXRs, and sliced them into 16 patches. Physicians labeled the CXRs by clicking the patches. These labeled patches were then used to train and fine-tune a deep neural network(DNN) model, classifying the patches as positive or negative. Finally, we test the DNN model with the lung patches of CXRs from MOHW. RESULTS: Our segmentation model identified the lung regions well from the whole CXR. The Intersection over Union(IoU) between the ground truth and the segmentation result was 0.9228. In addition, our DNN model achieved a sensitivity of 0.81, specificity of 0.82, and AUROC of 0.869 in 98 of 125 cases. For the other 27 difficult cases, the sensitivity was 0.54, specificity 0.494, and AUROC 0.682. Overall, we obtained a sensitivity of 0.78, specificity of 0.79, and AUROC 0.837. CONCLUSIONS: Our two-step workflow is comparable to state-of-the-art algorithms in the sensitivity and specificity of localizing lung nodules from CXRs. Notably, our workflow provides an efficient way for specialists to label the data, which is valuable for relevant researches because of the relative rarity of labeled medical image data.

CUAB: Convolutional Uncertainty Attention Block Enhanced the Chest X-ray Image Analysis

May 05, 2021

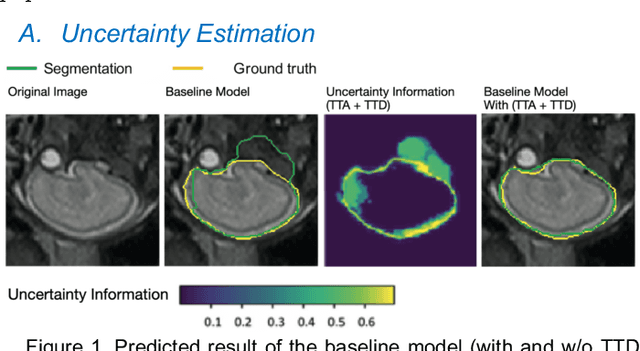

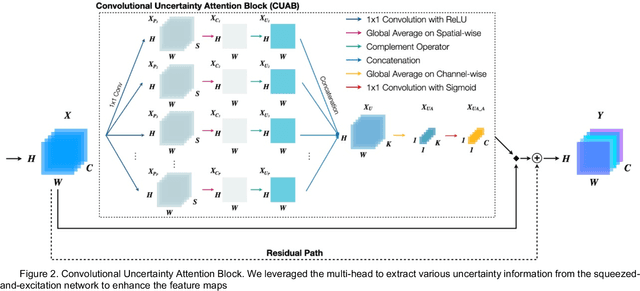

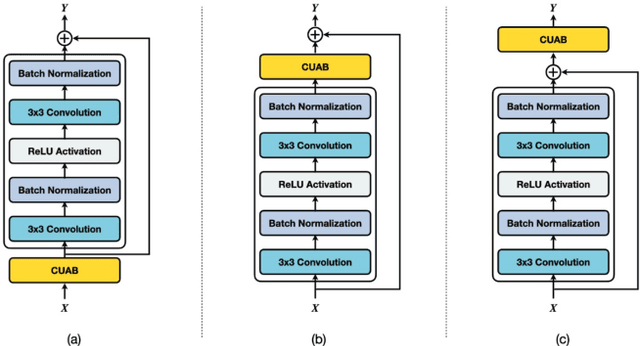

Abstract:In recent years, convolutional neural networks (CNNs) have been successfully implemented to various image recognition applications, such as medical image analysis, object detection, and image segmentation. Many studies and applications have been working on improving the performance of CNN algorithms and models. The strategies that aim to improve the performance of CNNs can be grouped into three major approaches: (1) deeper and wider network architecture, (2) automatic architecture search, and (3) convolutional attention block. Unlike approaches (1) and (2), the convolutional attention block approach is more flexible with lower cost. It enhances the CNN performance by extracting more efficient features. However, the existing attention blocks focus on enhancing the significant features, which lose some potential features in the uncertainty information. Inspired by the test time augmentation and test-time dropout approaches, we developed a novel convolutional uncertainty attention block (CUAB) that can leverage the uncertainty information to improve CNN-based models. The proposed module discovers potential information from the uncertain regions on feature maps in computer vision tasks. It is a flexible functional attention block that can be applied to any position in the convolutional block in CNN models. We evaluated the CUAB with notable backbone models, ResNet and ResNeXt, on a medical image segmentation task. The CUAB achieved a dice score of 73% and 84% in pneumonia and pneumothorax segmentation, respectively, thereby outperforming the original model and other notable attention approaches. The results demonstrated that the CUAB can efficiently utilize the uncertainty information to improve the model performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge