Josh Pritchard

Guided Weak Supervision for Action Recognition with Scarce Data to Assess Skills of Children with Autism

Dec 02, 2019

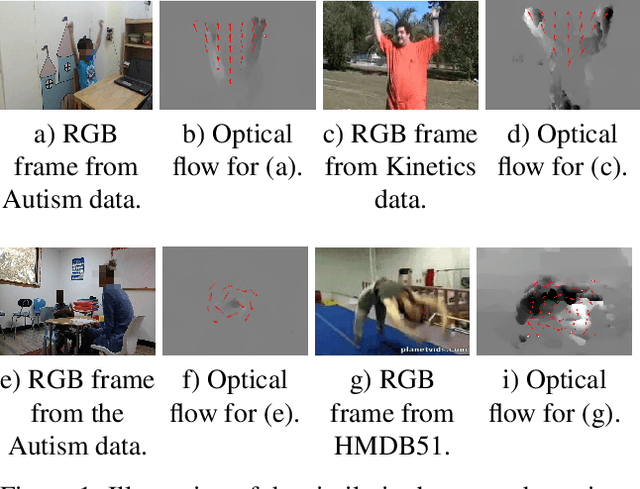

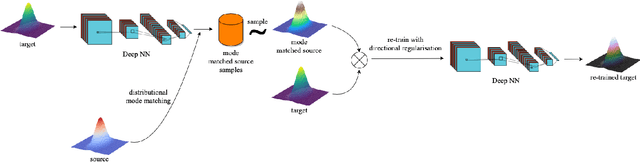

Abstract:Diagnostic and intervention methodologies for skill assessment of autism typically requires a clinician repetitively initiating several stimuli and recording the child's response. In this paper, we propose to automate the response measurement through video recording of the scene following the use of Deep Neural models for human action recognition from videos. However, supervised learning of neural networks demand large amounts of annotated data that are hard to come by. This issue is addressed by leveraging the `similarities' between the action categories in publicly available large-scale video action (source) datasets and the dataset of interest. A technique called guided weak supervision is proposed, where every class in the target data is matched to a class in the source data using the principle of posterior likelihood maximization. Subsequently, classifier on the target data is re-trained by augmenting samples from the matched source classes, along with a new loss encouraging inter-class separability. The proposed method is evaluated on two skill assessment autism datasets, SSBD and a real world Autism dataset comprising 37 children of different ages and ethnicity who are diagnosed with autism. Our proposed method is found to improve the performance of the state-of-the-art multi-class human action recognition models in-spite of supervision with scarce data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge