Jose C Principe

Exploiting Spatio-Temporal Structure with Recurrent Winner-Take-All Networks

Mar 15, 2017

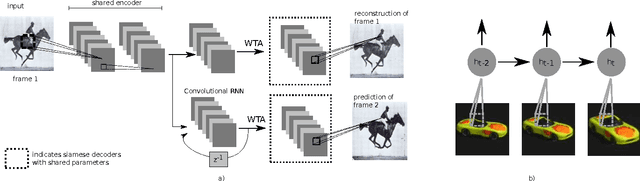

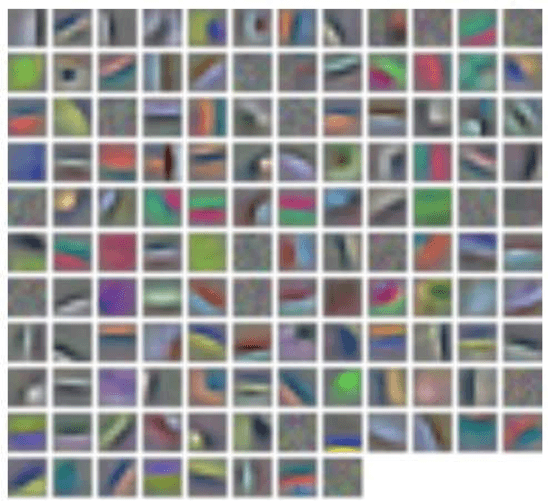

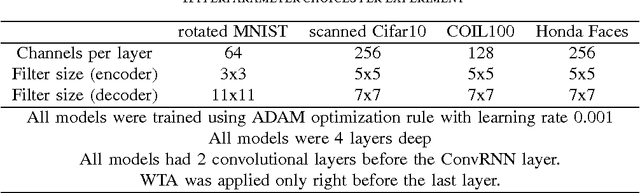

Abstract:We propose a convolutional recurrent neural network, with Winner-Take-All dropout for high dimensional unsupervised feature learning in multi-dimensional time series. We apply the proposedmethod for object recognition with temporal context in videos and obtain better results than comparable methods in the literature, including the Deep Predictive Coding Networks previously proposed by Chalasani and Principe.Our contributions can be summarized as a scalable reinterpretation of the Deep Predictive Coding Networks trained end-to-end with backpropagation through time, an extension of the previously proposed Winner-Take-All Autoencoders to sequences in time, and a new technique for initializing and regularizing convolutional-recurrent neural networks.

Information Theoretic-Learning Auto-Encoder

Mar 22, 2016

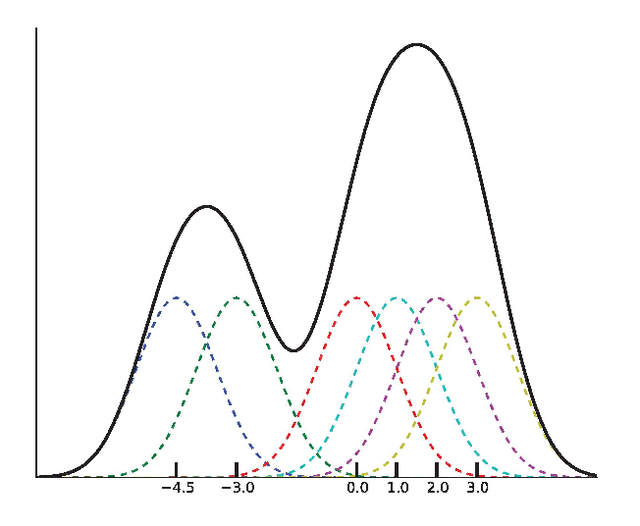

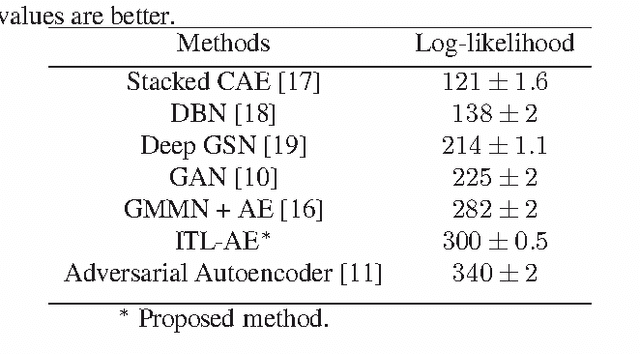

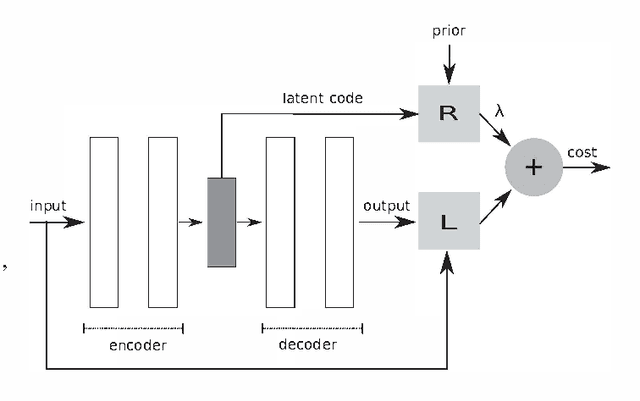

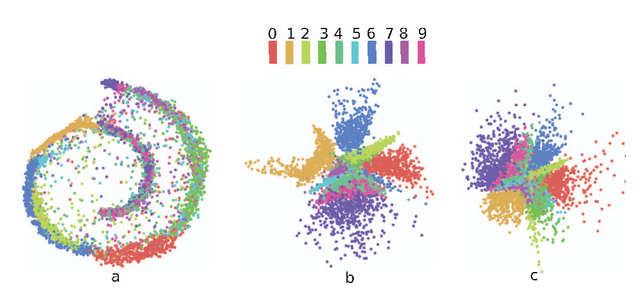

Abstract:We propose Information Theoretic-Learning (ITL) divergence measures for variational regularization of neural networks. We also explore ITL-regularized autoencoders as an alternative to variational autoencoding bayes, adversarial autoencoders and generative adversarial networks for randomly generating sample data without explicitly defining a partition function. This paper also formalizes, generative moment matching networks under the ITL framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge