Jonathan P Williams

Model-free generalized fiducial inference

Jul 24, 2023

Abstract:Motivated by the need for the development of safe and reliable methods for uncertainty quantification in machine learning, I propose and develop ideas for a model-free statistical framework for imprecise probabilistic prediction inference. This framework facilitates uncertainty quantification in the form of prediction sets that offer finite sample control of type 1 errors, a property shared with conformal prediction sets, but this new approach also offers more versatile tools for imprecise probabilistic reasoning. Furthermore, I propose and consider the theoretical and empirical properties of a precise probabilistic approximation to the model-free imprecise framework. Approximating a belief/plausibility measure pair by an [optimal in some sense] probability measure in the credal set is a critical resolution needed for the broader adoption of imprecise probabilistic approaches to inference in statistical and machine learning communities. It is largely undetermined in the statistical and machine learning literatures, more generally, how to properly quantify uncertainty in that there is no generally accepted standard of accountability of stated uncertainties. The research I present in this manuscript is aimed at motivating a framework for statistical inference with reliability and accountability as the guiding principles.

Conformal prediction for text infilling and part-of-speech prediction

Nov 04, 2021

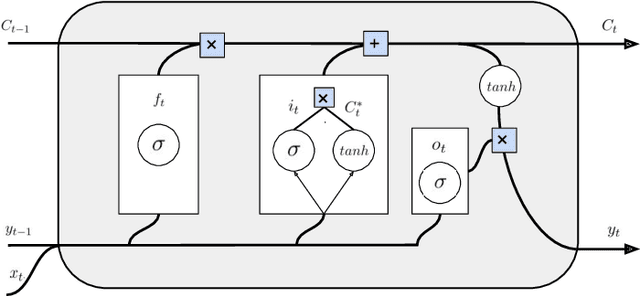

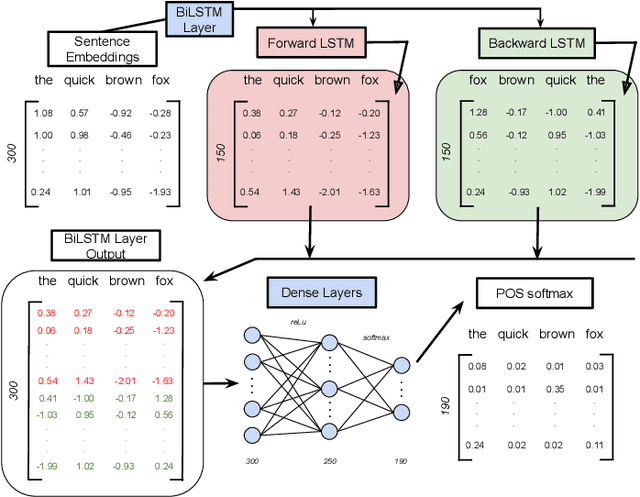

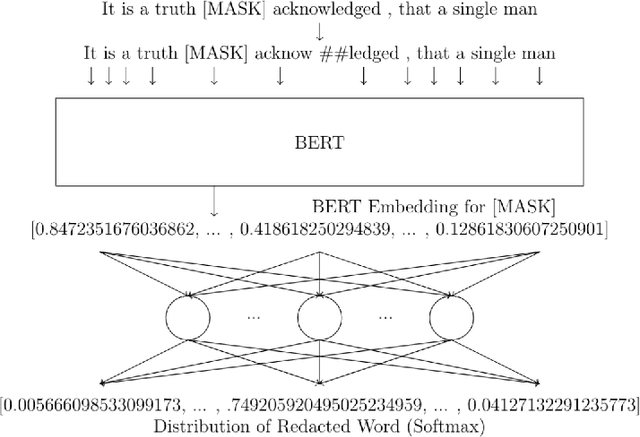

Abstract:Modern machine learning algorithms are capable of providing remarkably accurate point-predictions; however, questions remain about their statistical reliability. Unlike conventional machine learning methods, conformal prediction algorithms return confidence sets (i.e., set-valued predictions) that correspond to a given significance level. Moreover, these confidence sets are valid in the sense that they guarantee finite sample control over type 1 error probabilities, allowing the practitioner to choose an acceptable error rate. In our paper, we propose inductive conformal prediction (ICP) algorithms for the tasks of text infilling and part-of-speech (POS) prediction for natural language data. We construct new conformal prediction-enhanced bidirectional encoder representations from transformers (BERT) and bidirectional long short-term memory (BiLSTM) algorithms for POS tagging and a new conformal prediction-enhanced BERT algorithm for text infilling. We analyze the performance of the algorithms in simulations using the Brown Corpus, which contains over 57,000 sentences. Our results demonstrate that the ICP algorithms are able to produce valid set-valued predictions that are small enough to be applicable in real-world applications. We also provide a real data example for how our proposed set-valued predictions can improve machine generated audio transcriptions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge