John Thangarajah

SAGE: Generating Symbolic Goals for Myopic Models in Deep Reinforcement Learning

Mar 09, 2022

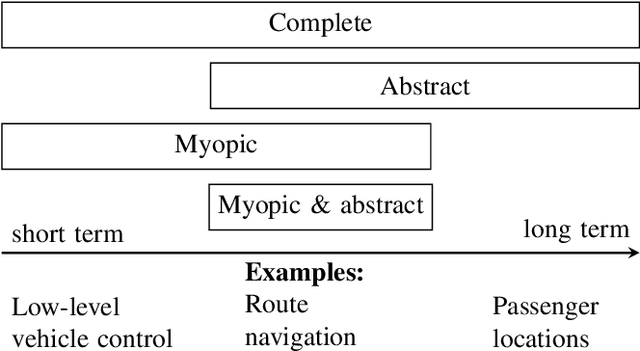

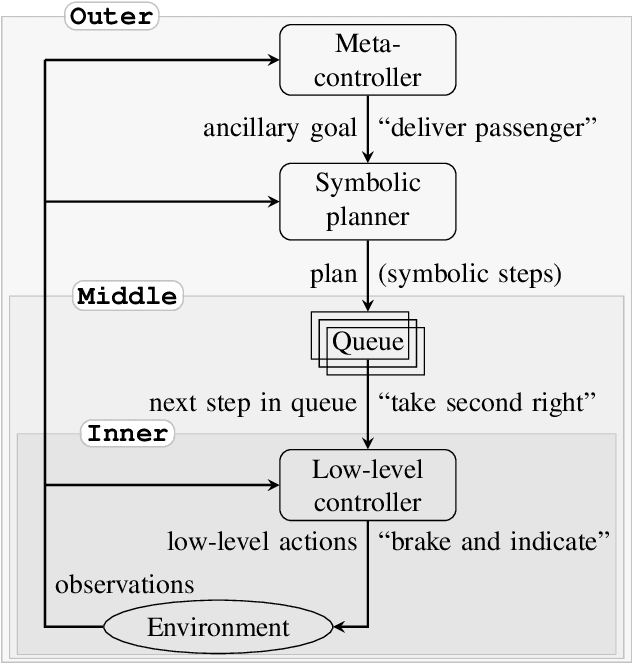

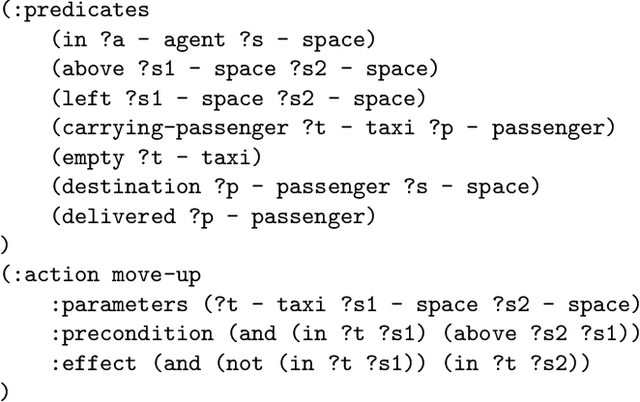

Abstract:Model-based reinforcement learning algorithms are typically more sample efficient than their model-free counterparts, especially in sparse reward problems. Unfortunately, many interesting domains are too complex to specify the complete models required by traditional model-based approaches. Learning a model takes a large number of environment samples, and may not capture critical information if the environment is hard to explore. If we could specify an incomplete model and allow the agent to learn how best to use it, we could take advantage of our partial understanding of many domains. Existing hybrid planning and learning systems which address this problem often impose highly restrictive assumptions on the sorts of models which can be used, limiting their applicability to a wide range of domains. In this work we propose SAGE, an algorithm combining learning and planning to exploit a previously unusable class of incomplete models. This combines the strengths of symbolic planning and neural learning approaches in a novel way that outperforms competing methods on variations of taxi world and Minecraft.

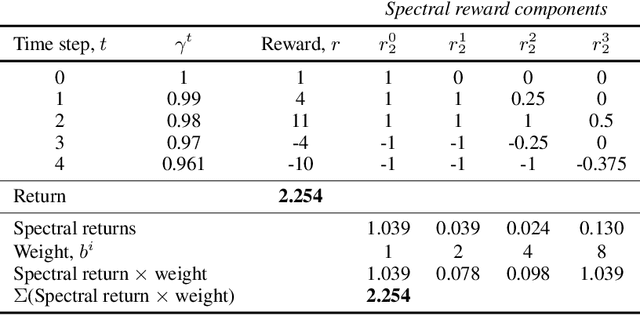

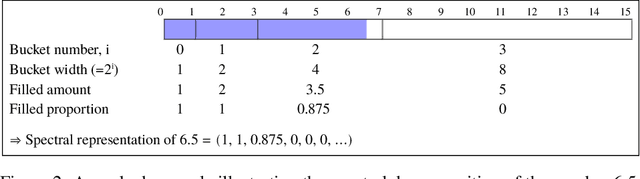

Adapting to Reward Progressivity via Spectral Reinforcement Learning

Apr 29, 2021

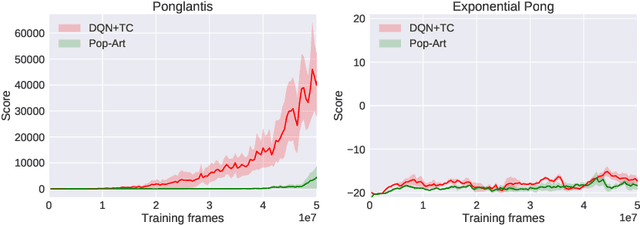

Abstract:In this paper we consider reinforcement learning tasks with progressive rewards; that is, tasks where the rewards tend to increase in magnitude over time. We hypothesise that this property may be problematic for value-based deep reinforcement learning agents, particularly if the agent must first succeed in relatively unrewarding regions of the task in order to reach more rewarding regions. To address this issue, we propose Spectral DQN, which decomposes the reward into frequencies such that the high frequencies only activate when large rewards are found. This allows the training loss to be balanced so that it gives more even weighting across small and large reward regions. In two domains with extreme reward progressivity, where standard value-based methods struggle significantly, Spectral DQN is able to make much farther progress. Moreover, when evaluated on a set of six standard Atari games that do not overtly favour the approach, Spectral DQN remains more than competitive: While it underperforms one of the benchmarks in a single game, it comfortably surpasses the benchmarks in three games. These results demonstrate that the approach is not overfit to its target problem, and suggest that Spectral DQN may have advantages beyond addressing reward progressivity.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge