Joe McCalmon

Deep Reinforcement Learning for Adaptive Exploration of Unknown Environments

May 04, 2021

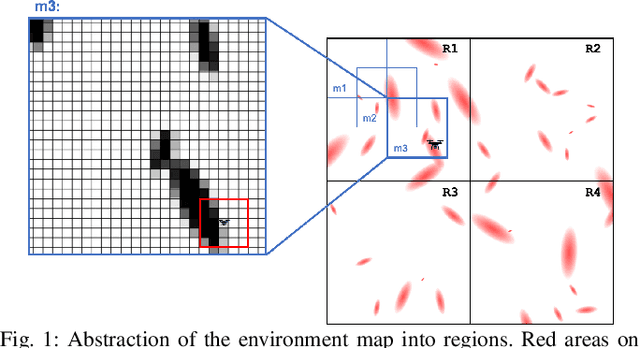

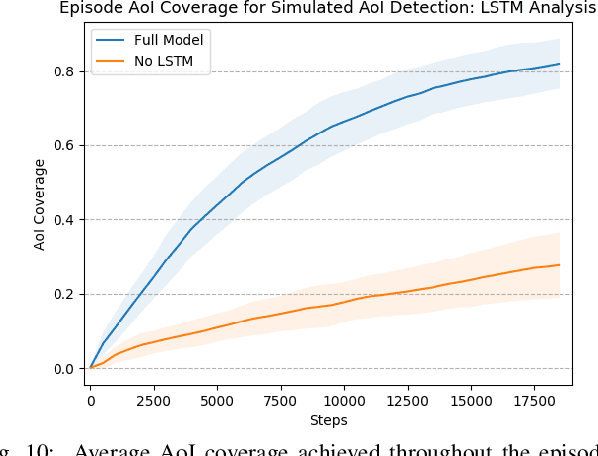

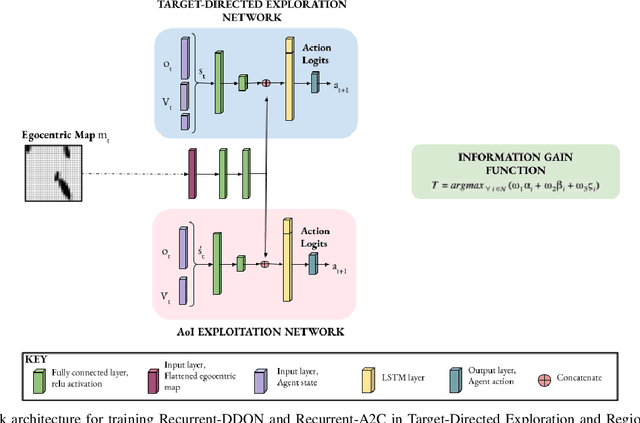

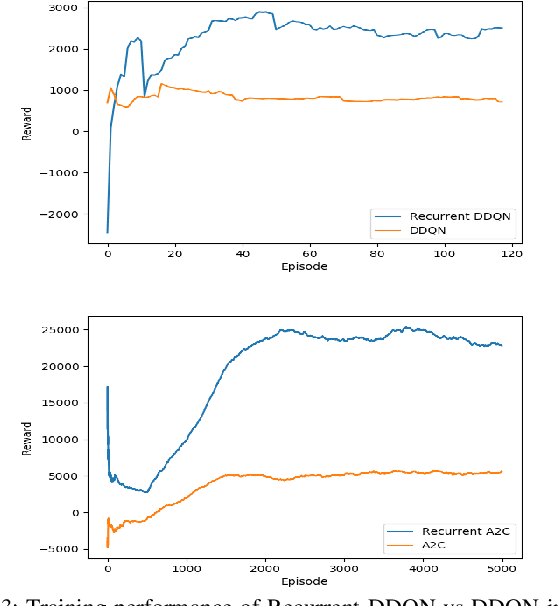

Abstract: Autonomous exploration is essential for unmanned aerial vehicles (UAVs) operating in unknown environments. Often, these missions start by building a map of the environment via pure exploration and then using (i.e. exploiting) the generated map for downstream navigation tasks. Accomplishing these navigation tasks in two separate steps is not always possible, and can even be disadvantageous, for UAVs deployed in outdoor and dynamically changing environments. Current exploration approaches either rely on a priori human-generated maps or use heuristics such as frontier-based exploration. Other approaches use learning but focus only on learning policies for specific tasks, either by using sample-inefficient random exploration or by making impractical assumptions about full map availability. In this paper, we develop an adaptive exploration approach that trades off exploration and exploitation in a single step for UAVs searching for areas of interest (AoIs) in unknown environments using Deep Reinforcement Learning (DRL). The proposed approach uses a map segmentation technique to decompose the environment map into smaller, tractable maps. A simple information gain function is then repeatedly computed to determine the best target region to search during each iteration of the process. DDQN and A2C algorithms are extended with a stack of LSTM layers and trained to generate optimal policies for exploration and exploitation, respectively. We tested our approach on 3 different tasks against 4 baselines. The results demonstrate that the proposed approach is capable of navigating through randomly generated environments and covering more AoIs in fewer time steps than the baselines.
