Jeffrey Giansiracusa

Algebraic Dynamical Systems in Machine Learning

Nov 06, 2023

Abstract: We introduce an algebraic analogue of dynamical systems, based on term rewriting. We show that a recursive function applied to the output of an iterated rewriting system defines a formal class of models into which all the main architectures for dynamic machine learning models (including recurrent neural networks, graph neural networks, and diffusion models) can be embedded. From the perspective of category theory, we also show that these algebraic models are a natural language for describing the compositionality of dynamic models. Furthermore, we propose that these models provide a template for generalising the above dynamic models to learning problems on structured or non-numerical data, including 'hybrid symbolic-numeric' models.
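The core construction in this abstract, a recursive function applied to the output of an iterated rewriting system, can be illustrated with a toy example. The sketch below is our own and is not the paper's formalism. The encoding of terms as nested tuples, the single rule `s → cell(s, x)`, the helper names `rewrite`, `iterate` and `evaluate`, and the scalar tanh readout with weights `w`, `u`, `b` are all hypothetical choices. Iterating the rule n times unrolls a recurrence into a term, and evaluating that term recursively reproduces an ordinary scalar recurrent network.

```python
import math
from typing import List, Union

# Hypothetical encoding: a term is either a constant symbol (a str)
# or a tuple (operator, *subterms).
Term = Union[str, tuple]


def rewrite(term: Term, lhs: str, rhs: Term) -> Term:
    """One rewriting step: replace every occurrence of the constant `lhs` by `rhs`."""
    if term == lhs:
        return rhs  # do not descend into rhs, so each call is exactly one step
    if isinstance(term, tuple):
        op, *args = term
        return (op, *(rewrite(a, lhs, rhs) for a in args))
    return term


def iterate(term: Term, lhs: str, rhs: Term, steps: int) -> Term:
    """The 'dynamics': apply the same rewriting step `steps` times."""
    for _ in range(steps):
        term = rewrite(term, lhs, rhs)
    return term


def evaluate(term: Term, inputs: List[float], h0: float = 0.0,
             w: float = 0.5, u: float = 1.0, b: float = 0.0) -> float:
    """Recursive readout: interpret each 'cell' node as a scalar tanh RNN update.

    The leaf 's' is the initial state h0; the leaf 'x' marks an input slot,
    filled from `inputs` by time step (the innermost cell is step 0).
    Assumes the term contains exactly len(inputs) nested 'cell' nodes.
    """
    n = len(inputs)

    def go(t: Term, depth: int) -> float:
        if t == "s":
            return h0
        op, state, _slot = t
        if op != "cell":
            raise ValueError(f"unknown operator {op!r}")
        h = go(state, depth + 1)
        x = inputs[n - 1 - depth]  # the root is the last step, the innermost the first
        return math.tanh(w * h + u * x + b)

    return go(term, 0)


if __name__ == "__main__":
    xs = [0.3, -1.2, 0.7]
    t = iterate("s", "s", ("cell", "s", "x"), len(xs))
    print(t)  # ('cell', ('cell', ('cell', 's', 'x'), 'x'), 'x')
    print(evaluate(t, xs))  # equals the usual loop h = tanh(w*h + u*x + b)
```

Keeping the rewriting (which builds structure) separate from the recursive readout (which assigns numbers) means the same unrolled term can be reused under a different interpretation: swapping `evaluate` for another recursive function changes the model without touching the dynamics.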

Quantitative analysis of phase transitions in two-dimensional XY models using persistent homology

Sep 22, 2021

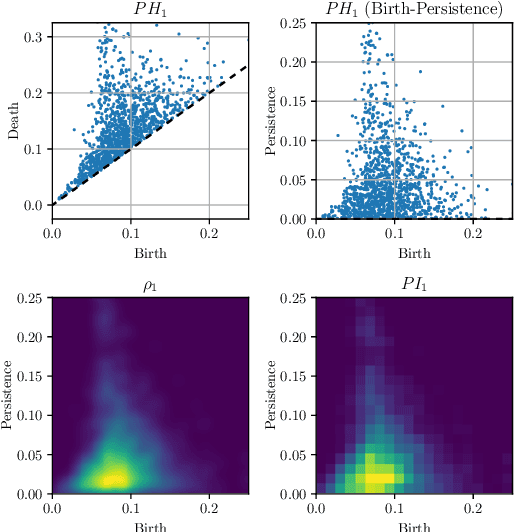

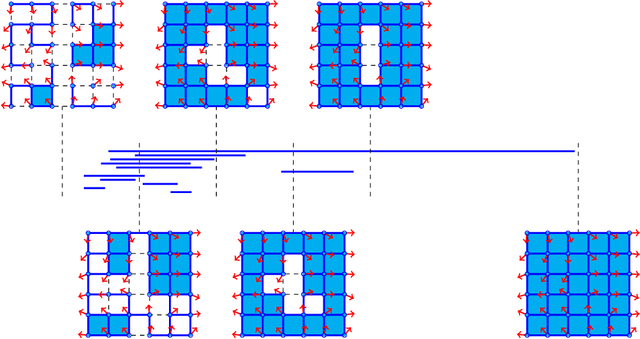

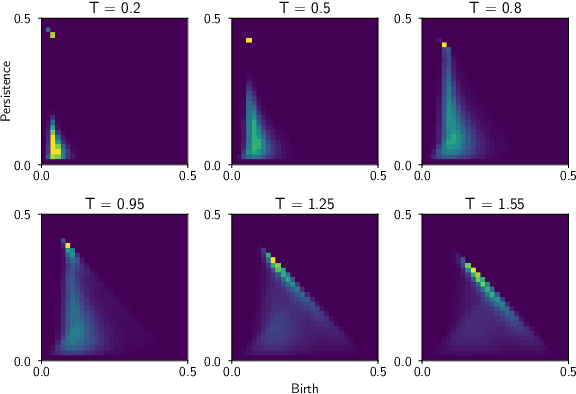

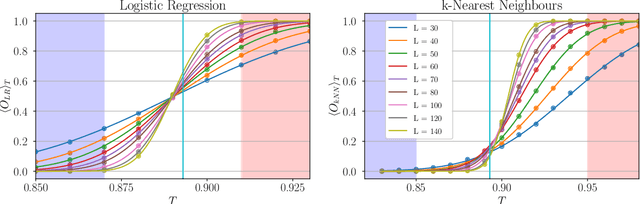

Abstract: We use persistent homology and persistence images as an observable of three different variants of the two-dimensional XY model in order to identify and study their phase transitions. We examine models with the classical XY action, a topological lattice action, and an action with an additional nematic term. In particular, we introduce a new way of computing the persistent homology of lattice spin model configurations and, by considering the fluctuations in the output of logistic regression and k-nearest neighbours models trained on persistence images, we develop a methodology to extract estimates of the critical temperature and the critical exponent of the correlation length. We put particular emphasis on finite-size scaling behaviour and on producing estimates with quantifiable error. For each model we successfully identify its phase transition(s) and obtain accurate determinations of the critical temperatures and critical exponents of the correlation length.
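Persistence images are a standard way of turning a persistence diagram into a fixed-length vector that a classifier such as logistic regression or k-nearest neighbours can consume. The abstract does not give the paper's settings or describe its new way of computing persistent homology of spin configurations, so the sketch below starts from a diagram that is assumed to be already computed. The grid size, ranges, Gaussian width `sigma`, linear persistence weighting and pixel-centre sampling are our own illustrative choices, and `persistence_image` and `flatten` are hypothetical helper names.

```python
import math
from typing import List, Tuple

# A persistence diagram as a list of (birth, death) pairs with finite deaths.
Diagram = List[Tuple[float, float]]


def persistence_image(diagram: Diagram, n_birth: int = 10, n_pers: int = 10,
                      sigma: float = 0.1,
                      birth_range: Tuple[float, float] = (0.0, 1.0),
                      pers_range: Tuple[float, float] = (0.0, 1.0)) -> List[List[float]]:
    """Simplified persistence image on a birth x persistence grid.

    Each point (b, d) is moved to (b, p) with p = d - b, weighted linearly by
    p / (max persistence), and replaced by an isotropic Gaussian of width sigma.
    The surface is sampled at pixel centres (an approximation to integrating
    over each pixel). Rows index persistence, columns index birth.
    """
    b_lo, b_hi = birth_range
    p_lo, p_hi = pers_range
    db = (b_hi - b_lo) / n_birth
    dp = (p_hi - p_lo) / n_pers
    max_pers = max((d - b for b, d in diagram), default=0.0)
    norm = 1.0 / (2.0 * math.pi * sigma ** 2)
    image = [[0.0] * n_birth for _ in range(n_pers)]
    for b, d in diagram:
        p = d - b
        weight = p / max_pers if max_pers > 0 else 0.0
        for i in range(n_pers):
            pc = p_lo + (i + 0.5) * dp  # persistence at pixel centre
            for j in range(n_birth):
                bc = b_lo + (j + 0.5) * db  # birth at pixel centre
                r2 = (bc - b) ** 2 + (pc - p) ** 2
                image[i][j] += weight * norm * math.exp(-r2 / (2.0 * sigma ** 2))
    return image


def flatten(image: List[List[float]]) -> List[float]:
    """Row-major feature vector, e.g. as input to logistic regression or k-NN."""
    return [v for row in image for v in row]


if __name__ == "__main__":
    # Toy diagram, standing in for the features of one spin configuration.
    diag = [(0.1, 0.3), (0.25, 0.8), (0.5, 0.55)]
    features = flatten(persistence_image(diag))
    print(len(features))  # 100
```

Weighting by persistence suppresses points near the diagonal, which are usually treated as noise. Sampling at pixel centres rather than integrating over each pixel keeps the sketch short at the cost of some accuracy on coarse grids.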
