Jared Barton

Counterfactual Prediction Under Selective Confounding

Oct 21, 2023

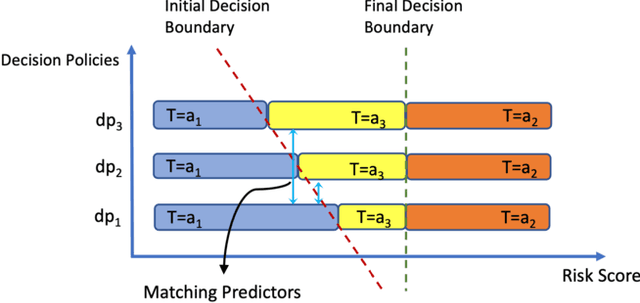

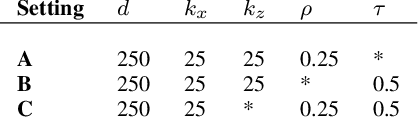

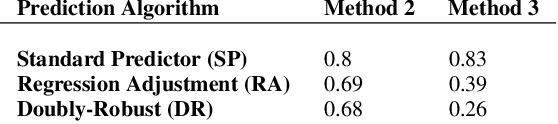

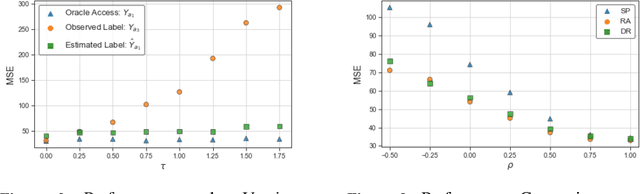

Abstract:This research addresses the challenge of conducting interpretable causal inference between a binary treatment and its resulting outcome when not all confounders are known. Confounders are factors that have an influence on both the treatment and the outcome. We relax the requirement of knowing all confounders under desired treatment, which we refer to as Selective Confounding, to enable causal inference in diverse real-world scenarios. Our proposed scheme is designed to work in situations where multiple decision-makers with different policies are involved and where there is a re-evaluation mechanism after the initial decision to ensure consistency. These assumptions are more practical to fulfill compared to the availability of all confounders under all treatments. To tackle the issue of Selective Confounding, we propose the use of dual-treatment samples. These samples allow us to employ two-step procedures, such as Regression Adjustment or Doubly-Robust, to learn counterfactual predictors. We provide both theoretical error bounds and empirical evidence of the effectiveness of our proposed scheme using synthetic and real-world child placement data. Furthermore, we introduce three evaluation methods specifically tailored to assess the performance in child placement scenarios. By emphasizing transparency and interpretability, our approach aims to provide decision-makers with a valuable tool. The source code repository of this work is located at https://github.com/sohaib730/CausalML.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge