Jan K. Argasiński

Stylometry recognizes human and LLM-generated texts in short samples

Jul 01, 2025

Abstract:The paper explores stylometry as a method to distinguish between texts created by Large Language Models (LLMs) and humans, addressing issues of model attribution, intellectual property, and ethical AI use. Stylometry has been used extensively to characterise the style and attribute authorship of texts. By applying it to LLM-generated texts, we identify their emergent writing patterns. The paper involves creating a benchmark dataset based on Wikipedia, with (a) human-written term summaries, (b) texts generated purely by LLMs (GPT-3.5/4, LLaMa 2/3, Orca, and Falcon), (c) processed through multiple text summarisation methods (T5, BART, Gensim, and Sumy), and (d) rephrasing methods (Dipper, T5). The 10-sentence long texts were classified by tree-based models (decision trees and LightGBM) using human-designed (StyloMetrix) and n-gram-based (our own pipeline) stylometric features that encode lexical, grammatical, syntactic, and punctuation patterns. The cross-validated results reached a performance of up to .87 Matthews correlation coefficient in the multiclass scenario with 7 classes, and accuracy between .79 and 1. in binary classification, with the particular example of Wikipedia and GPT-4 reaching up to .98 accuracy on a balanced dataset. Shapley Additive Explanations pinpointed features characteristic of the encyclopaedic text type, individual overused words, as well as a greater grammatical standardisation of LLMs with respect to human-written texts. These results show -- crucially, in the context of the increasingly sophisticated LLMs -- that it is possible to distinguish machine- from human-generated texts at least for a well-defined text type.

Three-Factor Learning in Spiking Neural Networks: An Overview of Methods and Trends from a Machine Learning Perspective

Apr 06, 2025Abstract:Three-factor learning rules in Spiking Neural Networks (SNNs) have emerged as a crucial extension to traditional Hebbian learning and Spike-Timing-Dependent Plasticity (STDP), incorporating neuromodulatory signals to improve adaptation and learning efficiency. These mechanisms enhance biological plausibility and facilitate improved credit assignment in artificial neural systems. This paper takes a view on this topic from a machine learning perspective, providing an overview of recent advances in three-factor learning, discusses theoretical foundations, algorithmic implementations, and their relevance to reinforcement learning and neuromorphic computing. In addition, we explore interdisciplinary approaches, scalability challenges, and potential applications in robotics, cognitive modeling, and AI systems. Finally, we highlight key research gaps and propose future directions for bridging the gap between neuroscience and artificial intelligence.

Pedestrian intention prediction in Adverse Weather Conditions with Spiking Neural Networks and Dynamic Vision Sensors

Jun 01, 2024

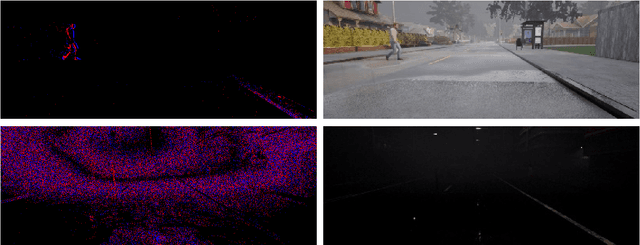

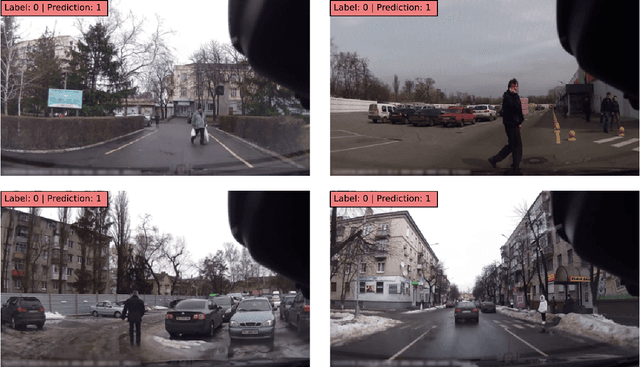

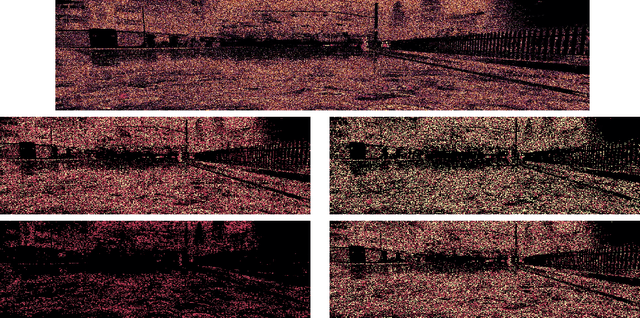

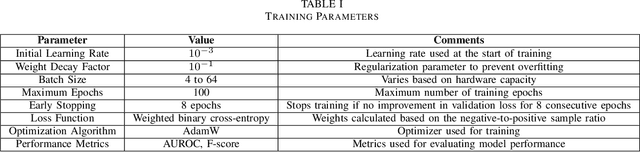

Abstract:This study examines the effectiveness of Spiking Neural Networks (SNNs) paired with Dynamic Vision Sensors (DVS) to improve pedestrian detection in adverse weather, a significant challenge for autonomous vehicles. Utilizing the high temporal resolution and low latency of DVS, which excels in dynamic, low-light, and high-contrast environments, we assess the efficiency of SNNs compared to traditional Convolutional Neural Networks (CNNs). Our experiments involved testing across diverse weather scenarios using a custom dataset from the CARLA simulator, mirroring real-world variability. SNN models, enhanced with Temporally Effective Batch Normalization, were trained and benchmarked against state-of-the-art CNNs to demonstrate superior accuracy and computational efficiency in complex conditions such as rain and fog. The results indicate that SNNs, integrated with DVS, significantly reduce computational overhead and improve detection accuracy in challenging conditions compared to CNNs. This highlights the potential of DVS combined with bio-inspired SNN processing to enhance autonomous vehicle perception and decision-making systems, advancing intelligent transportation systems' safety features in varying operational environments. Additionally, our research indicates that SNNs perform more efficiently in handling long perception windows and prediction tasks, rather than simple pedestrian detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge