James Ting-Ho Lo

Adaptively Solving the Local-Minimum Problem for Deep Neural Networks

Dec 25, 2020

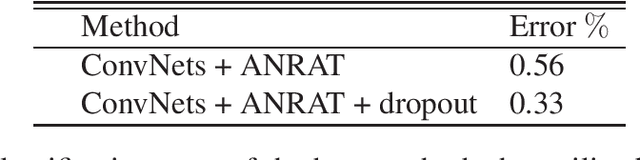

Abstract: This paper aims to overcome a fundamental problem in the theory and application of deep neural networks (DNNs). We propose a method that directly addresses the local-minimum problem in training DNNs. The method convexifies the cross-entropy loss by transforming it into a risk-averting error (RAE) criterion. To alleviate numerical difficulties, a normalized RAE (NRAE) is employed. The convexity region of the cross-entropy loss expands as its risk sensitivity index (RSI) increases. To make the best use of the convexity region, our method starts training with a large RSI, gradually reduces it, and switches to the RAE as soon as the RAE is numerically feasible. After training converges, the resulting deep learning machine is expected to lie inside the attraction basin of a global minimum of the cross-entropy loss. Numerical results are provided to show the effectiveness of the proposed method.
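The abstract does not state the NRAE formula, so the following is only a minimal sketch under an assumed form: NRAE = (1/RSI) · ln(mean over samples of exp(RSI · loss_k)), with loss_k the per-sample cross-entropy. The function names `nrae` and `rsi_schedule`, the geometric decay rule, and the sample loss values are all hypothetical illustrations, not the paper's implementation. The sketch shows why normalization helps numerically (the exponent is shifted by its maximum before exponentiating) and how the criterion moves from roughly the worst-case loss at a large RSI toward the ordinary mean loss as the RSI shrinks.

```python
import math


def nrae(losses, rsi):
    """Assumed NRAE form: (1/rsi) * ln(mean(exp(rsi * loss_k)))."""
    if rsi <= 0:
        raise ValueError("rsi must be positive")
    scaled = [rsi * loss for loss in losses]
    peak = max(scaled)
    # Subtract the largest exponent first so exp() cannot overflow,
    # then add it back outside the logarithm (log-sum-exp trick).
    total = sum(math.exp(s - peak) for s in scaled)
    return (peak + math.log(total / len(scaled))) / rsi


def rsi_schedule(start, factor, floor):
    """Hypothetical schedule: shrink the RSI geometrically until it reaches the floor."""
    rsi = start
    while rsi > floor:
        yield rsi
        rsi *= factor


# Made-up per-sample cross-entropy values, for illustration only.
per_sample_ce = [0.1, 0.4, 2.5]

for rsi in rsi_schedule(start=8.0, factor=0.5, floor=1.0):
    print(f"RSI={rsi:5.1f}  NRAE={nrae(per_sample_ce, rsi):.4f}")
```

In this sketch a very large RSI makes the criterion track the largest per-sample loss, while an RSI near zero makes it track the mean, which matches the abstract's idea of starting with a large RSI and reducing it gradually.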

Low-Order Model of Biological Neural Networks

Dec 12, 2020

Abstract: A biologically plausible low-order model (LOM) of biological neural networks is a recurrent hierarchical network of dendritic nodes/trees, spiking/nonspiking neurons, unsupervised/supervised covariance/accumulative learning mechanisms, feedback connections, and a scheme for maximal generalization. These component models are motivated and necessitated by the requirements that the LOM learn and retrieve easily without differentiation, optimization, or iteration, and that it cluster, detect, and recognize multiple/hierarchical corrupted, distorted, and occluded temporal and spatial patterns.
