Jalal Al-Afandi

MimosaNet: An Unrobust Neural Network Preventing Model Stealing

Jul 02, 2019

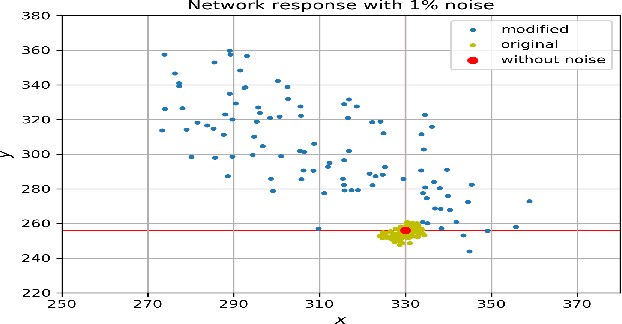

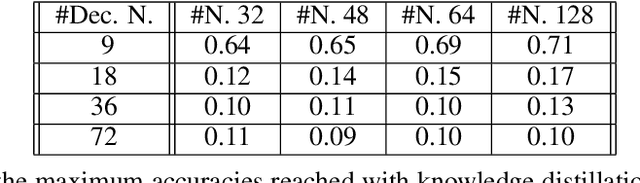

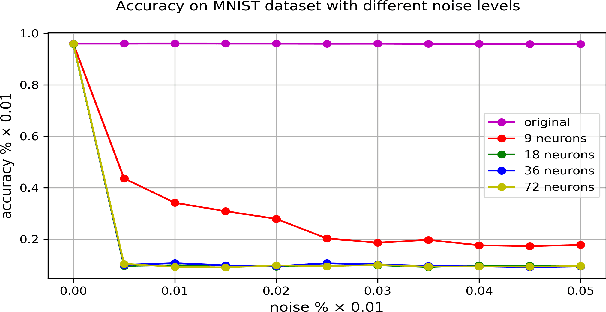

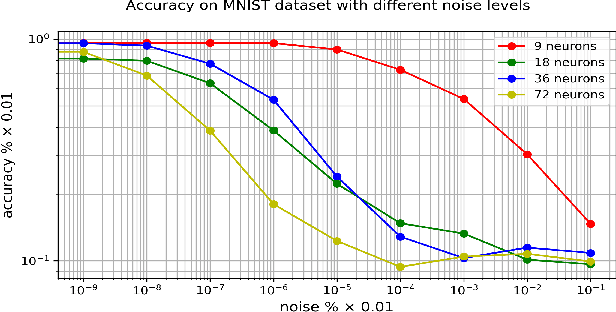

Abstract: Deep neural networks are robust to minor perturbations of their learned parameters: small modifications to the weights do not change the overall network response significantly. This leaves room for model stealing, where a malicious attacker can steal an already trained network, modify its weights, and claim the new network as their own intellectual property. In certain cases this can prevent the free distribution and application of networks in the embedded domain. In this paper, we propose a method for creating an equivalent version of an already trained fully connected deep neural network that can prevent network stealing: it produces the same responses and classification accuracy, but it is extremely sensitive to weight changes.
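To make the idea of an "equivalent but fragile" network concrete, here is a minimal sketch in plain Python. It is not the construction from the paper, which the abstract does not spell out. It assumes one simple way such behaviour could arise: appending two hidden neurons with identical incoming weights and opposite, large outgoing weights, so that their contributions cancel until the weights are modified. The names `add_cancelling_pair`, `perturb`, the layer sizes, and the values of `scale` and `eps` are all hypothetical choices made for illustration.

```python
import random

def relu(v):
    return v if v > 0.0 else 0.0

def forward(params, x):
    # One hidden ReLU layer followed by a linear output layer.
    W1, b1, W2, b2 = params
    hidden = [relu(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    return [sum(w * h for w, h in zip(row, hidden)) + b
            for row, b in zip(W2, b2)]

def add_cancelling_pair(params, scale, rng):
    # Hypothetical construction (an assumption, not the paper's method):
    # two extra hidden neurons share the same incoming weights and feed
    # every output with +scale and -scale, so they cancel each other.
    W1, b1, W2, b2 = params
    decoy_in = [rng.uniform(0.5, 1.0) for _ in W1[0]]
    W1_new = [row[:] for row in W1] + [decoy_in[:], decoy_in[:]]
    b1_new = b1[:] + [1.0, 1.0]  # positive bias keeps the pair active
    W2_new = [row[:] + [scale, -scale] for row in W2]
    return (W1_new, b1_new, W2_new, b2[:])

def perturb(params, eps, rng):
    # Multiply every parameter by an independent factor in [1-eps, 1+eps].
    def jitter(v):
        return v * (1.0 + eps * rng.uniform(-1.0, 1.0))
    W1, b1, W2, b2 = params
    return ([[jitter(w) for w in row] for row in W1],
            [jitter(b) for b in b1],
            [[jitter(w) for w in row] for row in W2],
            [jitter(b) for b in b2])

def max_abs_diff(a, b):
    return max(abs(p - q) for p, q in zip(a, b))

rng = random.Random(0)
n_in, n_hidden, n_out = 3, 4, 2
plain = ([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)],
         [rng.uniform(-1, 1) for _ in range(n_hidden)],
         [[rng.uniform(-1, 1) for _ in range(n_hidden)] for _ in range(n_out)],
         [rng.uniform(-1, 1) for _ in range(n_out)])
fragile = add_cancelling_pair(plain, scale=1e4, rng=rng)

x = [0.2, 0.7, 0.5]
y_plain = forward(plain, x)
y_fragile = forward(fragile, x)

equiv_gap = max_abs_diff(y_plain, y_fragile)
dev_plain = max_abs_diff(y_plain, forward(perturb(plain, 1e-3, rng), x))
dev_fragile = max_abs_diff(y_fragile, forward(perturb(fragile, 1e-3, rng), x))

print("gap between the two unmodified networks:", equiv_gap)
print("output change after 0.1% weight noise, plain:  ", dev_plain)
print("output change after 0.1% weight noise, fragile:", dev_fragile)
```

In this toy setting the two unmodified networks agree up to floating-point rounding, while the same relative weight noise shifts the fragile network's output far more than the plain one's. A real construction would also need the added neurons to be hard to spot and remove, which this sketch does not attempt.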
