Jakramate Bootkrajang

Boosting Ridge Regression for High Dimensional Data Classification

Mar 25, 2020

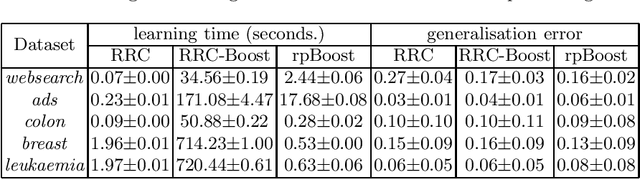

Abstract: Ridge regression is a well-established regression estimator that can conveniently be adapted for classification problems. One compelling reason is probably that ridge regression admits a closed-form solution, which simplifies the training phase. However, for high-dimensional problems, the closed-form solution, which involves inverting the regularised covariance matrix, is expensive to compute. The high computational cost of this operation also makes it difficult to construct an ensemble of ridge regressors. In this paper, we consider learning an ensemble of ridge regressors where each regressor is trained in its own randomly projected subspace. The subspace regressors are then combined using adaptive boosting. Experiments on five high-dimensional classification problems demonstrate the effectiveness of the proposed method in terms of learning time, and in some cases improved predictive performance is also observed.
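A minimal sketch of the idea, assuming details the abstract does not specify: each round draws a Gaussian random projection matrix `R` (d × k, entries scaled by 1/√k), fits ridge regression on the projected data `X @ R` with the closed form w = (ZᵀDZ + λI)⁻¹ZᵀDy (where D holds the boosting weights), and combines the sign predictions with discrete AdaBoost. Solving a k × k system instead of a d × d one is where the saving comes from. The function names (`weighted_ridge`, `boost_projected_ridge`, `predict`), the use of weighted ridge rather than resampling, and all parameter values are hypothetical illustrations, not the authors' implementation.

```python
import numpy as np

def weighted_ridge(Z, y, sample_weight, lam):
    """Closed-form weighted ridge: w = (Z^T D Z + lam*I)^{-1} Z^T D y."""
    k = Z.shape[1]
    ZtD = Z.T * sample_weight            # multiplies each sample's column of Z^T by its weight
    A = ZtD @ Z + lam * np.eye(k)        # only a k x k system, not d x d
    return np.linalg.solve(A, ZtD @ y)

def boost_projected_ridge(X, y, n_rounds=10, k=20, lam=1.0, seed=0):
    """Discrete AdaBoost over ridge classifiers, each in its own random subspace.

    Assumes labels y are in {-1, +1}. Returns a list of (alpha, R, w) triples.
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    D = np.full(n, 1.0 / n)              # boosting weights over training samples
    ensemble = []
    for _ in range(n_rounds):
        R = rng.standard_normal((d, k)) / np.sqrt(k)   # Gaussian random projection
        Z = X @ R
        w = weighted_ridge(Z, y, D, lam)
        pred = np.sign(Z @ w)
        pred[pred == 0] = 1
        err = D[pred != y].sum()
        if err >= 0.5:                   # no better than chance: skip this subspace
            continue
        err = max(err, 1e-10)            # avoid log(1/0) on a perfect fit
        alpha = 0.5 * np.log((1 - err) / err)
        D = D * np.exp(-alpha * y * pred)  # upweight misclassified samples
        D /= D.sum()
        ensemble.append((alpha, R, w))
    return ensemble

def predict(ensemble, X):
    """Weighted vote of the subspace regressors' sign predictions."""
    score = np.zeros(X.shape[0])
    for alpha, R, w in ensemble:
        p = np.sign(X @ R @ w)
        p[p == 0] = 1
        score += alpha * p
    return np.where(score >= 0, 1, -1)
```

For example, on synthetic data with n = 200 samples and d = 1000 features, `boost_projected_ridge(X, y, k=20)` only ever solves 20 × 20 linear systems. The projection dimension `k` trades the cost per round against how much class-relevant signal survives the projection.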

Boosting in the presence of label noise

Sep 26, 2013

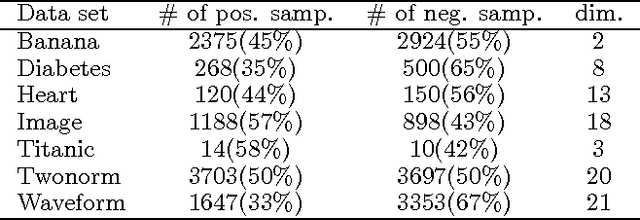

Abstract: Boosting is known to be sensitive to label noise. We studied two approaches to improving AdaBoost's robustness against labelling errors. One is to employ a label-noise-robust classifier as the base learner; the other is to modify the AdaBoost algorithm itself to be more robust. Empirical evaluation shows that a committee of robust classifiers, although it converges faster than non-label-noise-aware AdaBoost, is still susceptible to label noise. However, pairing it with the new robust boosting algorithm we propose here results in an algorithm that is more resilient to mislabelling.
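The sensitivity to label noise comes from AdaBoost's exponential reweighting: samples the current base learner gets wrong are upweighted, and a mislabelled sample is exactly the kind that a good base learner keeps getting wrong. The sketch below uses standard discrete AdaBoost with decision stumps on 1-D data, not the robust variant proposed in the paper (whose update rule the abstract does not give). The data, the flipped indices and the helper names (`fit_stump`, `adaboost_weights`) are hypothetical. After one round, the best stump misclassifies only the four flipped samples, so they end up holding half of the total weight.

```python
import numpy as np

def fit_stump(x, y, D):
    """Exhaustive search for the 1-D stump with the lowest weighted error."""
    xs = np.unique(x)                    # sorted unique values
    thresholds = np.concatenate(([xs[0] - 1.0], (xs[:-1] + xs[1:]) / 2, [xs[-1] + 1.0]))
    best = None
    for t in thresholds:
        for s in (1, -1):                # polarity: predict s when x > t, else -s
            pred = np.where(x > t, s, -s)
            err = D[pred != y].sum()
            if best is None or err < best[0]:
                best = (err, t, s)
    return best

def adaboost_weights(x, y, n_rounds):
    """Run discrete AdaBoost and return the final sample-weight distribution."""
    n = len(x)
    D = np.full(n, 1.0 / n)
    for _ in range(n_rounds):
        err, t, s = fit_stump(x, y, D)
        err = np.clip(err, 1e-10, 1 - 1e-10)
        alpha = 0.5 * np.log((1 - err) / err)
        pred = np.where(x > t, s, -s)
        D = D * np.exp(-alpha * y * pred)  # misclassified samples grow by e^alpha
        D /= D.sum()
    return D

# Clean labels are sign(x); four labels are flipped to simulate mislabelling.
x = np.linspace(-1, 1, 100)
y = np.sign(x)
noisy = np.array([10, 30, 70, 90])
y[noisy] *= -1
for T in (1, 5, 20):
    D = adaboost_weights(x, y, T)
    print(T, D[noisy].sum())             # share of weight on the 4% mislabelled samples
```

The one-round result (a 0.5 share) follows from a general property of AdaBoost: after each update, the samples misclassified by that round's learner carry exactly half of the weight. This is why later base learners are pushed toward fitting the mislabelled points.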
