Jaeeun Jang

Artificial Association Neural Networks

Nov 22, 2021

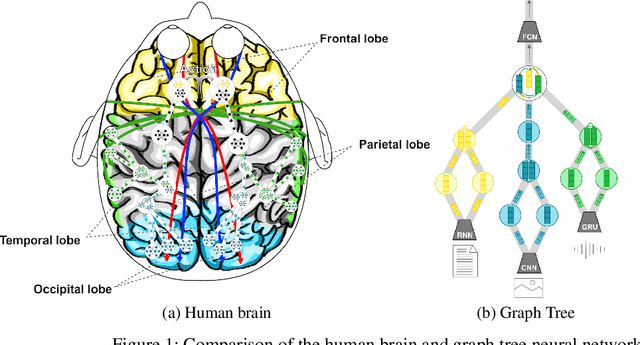

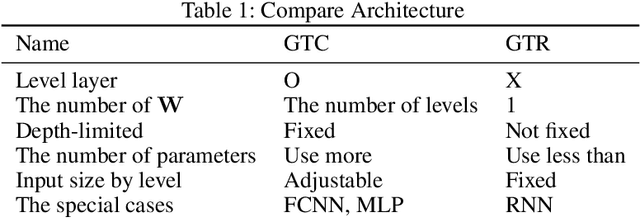

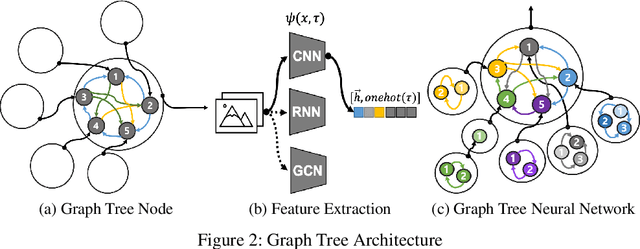

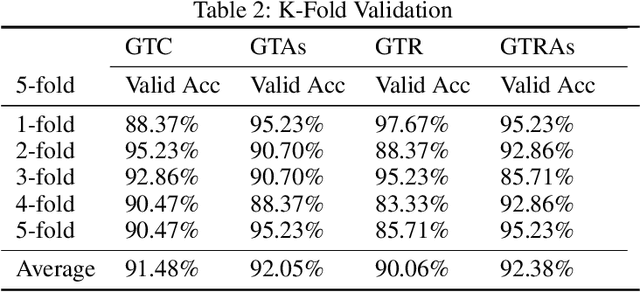

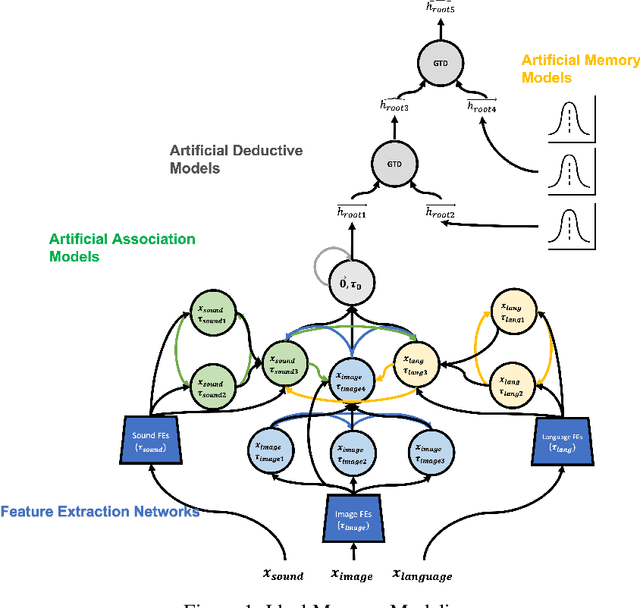

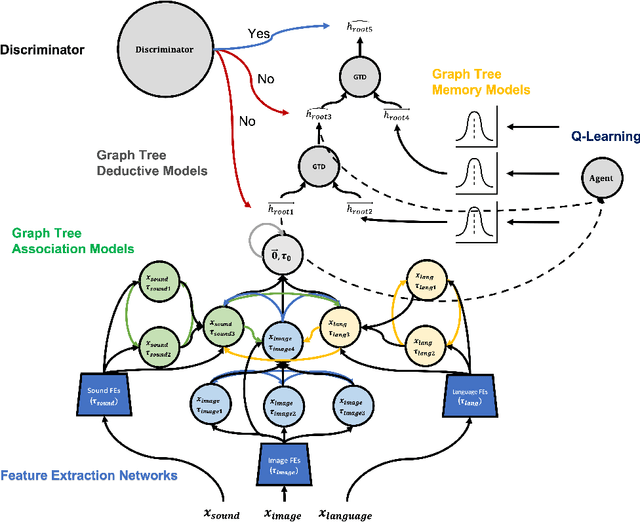

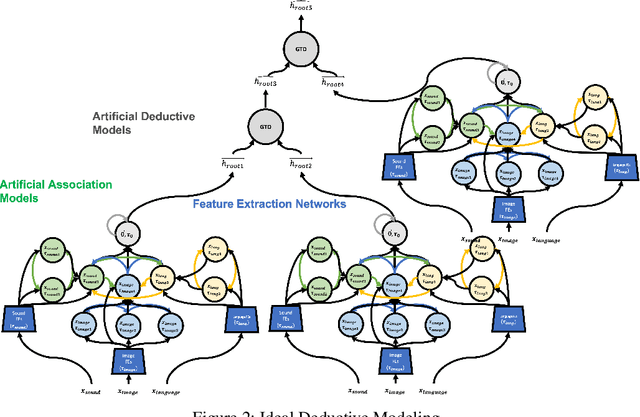

Abstract: In the field of deep learning, various architectures have been developed. However, most studies are limited to specific tasks or datasets because of their fixed layer structure. Rather than expressing the structure that delivers information as a network model, this paper expresses it as a data structure called an association tree (AT). We propose artificial association networks (AANs), designed to solve the problems of existing networks by analyzing the structure of human neural networks. Defining the starting and ending points of a path in a single graph is difficult, and a tree cannot express the relationships among sibling nodes. In contrast, an AT can express leaf and root nodes as the starting and ending points of a path, as well as the relationships among sibling nodes. Instead of using a fixed sequence of layers, we create an AT for each data sample and train AANs according to the tree's structure. AANs learn in a data-driven manner: the number of convolutions varies with the depth of the tree. Moreover, AANs can learn various types of datasets simultaneously through recursive learning. Depth-first convolution (DFC) encodes the interaction result from the leaf nodes to the root node in a bottom-up approach, and depth-first deconvolution (DFD) decodes the interaction result from the root node to the leaf nodes in a top-down approach. We conducted three experiments. The first tested whether data can be processed by combining AANs with feature extraction networks. The second compared the performance of networks trained separately on image, sound, tree-structured, and graph-structured datasets with the performance obtained by connecting these networks and training on all datasets simultaneously. The third tested whether the output of AANs can embed all of the data in the AT. As a result, AANs learned without significant performance degradation.
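The bottom-up encoding described in this abstract can be illustrated with a small sketch. It is not the authors' implementation: the `ATNode` class, the `sibling_edges` field, and the averaging rule are assumptions chosen for illustration, and the learned convolution weights are replaced by plain means. It only shows how each node is encoded after its children, how sibling relationships can enter the computation, and how the number of aggregation steps follows the depth of the tree.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ATNode:
    """Hypothetical association-tree node (names are illustrative)."""
    feature: List[float]
    children: List["ATNode"] = field(default_factory=list)
    # Pairs of indices into `children` that are related to each other.
    sibling_edges: List[Tuple[int, int]] = field(default_factory=list)


def mean_vectors(vectors: List[List[float]]) -> List[float]:
    """Element-wise mean of equally sized vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def depth_first_encode(node: ATNode) -> List[float]:
    """Encode a subtree bottom-up: leaves first, root last."""
    if not node.children:
        return list(node.feature)
    # Depth-first: every child subtree is encoded before its parent.
    child_codes = [depth_first_encode(child) for child in node.children]
    # One round of mixing among related siblings (each child also sees itself).
    neighbours = {i: [i] for i in range(len(child_codes))}
    for i, j in node.sibling_edges:
        neighbours[i].append(j)
        neighbours[j].append(i)
    mixed = [
        mean_vectors([child_codes[k] for k in neighbours[i]])
        for i in range(len(child_codes))
    ]
    # Pool the mixed children and combine with the parent's own feature.
    pooled = mean_vectors(mixed)
    return [p + f for p, f in zip(pooled, node.feature)]


def tree_depth(node: ATNode) -> int:
    """Number of aggregation steps on the longest leaf-to-root path."""
    if not node.children:
        return 0
    return 1 + max(tree_depth(child) for child in node.children)


# Three leaves whose sibling relations form a chain: 0 - 1 - 2.
leaves = [ATNode([6.0]), ATNode([0.0]), ATNode([3.0])]
root = ATNode([1.0], children=leaves, sibling_edges=[(0, 1), (1, 2)])
root_code = depth_first_encode(root)
```

A top-down decoder in the spirit of DFD would traverse the same tree in the opposite direction, passing the root code back toward the leaves.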

Associational Memory Networks

Nov 19, 2021

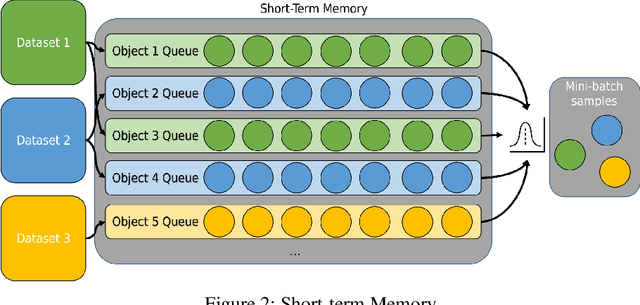

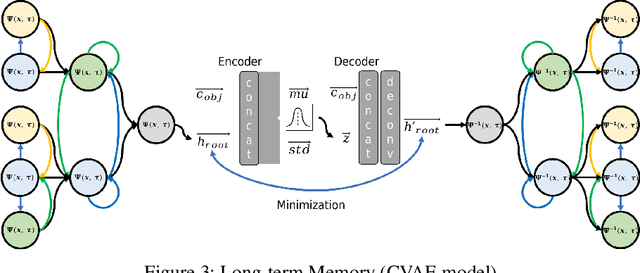

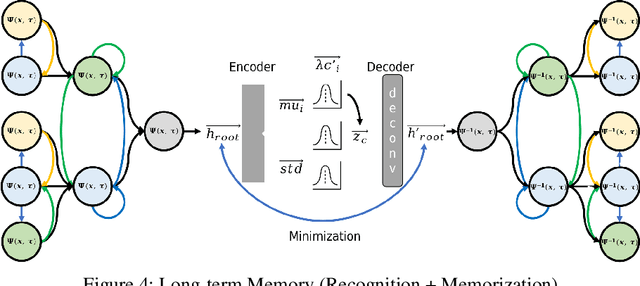

Abstract: We introduce associational memory networks (AMNs), which memorize and recall arbitrary data. The network has two memories: a queue-structured short-term memory, which addresses the class imbalance problem, and a long-term memory, which stores the distribution of objects. We describe how these memories are used to store and generate various datasets.
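The abstract does not say how the queue-structured short-term memory counters class imbalance. One plausible reading, sketched below purely as an assumption, is a bounded first-in-first-out queue per class, so that a frequent class cannot crowd out a rare one and batches can be drawn evenly across classes. The `ShortTermMemory` class and its method names are hypothetical.

```python
import random
from collections import defaultdict, deque


class ShortTermMemory:
    """Hypothetical per-class FIFO memory (not the authors' design)."""

    def __init__(self, capacity_per_class: int):
        # Each class gets its own bounded queue; the oldest item is
        # dropped automatically once the queue is full.
        self.queues = defaultdict(lambda: deque(maxlen=capacity_per_class))

    def store(self, sample, label) -> None:
        self.queues[label].append(sample)

    def balanced_batch(self, per_class: int, rng=random):
        """Draw the same number of samples from every stored class."""
        batch = []
        for label, queue in self.queues.items():
            # Sampling with replacement lets rare classes fill their share.
            picks = rng.choices(list(queue), k=per_class)
            batch.extend((sample, label) for sample in picks)
        return batch


memory = ShortTermMemory(capacity_per_class=5)
for i in range(100):          # frequent class
    memory.store(f"a{i}", "common")
for i in range(3):            # rare class
    memory.store(f"b{i}", "rare")
batch = memory.balanced_batch(per_class=4, rng=random.Random(0))
```

With this layout the frequent class keeps only its five most recent samples, while the rare class keeps all three, and every batch contains the same number of items from each.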

Imagine Networks

Nov 17, 2021

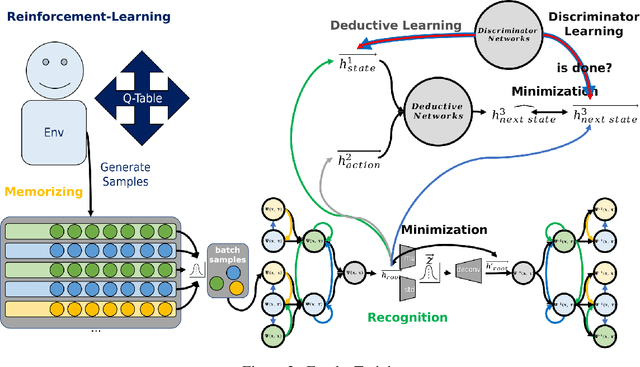

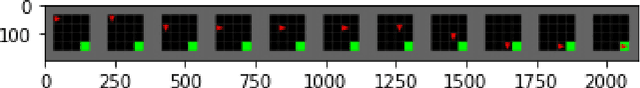

Abstract: In this paper, we introduce an imagine network that can simulate itself through artificial association networks. Association, deduction, and memory networks are trained, and a network is then built by combining a discriminator with reinforcement learning models. This model can learn from various datasets or from data samples generated in environments, and it can generate new data samples.

Associational Deductive Networks

Nov 17, 2021

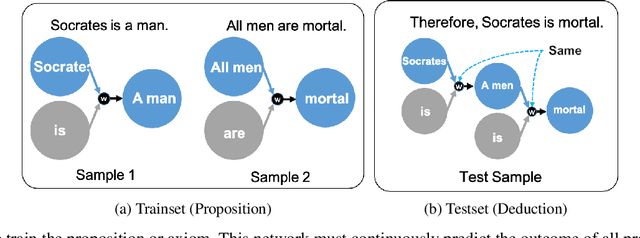

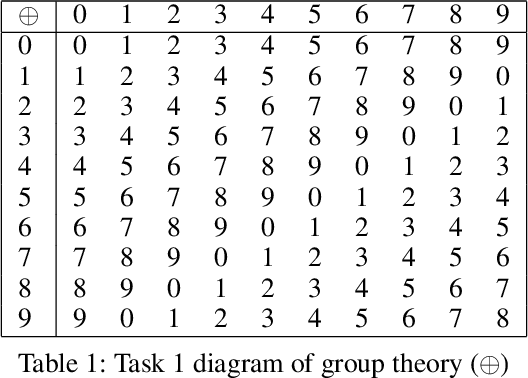

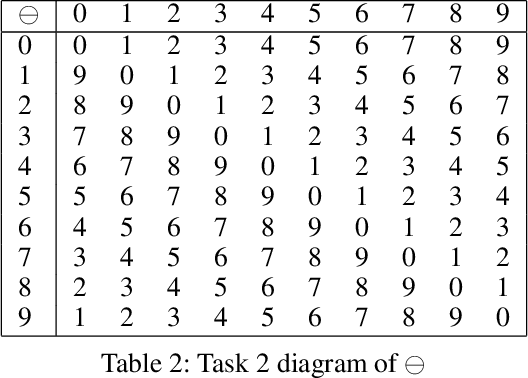

Abstract: We introduce associational deductive networks (ADNs), networks that perform deductive reasoning. Higher-order reasoning requires combining various axioms and feeding the results back into other axioms to produce new relationships and results. For example, given the two propositions "Socrates is a man." and "All men are mortal.", one can infer the new proposition "Therefore, Socrates is mortal." For evaluation, we apply the approach to group theory using MNIST, a dataset of handwritten digit images, and show the results of deductive learning.
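The Socrates example can be written out as a small symbolic forward-chaining loop. This is only an illustration of the deductive step itself, where derived results are fed back in as new premises. It is not an ADN, which is a neural network, and the `forward_chain` function and the tuple encoding of facts and rules are assumptions made for this sketch.

```python
def forward_chain(facts, rules):
    """Repeatedly apply rules until no new facts can be derived.

    facts: set of (predicate, subject) pairs, e.g. ("man", "Socrates")
    rules: list of (premise, conclusion) pairs meaning
           "every X that is <premise> is also <conclusion>"
    """
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, subject in list(derived):
                new_fact = (conclusion, subject)
                if predicate == premise and new_fact not in derived:
                    # A derived fact can itself trigger further rules
                    # on the next pass of the outer loop.
                    derived.add(new_fact)
                    changed = True
    return derived


facts = {("man", "Socrates")}            # "Socrates is a man."
rules = [("man", "mortal")]              # "All men are mortal."
conclusions = forward_chain(facts, rules)
```

After the call, `conclusions` contains `("mortal", "Socrates")`, which corresponds to "Therefore, Socrates is mortal."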
