Jae-Hong Lee

Attribution Mask: Filtering Out Irrelevant Features By Recursively Focusing Attention on Inputs of DNNs

Feb 15, 2021

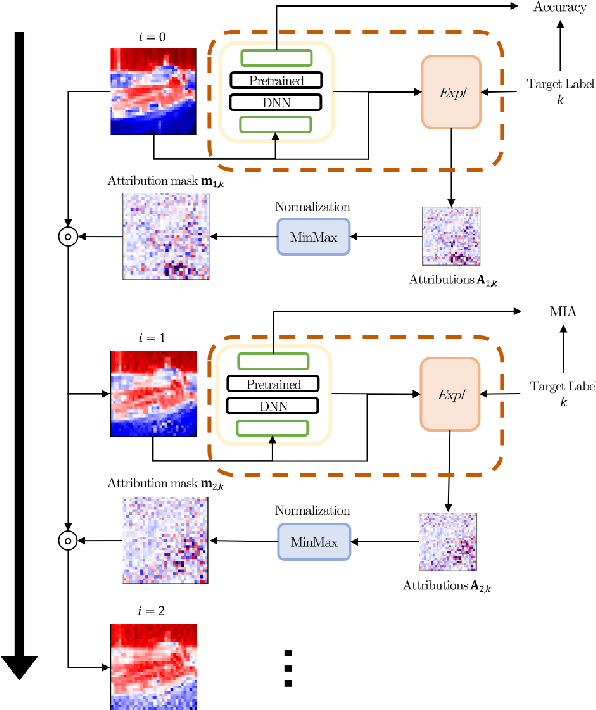

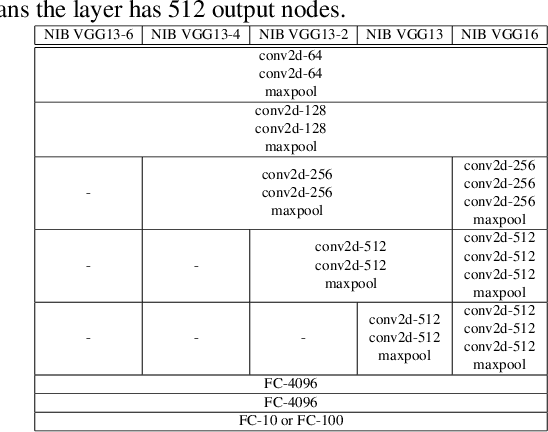

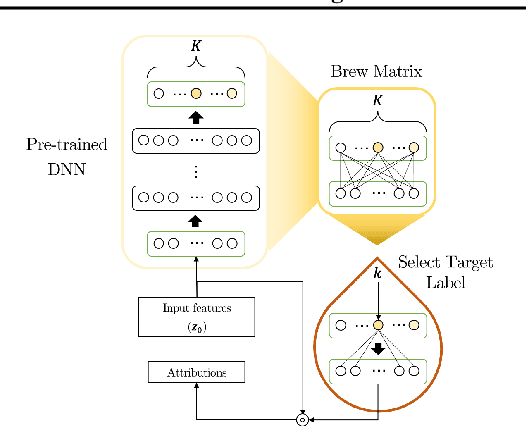

Abstract:Attribution methods calculate attributions that visually explain the predictions of deep neural networks (DNNs) by highlighting important parts of the input features. In particular, gradient-based attribution (GBA) methods are widely used because they can be easily implemented through automatic differentiation. In this study, we use the attributions that filter out irrelevant parts of the input features and then verify the effectiveness of this approach by measuring the classification accuracy of a pre-trained DNN. This is achieved by calculating and applying an \textit{attribution mask} to the input features and subsequently introducing the masked features to the DNN, for which the mask is designed to recursively focus attention on the parts of the input related to the target label. The accuracy is enhanced under a certain condition, i.e., \textit{no implicit bias}, which can be derived based on our theoretical insight into compressing the DNN into a single-layer neural network. We also provide Gradient\,*\,Sign-of-Input (GxSI) to obtain the attribution mask that further improves the accuracy. As an example, on CIFAR-10 that is modified using the attribution mask obtained from GxSI, we achieve the accuracy ranging from 99.8\% to 99.9\% without additional training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge