Hongwen Hu

MA-CDMR: An Intelligent Cross-domain Multicast Routing Method based on Multiagent Deep Reinforcement Learning in Multi-domain SDWN

Sep 11, 2024

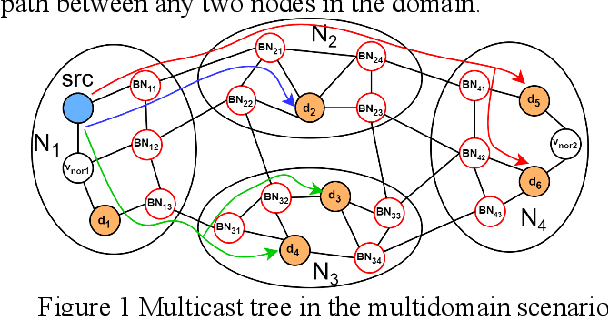

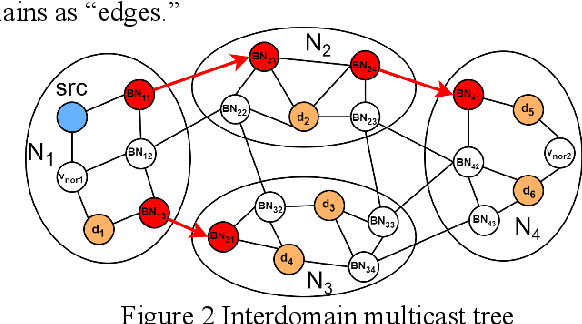

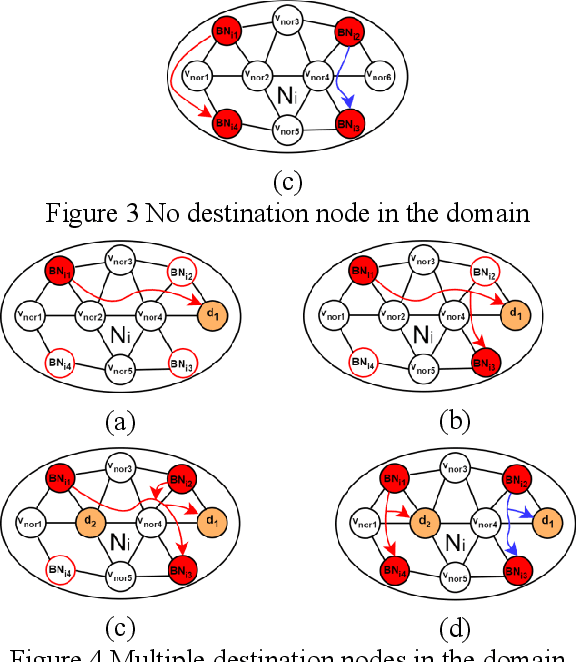

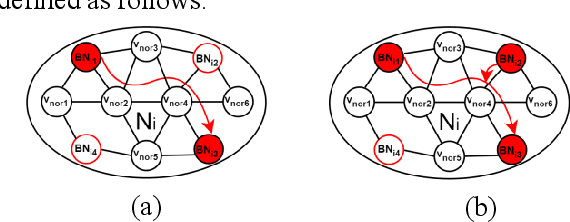

Abstract:The cross-domain multicast routing problem in a software-defined wireless network with multiple controllers is a classic NP-hard optimization problem. As the network size increases, designing and implementing cross-domain multicast routing paths in the network requires not only designing efficient solution algorithms to obtain the optimal cross-domain multicast tree but also ensuring the timely and flexible acquisition and maintenance of global network state information. However, existing solutions have a limited ability to sense the network traffic state, affecting the quality of service of multicast services. In addition, these methods have difficulty adapting to the highly dynamically changing network states and have slow convergence speeds. To this end, this paper aims to design and implement a multiagent deep reinforcement learning based cross-domain multicast routing method for SDWN with multicontroller domains. First, a multicontroller communication mechanism and a multicast group management module are designed to transfer and synchronize network information between different control domains of the SDWN, thus effectively managing the joining and classification of members in the cross-domain multicast group. Second, a theoretical analysis and proof show that the optimal cross-domain multicast tree includes an interdomain multicast tree and an intradomain multicast tree. An agent is established for each controller, and a cooperation mechanism between multiple agents is designed to effectively optimize cross-domain multicast routing and ensure consistency and validity in the representation of network state information for cross-domain multicast routing decisions. Third, a multiagent reinforcement learning-based method that combines online and offline training is designed to reduce the dependence on the real-time environment and increase the convergence speed of multiple agents.

DHRL-FNMR: An Intelligent Multicast Routing Approach Based on Deep Hierarchical Reinforcement Learning in SDN

May 30, 2023

Abstract:The optimal multicast tree problem in the Software-Defined Networking (SDN) multicast routing is an NP-hard combinatorial optimization problem. Although existing SDN intelligent solution methods, which are based on deep reinforcement learning, can dynamically adapt to complex network link state changes, these methods are plagued by problems such as redundant branches, large action space, and slow agent convergence. In this paper, an SDN intelligent multicast routing algorithm based on deep hierarchical reinforcement learning is proposed to circumvent the aforementioned problems. First, the multicast tree construction problem is decomposed into two sub-problems: the fork node selection problem and the construction of the optimal path from the fork node to the destination node. Second, based on the information characteristics of SDN global network perception, the multicast tree state matrix, link bandwidth matrix, link delay matrix, link packet loss rate matrix, and sub-goal matrix are designed as the state space of intrinsic and meta controllers. Then, in order to mitigate the excessive action space, our approach constructs different action spaces at the upper and lower levels. The meta-controller generates an action space using network nodes to select the fork node, and the intrinsic controller uses the adjacent edges of the current node as its action space, thus implementing four different action selection strategies in the construction of the multicast tree. To facilitate the intelligent agent in constructing the optimal multicast tree with greater speed, we developed alternative reward strategies that distinguish between single-step node actions and multi-step actions towards multiple destination nodes.

Intelligent multicast routing method based on multi-agent deep reinforcement learning in SDWN

May 12, 2023

Abstract:Multicast communication technology is widely applied in wireless environments with a high device density. Traditional wireless network architectures have difficulty flexibly obtaining and maintaining global network state information and cannot quickly respond to network state changes, thus affecting the throughput, delay, and other QoS requirements of existing multicasting solutions. Therefore, this paper proposes a new multicast routing method based on multiagent deep reinforcement learning (MADRL-MR) in a software-defined wireless networking (SDWN) environment. First, SDWN technology is adopted to flexibly configure the network and obtain network state information in the form of traffic matrices representing global network links information, such as link bandwidth, delay, and packet loss rate. Second, the multicast routing problem is divided into multiple subproblems, which are solved through multiagent cooperation. To enable each agent to accurately understand the current network state and the status of multicast tree construction, the state space of each agent is designed based on the traffic and multicast tree status matrices, and the set of AP nodes in the network is used as the action space. A novel single-hop action strategy is designed, along with a reward function based on the four states that may occur during tree construction: progress, invalid, loop, and termination. Finally, a decentralized training approach is combined with transfer learning to enable each agent to quickly adapt to dynamic network changes and accelerate convergence. Simulation experiments show that MADRL-MR outperforms existing algorithms in terms of throughput, delay, packet loss rate, etc., and can establish more intelligent multicast routes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge