Hiya Roy

Image inpainting using frequency domain priors

Dec 03, 2020

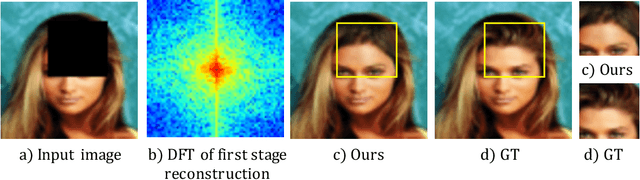

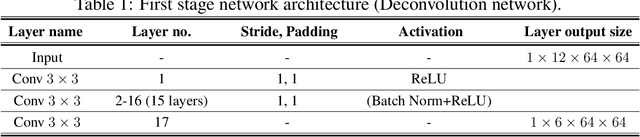

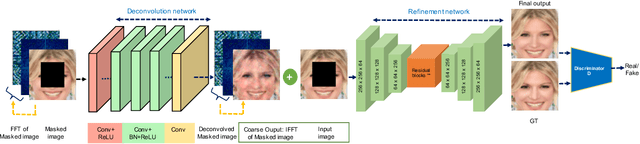

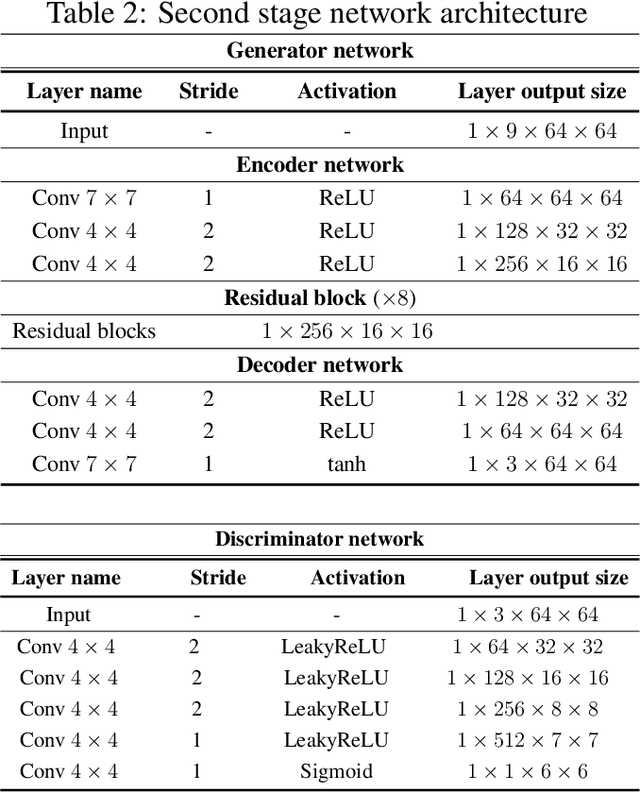

Abstract: In this paper, we present a novel image inpainting technique that uses frequency domain information. Prior works on image inpainting predict the missing pixels by training neural networks using only spatial domain information. However, these methods still struggle to reconstruct high-frequency details in complex real-world scenes, leading to color discrepancies, boundary artifacts, distorted patterns, and blurry textures. To alleviate these problems, we investigate whether better performance can be obtained by training the networks with frequency domain information (the Discrete Fourier Transform) in addition to spatial domain information. To this end, we propose a frequency-based deconvolution module that enables the network to learn the global context while selectively reconstructing the high-frequency components. We evaluate our proposed method on the publicly available CelebA, Paris Streetview, and DTD texture datasets, and show that our method outperforms current state-of-the-art image inpainting techniques both qualitatively and quantitatively.
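The abstract does not describe the internals of the frequency-based deconvolution module, so the sketch below only illustrates the underlying idea: using the 2-D Discrete Fourier Transform to separate an image into low- and high-frequency components, the latter being the details that spatial-only inpainting tends to blur. The function name `split_frequencies` and the ideal circular cutoff `radius` are illustrative assumptions, not the authors' design.

```python
import numpy as np


def split_frequencies(image: np.ndarray, radius: float):
    """Split a grayscale image into low- and high-frequency parts.

    Hypothetical helper: applies an ideal (hard) circular mask to the
    centred 2-D DFT spectrum and transforms each part back.
    """
    h, w = image.shape
    # Centre the zero-frequency (DC) bin at index (h // 2, w // 2).
    spectrum = np.fft.fftshift(np.fft.fft2(image))
    # Distance of every frequency bin from the DC bin.
    yy, xx = np.ogrid[:h, :w]
    dist = np.sqrt((yy - h // 2) ** 2 + (xx - w // 2) ** 2)
    low_mask = dist <= radius
    # Inverse-transform each band; keep the real part (input is real).
    low = np.fft.ifft2(np.fft.ifftshift(spectrum * low_mask)).real
    high = np.fft.ifft2(np.fft.ifftshift(spectrum * ~low_mask)).real
    return low, high


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = rng.random((64, 64))
    # Simulate a missing square region, as in an inpainting input.
    masked = image.copy()
    masked[24:40, 24:40] = 0.0
    low, high = split_frequencies(masked, radius=8)
    print("max reconstruction error:", np.abs(low + high - masked).max())
```

Because the DFT is linear, the two bands always sum back to the input; a learned module would instead decide which high-frequency components to reconstruct rather than using a fixed cutoff.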

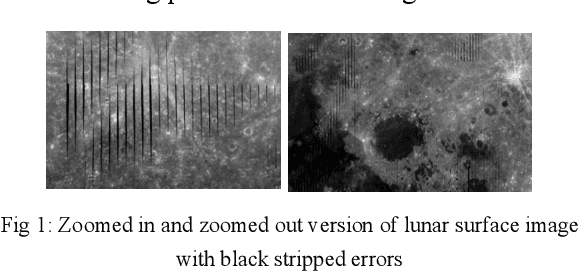

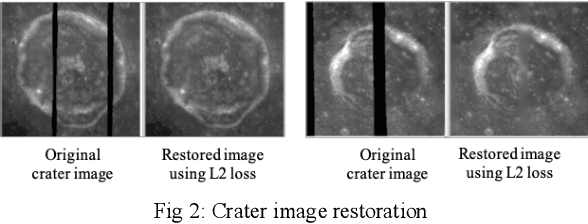

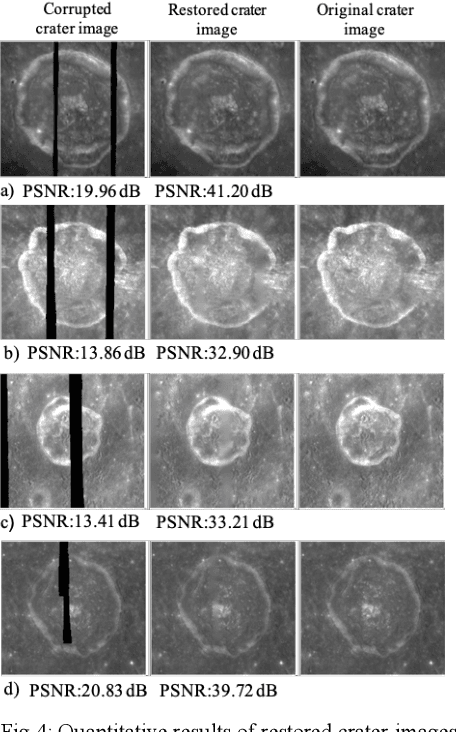

Lunar surface image restoration using U-net based deep neural networks

Apr 14, 2019

Abstract: Image restoration is a technique that reconstructs a feasible estimate of the original image from a noisy observation. In this paper, we present a U-Net based deep neural network model that restores missing pixels in lunar surface images in a context-aware fashion, a task commonly known as image inpainting. We use grayscale images of the lunar surface captured by the Multiband Imager (MI) onboard the Kaguya satellite for our experiments, and the results show that our method can reconstruct lunar surface images with good visual quality and improved PSNR values.
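The abstract reports results in terms of PSNR. The sketch below shows the standard definition, PSNR = 10 log10(MAX² / MSE), alongside a masking step and a naive mean-fill baseline against which an inpainting model could be compared. The U-Net itself is not reproduced; `apply_mask` and `mean_fill` are hypothetical helpers, and the arrays in the usage example are synthetic, not Kaguya MI data.

```python
import numpy as np


def psnr(reference: np.ndarray, estimate: np.ndarray, max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    diff = reference.astype(np.float64) - estimate.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))


def apply_mask(image: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Zero out missing pixels; mask is 1 where known and 0 where missing."""
    return image * mask


def mean_fill(image: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Naive baseline: replace missing pixels with the mean of known ones."""
    filled = image.astype(np.float64).copy()
    filled[mask == 0] = filled[mask == 1].mean()
    return filled


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gray = rng.random((64, 64))  # synthetic stand-in for a grayscale image
    mask = np.ones_like(gray)
    mask[20:44, 20:44] = 0  # a square hole of missing pixels
    corrupted = apply_mask(gray, mask)
    print("PSNR of corrupted input:", psnr(gray, corrupted))
    print("PSNR of mean-fill baseline:", psnr(gray, mean_fill(corrupted, mask)))
```

For 8-bit images, pass `max_value=255.0`; a learned inpainting model is expected to beat the mean-fill baseline on this metric.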

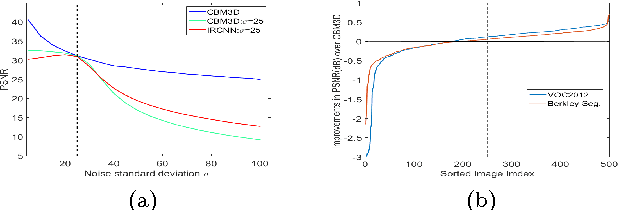

Can fully convolutional networks perform well for general image restoration problems?

Apr 13, 2017

Abstract: We present a fully convolutional network (FCN) based approach for color image restoration. FCNs have recently shown remarkable performance on high-level vision problems such as semantic segmentation. In this paper, we investigate whether FCN models can also perform well on low-level problems such as image restoration. We propose a fully convolutional model that learns a direct end-to-end mapping between corrupted input images and the desired clean output images. Our proposed method takes inspiration from domain transformation techniques but presents a data-driven, task-specific approach in which the filters for novel basis projection, task-dependent coefficient alteration, and image reconstruction are represented as convolutional networks. Experimental results show that our FCN model outperforms traditional sparse coding based methods and demonstrates competitive performance compared to state-of-the-art methods for image denoising. We further show that our proposed model can solve the difficult problem of blind image inpainting and can produce reconstructed images of impressive visual quality.
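The three stages named in the abstract (basis projection, coefficient alteration, reconstruction) mirror classical transform-domain denoising. The sketch below shows that fixed, hand-designed pipeline, which the FCN replaces with learned convolutional filters. The choice of an orthonormal DCT-II basis and hard thresholding, and the helper names `dct_matrix` and `transform_denoise`, are assumptions for illustration; the paper's learned filters are not shown.

```python
import numpy as np


def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II basis as an n x n matrix (rows are basis vectors)."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    basis = np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    basis[0] *= 1.0 / np.sqrt(2.0)  # scale the DC row for orthonormality
    return basis * np.sqrt(2.0 / n)


def transform_denoise(patch: np.ndarray, threshold: float) -> np.ndarray:
    """Denoise a square patch with a fixed DCT basis and hard thresholding."""
    d = dct_matrix(patch.shape[0])
    # 1. Basis projection: separable 2-D DCT of the patch.
    coeffs = d @ patch @ d.T
    # 2. Coefficient alteration: zero small coefficients, always keep DC.
    altered = np.where(np.abs(coeffs) >= threshold, coeffs, 0.0)
    altered[0, 0] = coeffs[0, 0]
    # 3. Reconstruction: inverse transform (d is orthonormal, so d^-1 = d^T).
    return d.T @ altered @ d


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    clean = np.full((8, 8), 0.5)
    noisy = clean + rng.normal(0.0, 0.1, size=clean.shape)
    denoised = transform_denoise(noisy, threshold=0.3)
    print("MSE before:", np.mean((noisy - clean) ** 2))
    print("MSE after: ", np.mean((denoised - clean) ** 2))
```

In the FCN formulation, each of these fixed linear maps and the hand-tuned threshold would instead be learned from data for the specific task.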
