György Turán

Iterative Graph Neural Network Enhancement via Frequent Subgraph Mining of Explanations

Mar 12, 2024

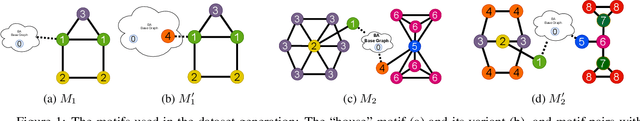

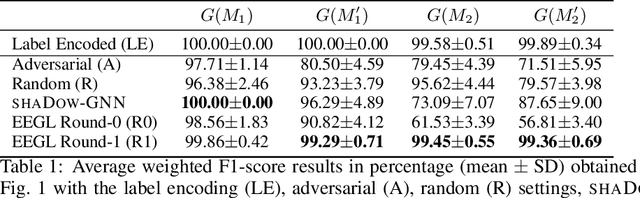

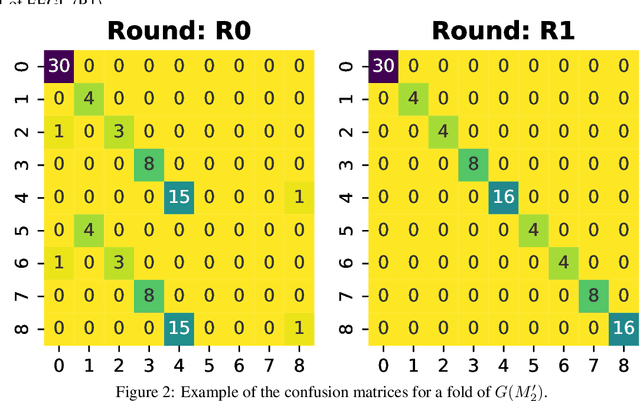

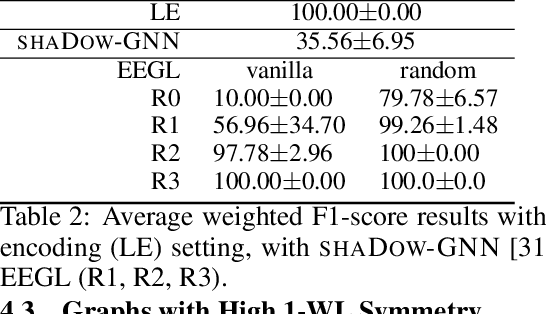

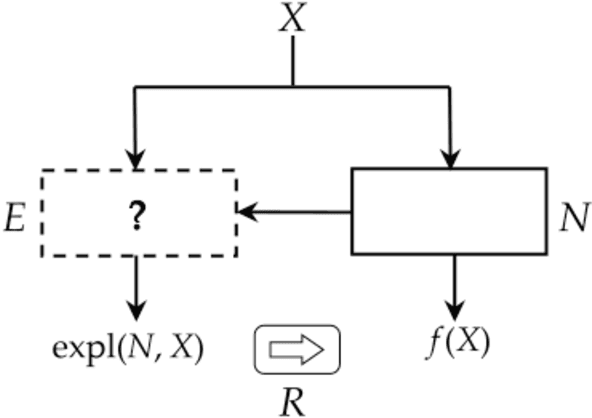

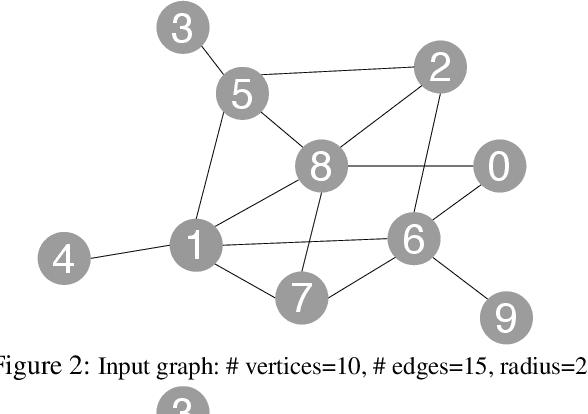

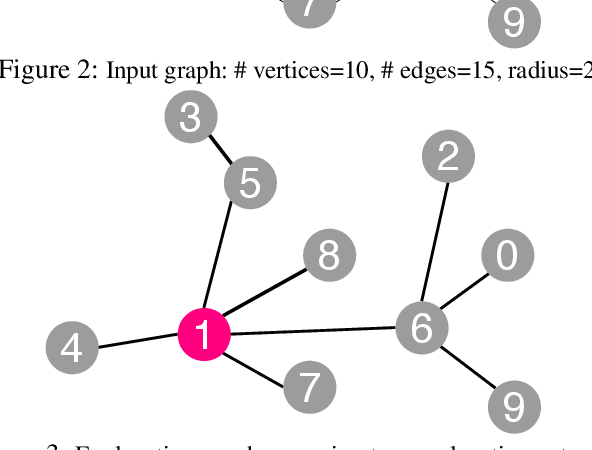

Abstract: We formulate an XAI-based model improvement approach for Graph Neural Networks (GNNs) for node classification, called Explanation Enhanced Graph Learning (EEGL). The goal is to improve the predictive performance of GNNs using explanations. EEGL is an iterative self-improving algorithm that starts with a learned "vanilla" GNN and repeatedly uses frequent subgraph mining to find relevant patterns in explanation subgraphs. These patterns are then filtered further to obtain application-dependent features corresponding to the presence of certain subgraphs in the node neighborhoods. Giving an application-dependent algorithm for such a subgraph-based extension of the Weisfeiler-Leman (1-WL) algorithm has previously been posed as an open problem. We present experimental evidence, with synthetic and real-world data, which shows that EEGL outperforms related approaches in predictive performance and that it has a node-distinguishing power beyond that of vanilla GNNs. We also analyze EEGL's training dynamics.
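
The point about node-distinguishing power beyond 1-WL can be illustrated with a small sketch. It assumes plain 1-WL color refinement and one hand-picked subgraph feature (triangle membership). It does not use patterns mined from explanation subgraphs, so it is not EEGL itself. The helper names (`build`, `wl_colors`, `in_triangle`, `same_histogram`) are hypothetical. A 6-cycle and two disjoint triangles are both 2-regular, so 1-WL started from uniform colors cannot tell them apart. Seeding the initial colors with a "node lies in a triangle" bit separates them.

```python
from collections import Counter
from itertools import combinations


def build(edges):
    """Build an undirected adjacency map {node: set(neighbors)}."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def wl_colors(adj, init, rounds=3):
    """1-WL color refinement: a node's new color encodes its old color
    plus the multiset of its neighbors' colors."""
    colors = dict(init)
    for _ in range(rounds):
        sigs = {v: (colors[v], tuple(sorted(colors[u] for u in adj[v]))) for v in adj}
        # Compress signatures to small integers (shared palette for the whole graph).
        palette = {s: i for i, s in enumerate(sorted(set(sigs.values())))}
        colors = {v: palette[sigs[v]] for v in adj}
    return colors


def in_triangle(adj, v):
    """Hypothetical subgraph feature: True if v lies on a triangle."""
    return any(b in adj[a] for a, b in combinations(adj[v], 2))


# Refine on the disjoint union so both graphs share one color palette.
cycle6 = [(i, (i + 1) % 6) for i in range(6)]               # nodes 0..5
two_tri = [(6, 7), (7, 8), (8, 6), (9, 10), (10, 11), (11, 9)]  # nodes 6..11
union = build(cycle6 + two_tri)
nodes_a, nodes_b = range(0, 6), range(6, 12)


def same_histogram(colors):
    """True if the two component graphs get identical color histograms."""
    return Counter(colors[v] for v in nodes_a) == Counter(colors[v] for v in nodes_b)


plain = wl_colors(union, {v: 0 for v in union})
feat = wl_colors(union, {v: int(in_triangle(union, v)) for v in union})
print(same_histogram(plain))  # True: plain 1-WL cannot separate the graphs
print(same_histogram(feat))   # False: the triangle feature separates them
```

The triangle bit stands in for the kind of subgraph-presence feature the abstract describes. In EEGL, such features come from frequent subgraph mining over explanations instead of being chosen by hand.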

Explanation from Specification

Dec 13, 2020

Abstract: Explainable components in XAI algorithms often come from a familiar set of models, such as linear models or decision trees. We formulate an approach where the type of explanation produced is guided by a specification. Specifications are elicited from the user, possibly through interaction with the user and with contributions from other areas. Areas where a specification could be obtained include forensic, medical, and scientific applications. Providing a menu of possible types of specifications in an area is an exploratory knowledge representation and reasoning task for the algorithm designer, aimed at understanding the possibilities and limitations of efficiently computable modes of explanation. Two examples are discussed: explanations for Bayesian networks using the theory of argumentation, and explanations for graph neural networks. The latter case illustrates the possibility of having a representation formalism available to the user for specifying the type of explanation requested, for example, a chemical query language for classifying molecules. The approach is motivated by a theory of explanation in the philosophy of science, and it is related to current questions in the philosophy of science on the role of machine learning.

Measuring an Artificial Intelligence System's Performance on a Verbal IQ Test For Young Children

Sep 11, 2015

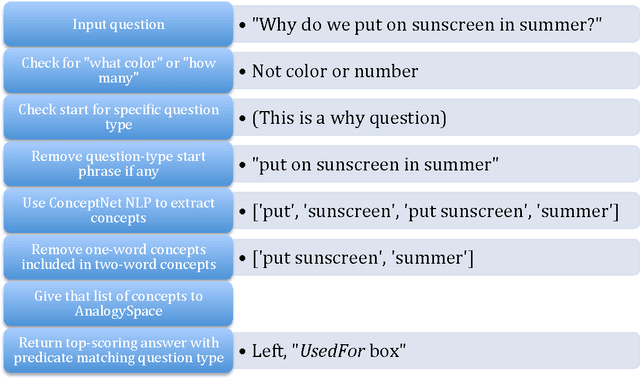

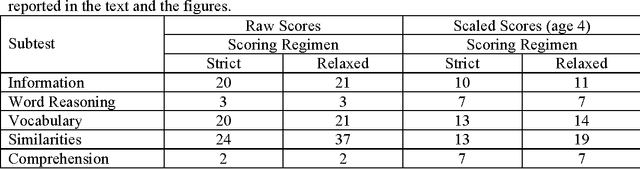

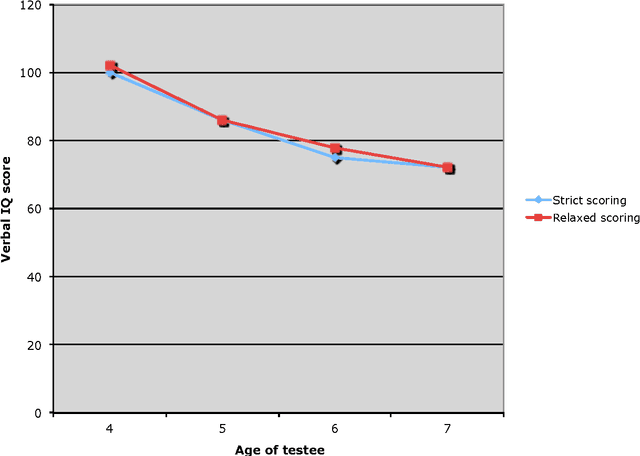

Abstract: We administered the Verbal IQ (VIQ) part of the Wechsler Preschool and Primary Scale of Intelligence (WPPSI-III) to the ConceptNet 4 AI system. The test questions (e.g., "Why do we shake hands?") were translated into ConceptNet 4 inputs using a combination of the simple natural language processing tools that come with ConceptNet and short Python programs that we wrote. The question answering used a version of ConceptNet based on spectral methods. The ConceptNet system scored a WPPSI-III VIQ that is average for a four-year-old child, but below average for 5- to 7-year-olds. Large variations among subtests indicate potential areas for improvement. In particular, results were strongest for the Vocabulary and Similarities subtests, intermediate for the Information subtest, and lowest for the Comprehension and Word Reasoning subtests. Comprehension is the subtest most strongly associated with common sense. The large variations among subtests and ordinary common sense strongly suggest that the WPPSI-III VIQ results do not show that "ConceptNet has the verbal abilities a four-year-old." Rather, children's IQ tests offer one objective metric for the evaluation and comparison of AI systems. This work also continues previous research on Psychometric AI.
