Gopinath Ganapathy

RAD-PHI2: Instruction Tuning PHI-2 for Radiology

Mar 12, 2024

Abstract:Small Language Models (SLMs) have shown remarkable performance in general domain language understanding, reasoning and coding tasks, but their capabilities in the medical domain, particularly concerning radiology text, is less explored. In this study, we investigate the application of SLMs for general radiology knowledge specifically question answering related to understanding of symptoms, radiological appearances of findings, differential diagnosis, assessing prognosis, and suggesting treatments w.r.t diseases pertaining to different organ systems. Additionally, we explore the utility of SLMs in handling text-related tasks with respect to radiology reports within AI-driven radiology workflows. We fine-tune Phi-2, a SLM with 2.7 billion parameters using high-quality educational content from Radiopaedia, a collaborative online radiology resource. The resulting language model, RadPhi-2-Base, exhibits the ability to address general radiology queries across various systems (e.g., chest, cardiac). Furthermore, we investigate Phi-2 for instruction tuning, enabling it to perform specific tasks. By fine-tuning Phi-2 on both general domain tasks and radiology-specific tasks related to chest X-ray reports, we create Rad-Phi2. Our empirical results reveal that Rad-Phi2 Base and Rad-Phi2 perform comparably or even outperform larger models such as Mistral-7B-Instruct-v0.2 and GPT-4 providing concise and precise answers. In summary, our work demonstrates the feasibility and effectiveness of utilizing SLMs in radiology workflows both for knowledge related queries as well as for performing specific tasks related to radiology reports thereby opening up new avenues for enhancing the quality and efficiency of radiology practice.

Retrieval Augmented Chest X-Ray Report Generation using OpenAI GPT models

May 05, 2023

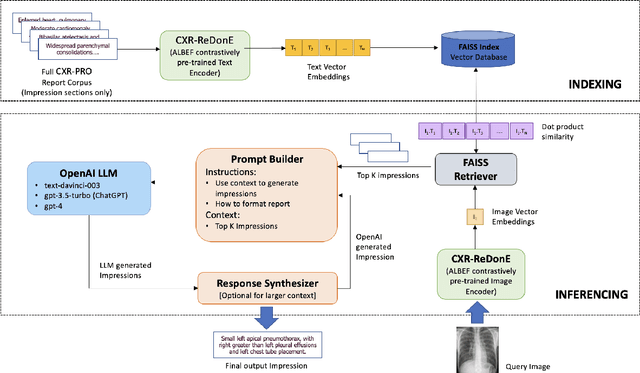

Abstract:We propose Retrieval Augmented Generation (RAG) as an approach for automated radiology report writing that leverages multimodally aligned embeddings from a contrastively pretrained vision language model for retrieval of relevant candidate radiology text for an input radiology image and a general domain generative model like OpenAI text-davinci-003, gpt-3.5-turbo and gpt-4 for report generation using the relevant radiology text retrieved. This approach keeps hallucinated generations under check and provides capabilities to generate report content in the format we desire leveraging the instruction following capabilities of these generative models. Our approach achieves better clinical metrics with a BERTScore of 0.2865 ({\Delta}+ 25.88%) and Semb score of 0.4026 ({\Delta}+ 6.31%). Our approach can be broadly relevant for different clinical settings as it allows to augment the automated radiology report generation process with content relevant for that setting while also having the ability to inject user intents and requirements in the prompts as part of the report generation process to modulate the content and format of the generated reports as applicable for that clinical setting.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge