Glen B. Mbeng

Quantum Approximate Optimization Algorithm applied to the binary perceptron

Dec 19, 2021

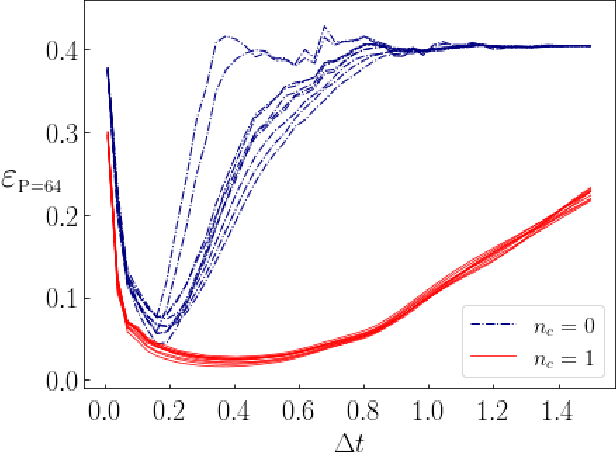

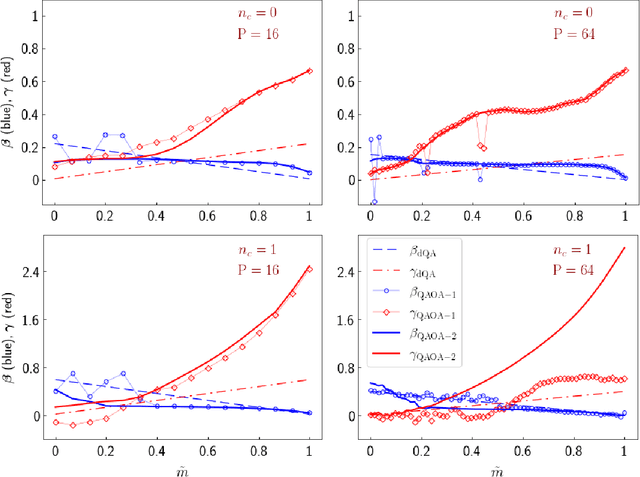

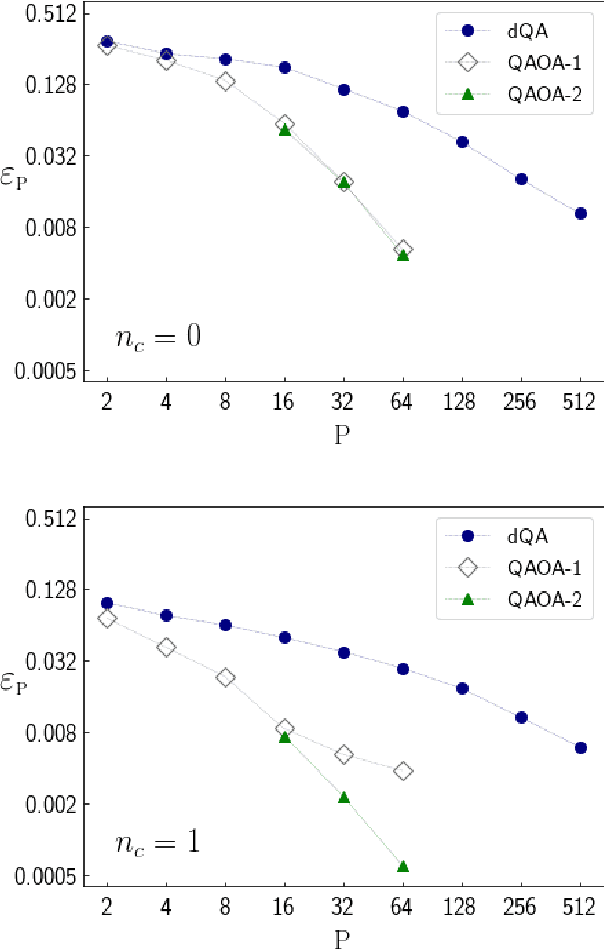

Abstract: We apply digitized Quantum Annealing (QA) and the Quantum Approximate Optimization Algorithm (QAOA) to a paradigmatic task of supervised learning in artificial neural networks: the optimization of synaptic weights for the binary perceptron. At variance with the usual QAOA applications to MaxCut, or to ground-state preparation in quantum spin chains, the classical Hamiltonian is characterized by highly non-local multi-spin interactions. Yet, we provide evidence for the existence of optimal smooth solutions for the QAOA parameters, which are transferable among typical instances of the same problem, and we show numerically that QAOA outperforms traditional QA. We also investigate the role of the geometry of the QAOA optimization landscape in this problem, showing that the gap-closing transition that is detrimental to QA also degrades the performance of our implementation of QAOA.
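To make the setup concrete, here is a minimal state-vector sketch of a QAOA-style circuit for a tiny binary perceptron. It is an illustration under stated assumptions, not the paper's implementation: the cost used here is simply the number of misclassified patterns (the abstract does not specify the cost function), the mixer is the standard transverse-field term, and the names `perceptron_cost`, `apply_mixer`, `qaoa_energy`, and the linear schedule are all hypothetical choices made for this example.

```python
import itertools
import numpy as np

def perceptron_cost(n_spins, patterns):
    """Diagonal cost (assumed form): number of patterns with negative overlap.

    patterns has shape (M, N) with entries +/-1; labels are absorbed into the patterns.
    """
    configs = np.array(list(itertools.product([1, -1], repeat=n_spins)))  # (2^N, N)
    overlaps = configs @ patterns.T                                       # (2^N, M)
    return (overlaps < 0).sum(axis=1).astype(float)

def apply_mixer(state, beta, n_spins):
    """Apply exp(-i * beta * sum_j X_j), one qubit at a time."""
    c, s = np.cos(beta), -1j * np.sin(beta)
    psi = state.reshape([2] * n_spins)
    for q in range(n_spins):
        psi = np.moveaxis(psi, q, 0)
        # exp(-i beta X) = cos(beta) I - i sin(beta) X on qubit q
        psi = np.stack([c * psi[0] + s * psi[1], s * psi[0] + c * psi[1]])
        psi = np.moveaxis(psi, 0, q)
    return psi.reshape(-1)

def qaoa_energy(gammas, betas, cost, n_spins):
    """Expectation value of the cost after P alternating cost/mixer layers."""
    state = np.full(2 ** n_spins, 2 ** (-n_spins / 2), dtype=complex)  # uniform superposition
    for gamma, beta in zip(gammas, betas):
        state = np.exp(-1j * gamma * cost) * state   # cost layer is diagonal
        state = apply_mixer(state, beta, n_spins)
    return float(np.real(np.vdot(state, cost * state)))

# Toy instance: N = 5 binary weights, M = 3 random patterns (odd N avoids zero overlaps).
n_spins = 5
rng = np.random.default_rng(0)
patterns = rng.choice([-1, 1], size=(3, n_spins))
cost = perceptron_cost(n_spins, patterns)

# Illustrative linear (digitized-QA-like) schedule with P = 4 steps; values are arbitrary.
P, dt = 4, 0.5
s = np.arange(1, P + 1) / (P + 1)
gammas, betas = s * dt, (1 - s) * dt
print("energy with linear schedule:", qaoa_energy(gammas, betas, cost, n_spins))
```

In a QAOA run, `gammas` and `betas` would be treated as variational parameters and passed to a classical optimizer that minimizes `qaoa_energy`; the linear schedule above only serves as a fixed, digitized-QA-like starting point.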
