Gengyuan Cai

An Enhanced Hierarchical Planning Framework for Multi-Robot Autonomous Exploration

Oct 25, 2024

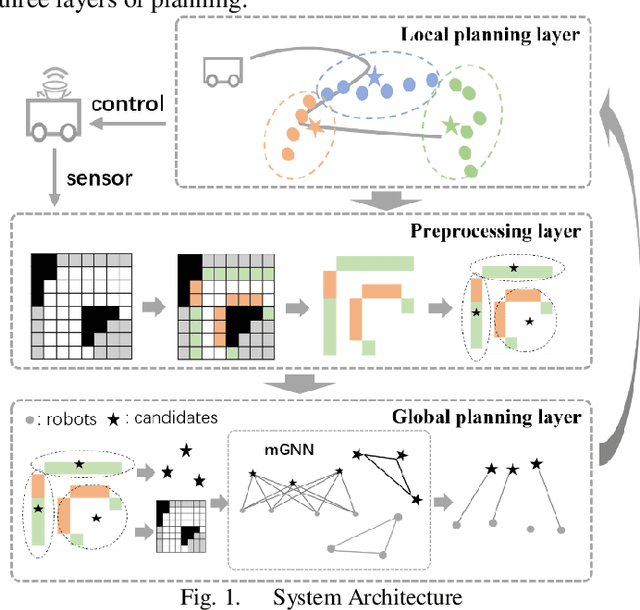

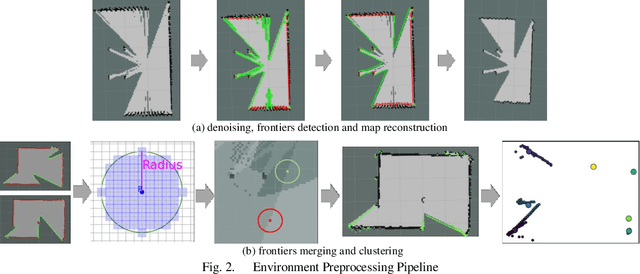

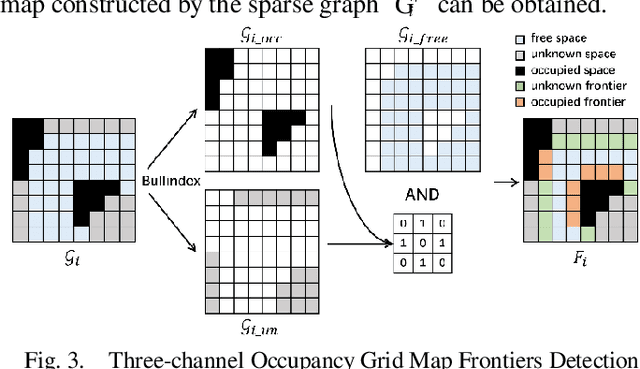

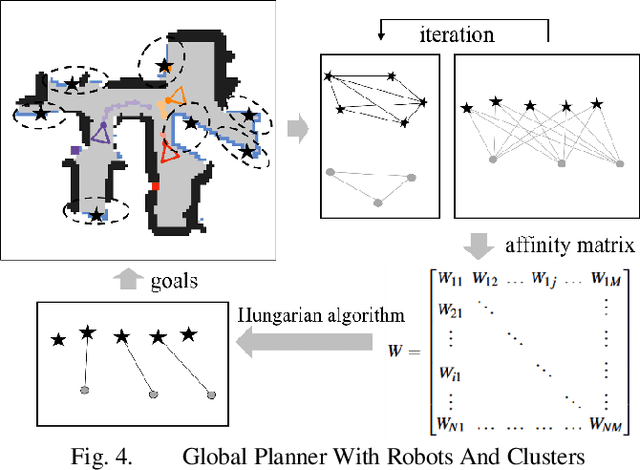

Abstract: Autonomous exploration of environments by multi-robot systems is a critical task with broad applications in rescue missions, planetary and field exploration, and beyond. Current approaches rely on either greedy frontier selection or end-to-end deep reinforcement learning (DRL). Greedy frontier selection is short-sighted and overlooks long-term consequences, while end-to-end DRL struggles to converge because of its high-dimensional learning space. To address these challenges, this paper introduces an integration strategy that combines the low-dimensional action space of frontier-based methods with the far-sightedness and optimality of DRL-based approaches. We propose a three-tiered planning framework. First, it identifies frontiers in free space, creating a sparse map representation that lightens the data transmission burden and reduces the dimensionality of the DRL action space. Second, a multi-graph neural network (mGNN) incorporates the states of potential targets and robots and uses policy-based reinforcement learning to compute affinities, replacing traditional heuristic utility values. Third, local route planning is performed through subsequence search, which avoids exhaustive sequence traversal. Extensive validation across diverse scenarios and comprehensive simulation results demonstrate the effectiveness of the proposed method. Compared to baseline approaches, our framework completes environmental exploration in fewer time steps and reduces data transmission by over 30%.
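To make the first tier concrete, the following is a minimal sketch of frontier identification on an occupancy grid, using the common definition of a frontier as a free cell adjacent to an unknown cell. The cell encoding (`FREE`, `OCCUPIED`, `UNKNOWN`), the 4-connected neighbourhood, and the function name `find_frontiers` are assumptions made for illustration; the abstract does not specify the paper's detection procedure, which may differ.

```python
# Hypothetical cell encoding for illustration; not taken from the paper.
FREE, OCCUPIED, UNKNOWN = 0, 1, -1


def find_frontiers(grid):
    """Return (row, col) of every free cell with a 4-connected unknown neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue  # only free cells can be frontiers
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                inside = 0 <= nr < rows and 0 <= nc < cols
                if inside and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbour is enough
    return frontiers


grid = [
    [FREE, FREE,     UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE,     FREE],
]
frontiers = find_frontiers(grid)
print(frontiers)  # [(0, 1), (2, 2)]
```

In this toy map, only two of the nine cells are frontiers. A short list of candidates like this, rather than the full grid, is the kind of sparse representation that could reduce both the data exchanged between robots and the number of actions a learned policy has to choose among.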
