Farhana Faruqe

Competency Model Approach to AI Literacy: Research-based Path from Initial Framework to Model

Aug 12, 2021

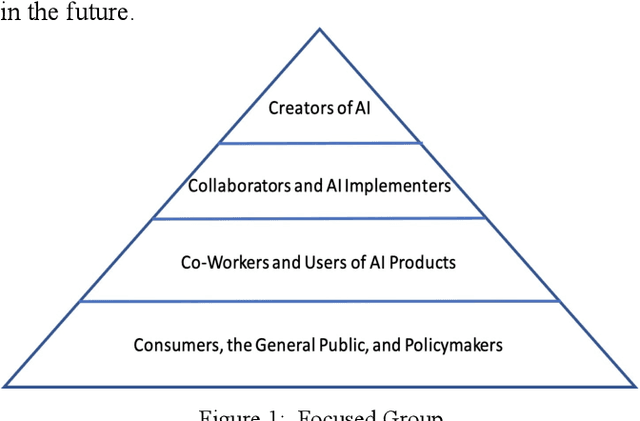

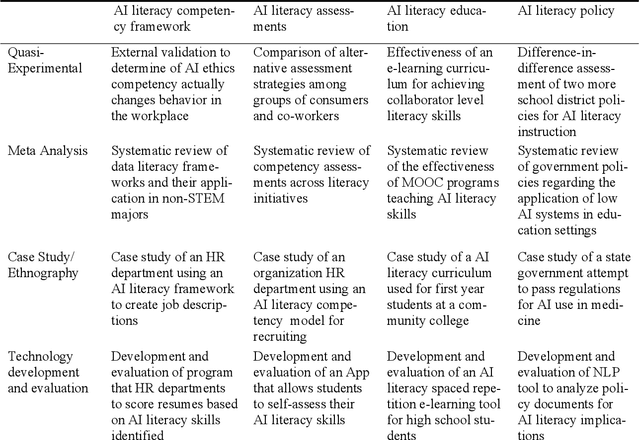

Abstract: Recent developments in Artificial Intelligence (AI) technologies challenge educators and educational institutions to respond with curriculum and resources that prepare students of all ages with the foundational knowledge and skills for success in the AI workplace. Research on AI Literacy could lead to an effective and practical platform for developing these skills. We propose and advocate for a pathway for developing AI Literacy as a pragmatic and useful tool for AI education. Such a discipline requires moving beyond a conceptual framework to a multi-level competency model with associated competency assessments. This approach to AI Literacy could guide future development of instructional content as we prepare a range of groups (i.e., consumers, co-workers, collaborators, and creators). We propose here a research matrix as an initial step in the development of a roadmap for AI Literacy research, which requires a systematic and coordinated effort, with the support of publication outlets and research funding, to expand the areas of competency and assessments.

Monitoring Trust in Human-Machine Interactions for Public Sector Applications

Oct 16, 2020

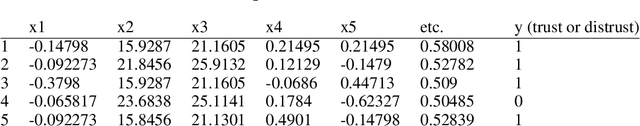

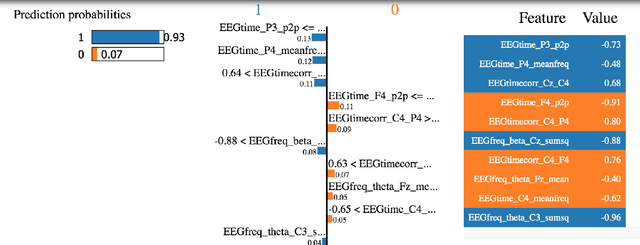

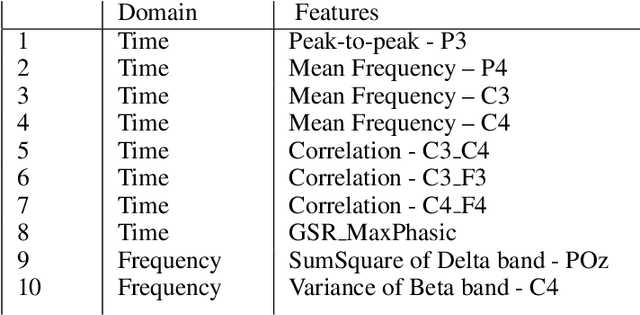

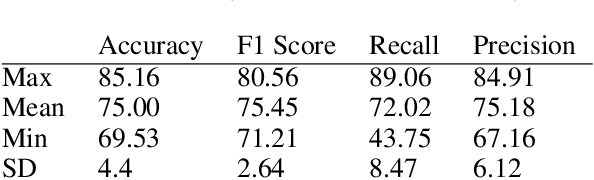

Abstract: The work reported here addresses the capacity of psychophysiological sensors and measures using Electroencephalogram (EEG) and Galvanic Skin Response (GSR) to detect levels of trust for humans using AI-supported Human-Machine Interaction (HMI). Improvements to the analysis of EEG and GSR data may create models that perform as well as, or better than, traditional tools. A challenge in analyzing the EEG and GSR data is the large amount of training data required due to the large number of variables in the measurements. Researchers have routinely used standard machine-learning classifiers like artificial neural networks (ANN), support vector machines (SVM), and K-nearest neighbors (KNN). Traditionally, these have provided few insights into which features of the EEG and GSR data facilitate the most and least accurate predictions - thus making it harder to improve the HMI and human-machine trust relationship. A key ingredient in applying trust-sensor research results to practical situations and monitoring trust in work environments is understanding which key features contribute to trust and then reducing the amount of data needed for practical applications. We used the Local Interpretable Model-agnostic Explanations (LIME) model as a process to reduce the volume of data required to monitor and enhance trust in HMI systems - a technology that could be valuable for governmental and public sector applications. Explainable AI can make HMI systems transparent and promote trust. From customer service in government agencies and community-level non-profit public service organizations to national military and cybersecurity institutions, many public sector organizations are increasingly concerned with having effective and ethical HMI with services that are trustworthy, unbiased, and free of unintended negative consequences.
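
The idea of using local explanations to find which features matter can be illustrated with a small sketch. This is not the authors' pipeline and does not use the `lime` package: it is a simplified LIME-style procedure (perturb an instance, weight the samples by proximity, fit a weighted linear surrogate) written with the Python standard library only. The classifier `trust_model`, its three features (`eeg_alpha`, `gsr_mean`, and an irrelevant third input), and every parameter value are hypothetical stand-ins chosen for illustration.

```python
import math
import random


def trust_model(x):
    """Hypothetical black-box classifier: P(trust) from three made-up features."""
    eeg_alpha, gsr_mean, _irrelevant = x
    return 1.0 / (1.0 + math.exp(-(3.0 * eeg_alpha - 0.5 * gsr_mean)))


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def explain(model, instance, n_samples=500, scale=0.3, kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear surrogate around `instance`.

    Returns one coefficient per feature (the intercept is dropped).
    """
    rng = random.Random(seed)
    d = len(instance)
    # Accumulate the weighted normal equations (X^T W X) beta = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        z = [v + rng.gauss(0.0, scale) for v in instance]  # perturbed sample
        dist2 = sum((zi - vi) ** 2 for zi, vi in zip(z, instance))
        w = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + z  # leading 1.0 is the intercept column
        y = model(z)
        for i in range(d + 1):
            xtwy[i] += w * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += w * row[i] * row[j]
    return solve(xtwx, xtwy)[1:]


coefs = explain(trust_model, [0.0, 0.0, 0.0])
# Rank features by the magnitude of their local coefficient.
ranking = sorted(range(len(coefs)), key=lambda i: abs(coefs[i]), reverse=True)
print(ranking, coefs)
```

Features whose local coefficients stay near zero across many explained instances are candidates for dropping, which is one way such explanations could reduce the volume of sensor data to collect. The actual LIME method adds steps omitted here, such as regularization and feature selection in the surrogate.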
