Enrico Panai

An Audit Framework for Adopting AI-Nudging on Children

Apr 25, 2023

Abstract: This is an audit framework for AI-nudging. Unlike the static form of nudging usually discussed in the literature, we focus here on a type of nudging that uses large amounts of data to provide personalized, dynamic feedback and interfaces. We call this AI-nudging (Lanzing, 2019, p. 549; Yeung, 2017). The ultimate goal of the audit outlined here is to ensure that an AI system that uses nudges will maintain a level of moral inertia and neutrality by complying with the recommendations, requirements, or suggestions of the audit (in other words, the criteria of the audit). In the case of unintended negative consequences, the audit suggests risk mitigation mechanisms that can be put in place. In the case of unintended positive consequences, it suggests some reinforcement mechanisms. Sponsored by the IBM-Notre Dame Tech Ethics Lab.

The Ghost in the Machine has an American accent: value conflict in GPT-3

Mar 15, 2022

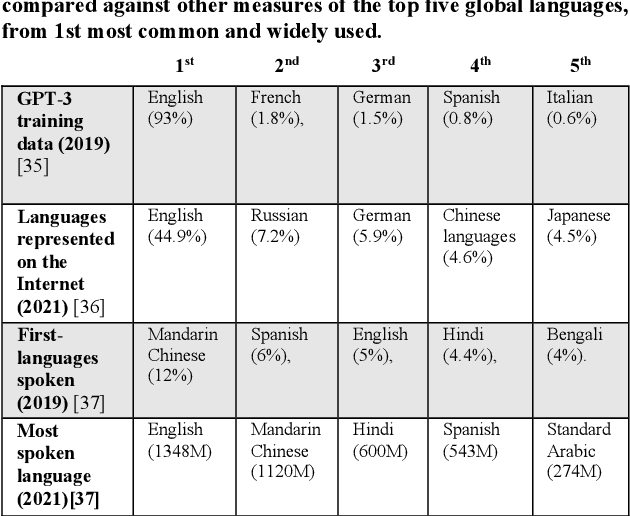

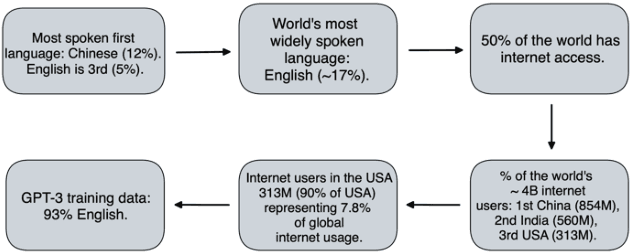

Abstract: The alignment problem in the context of large language models must consider the plurality of human values in our world. Whilst there are many resonant and overlapping values amongst the world's cultures, there are also many conflicting, yet equally valid, values. It is important to observe which cultural values a model exhibits, particularly when there is a value conflict between input prompts and generated outputs. We discuss how the co-creation of language and cultural value impacts large language models (LLMs). We explore the constitution of the training data for GPT-3 and compare that to the world's language and internet access demographics, as well as to reported statistical profiles of dominant values in some nation-states. We stress-tested GPT-3 with a range of value-rich texts representing several languages and nations, including some with values orthogonal to dominant US public opinion as reported by the World Values Survey. We observed when values embedded in the input text were mutated in the generated outputs and noted when these conflicting values were more aligned with reported dominant US values. Our discussion of these results uses a moral value pluralism (MVP) lens to better understand these value mutations. Finally, we provide recommendations for how our work may contribute to other current work in the field.
