Ege Zorlutuna

Going Forward-Forward in Distributed Deep Learning

Mar 30, 2024

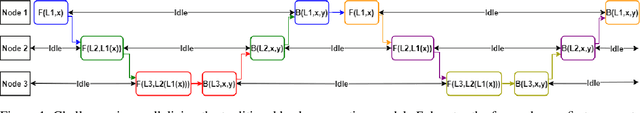

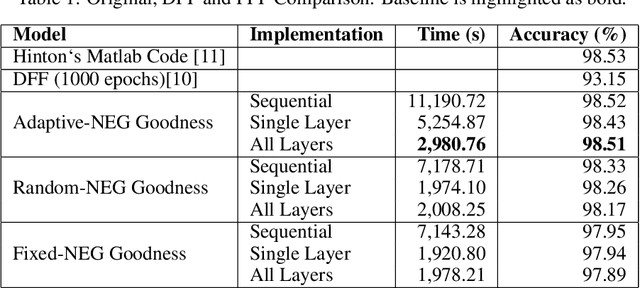

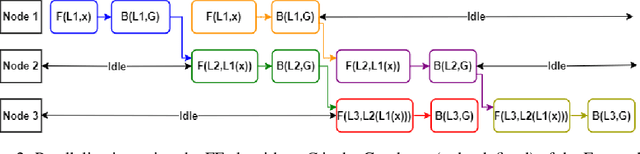

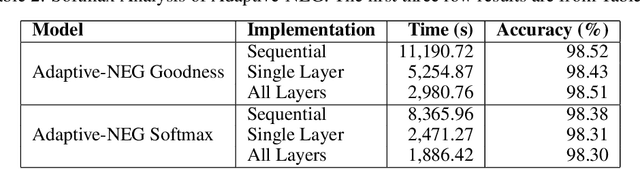

Abstract: This paper introduces a new approach to distributed deep learning that uses Geoffrey Hinton's Forward-Forward (FF) algorithm to train neural networks in distributed computing environments. Whereas backpropagation relies on a forward pass followed by a backward pass, the FF algorithm uses two forward passes and no backward pass. This is arguably closer to how the human brain processes information, and may offer a more efficient and biologically plausible approach to neural network training. Our research explores the implementation of the FF algorithm in distributed settings, focusing on its ability to support parallel training of neural network layers. This parallelism aims to reduce training time and resource consumption, addressing some of the inherent challenges of current distributed deep learning systems. By analyzing the effectiveness of the FF algorithm in distributed computing, we aim to demonstrate its potential to improve training efficiency in distributed deep learning systems and to change how neural networks are trained in distributed environments.
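To make the two-forward-pass idea concrete, the following is a minimal NumPy sketch of layer-local Forward-Forward training. It is not the paper's implementation: the class name `FFLayer`, the function `demo`, the threshold `theta`, the learning rate, the layer sizes, and the toy positive/negative data are all assumptions chosen for illustration. Each layer computes a "goodness" (the sum of squared activations) and is updated from its own local loss, so no gradient flows between layers. That locality is what would, in principle, let layers be placed on different workers and trained in parallel.

```python
import numpy as np


class FFLayer:
    """One layer trained with a local Forward-Forward objective (illustrative)."""

    def __init__(self, n_in, n_out, theta=2.0, lr=0.1, seed=0):
        rng = np.random.default_rng(seed)
        self.W = rng.normal(0.0, 1.0 / np.sqrt(n_in), size=(n_in, n_out))
        self.b = np.zeros(n_out)
        self.theta = theta  # goodness threshold (assumed value)
        self.lr = lr        # learning rate (assumed value)

    @staticmethod
    def _normalize(x):
        # Length-normalize the input so only its direction is passed on.
        return x / (np.linalg.norm(x, axis=1, keepdims=True) + 1e-8)

    def forward(self, x):
        return np.maximum(0.0, self._normalize(x) @ self.W + self.b)

    def goodness(self, x):
        # Goodness = sum of squared activations per sample.
        return np.sum(self.forward(x) ** 2, axis=1)

    def train_step(self, x_pos, x_neg):
        grad_W = np.zeros_like(self.W)
        grad_b = np.zeros_like(self.b)
        # Two forward passes: positive data (sign=+1) and negative data (sign=-1).
        for x, sign in ((x_pos, 1.0), (x_neg, -1.0)):
            xn = self._normalize(x)
            h = np.maximum(0.0, xn @ self.W + self.b)
            g = np.sum(h ** 2, axis=1)
            # Local loss: softplus(-sign * (g - theta)); derivative w.r.t. g.
            z = np.clip(sign * (g - self.theta), -50.0, 50.0)
            dL_dg = -sign / (1.0 + np.exp(z))
            # dg/dz_pre = 2h (h is already zero where the ReLU is inactive).
            dL_dz = dL_dg[:, None] * 2.0 * h
            grad_W += xn.T @ dL_dz / len(x)
            grad_b += dL_dz.mean(axis=0)
        self.W -= self.lr * grad_W
        self.b -= self.lr * grad_b


def demo(steps=200, seed=0):
    """Train two stacked layers on toy data; return mean goodness (pos, neg)."""
    rng = np.random.default_rng(seed)
    proto_pos = np.array([1.0] * 5 + [0.0] * 5)  # toy "positive" pattern
    proto_neg = np.array([0.0] * 5 + [1.0] * 5)  # toy "negative" pattern
    layers = [FFLayer(10, 16, seed=1), FFLayer(16, 16, seed=2)]
    for _ in range(steps):
        x_pos = proto_pos + 0.1 * rng.normal(size=(32, 10))
        x_neg = proto_neg + 0.1 * rng.normal(size=(32, 10))
        for layer in layers:
            # Each layer updates from its own loss; only activations move forward.
            layer.train_step(x_pos, x_neg)
            x_pos, x_neg = layer.forward(x_pos), layer.forward(x_neg)
    test_pos = proto_pos + 0.1 * rng.normal(size=(64, 10))
    test_neg = proto_neg + 0.1 * rng.normal(size=(64, 10))
    return layers[0].goodness(test_pos).mean(), layers[0].goodness(test_neg).mean()


if __name__ == "__main__":
    good_pos, good_neg = demo()
    print(f"mean goodness: positive={good_pos:.3f}, negative={good_neg:.3f}")
```

In this sketch the layers are updated one after another inside a single process. Because a layer's update needs only its own inputs and weights, the inner loop could be split across workers, with each worker receiving activations from the previous layer; how that is scheduled is a design choice this example does not address.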
