Dong Hyeon Mok

Reasoning-Driven Design of Single Atom Catalysts via a Multi-Agent Large Language Model Framework

Feb 25, 2026

Abstract: Large language models (LLMs) are increasingly applied beyond natural language processing, demonstrating strong capabilities in complex scientific tasks that traditionally require human expertise. This progress has extended into materials discovery, where LLMs introduce a new paradigm by leveraging reasoning and in-context learning, capabilities absent from conventional machine learning approaches. Here, we present a Multi-Agent-based Electrocatalyst Search Through Reasoning and Optimization (MAESTRO) framework in which multiple LLMs with specialized roles collaboratively discover high-performance single atom catalysts for the oxygen reduction reaction. Within an autonomous design loop, agents iteratively reason, propose modifications, reflect on results, and accumulate design history. Through in-context learning enabled by this iterative process, MAESTRO identified design principles not explicitly encoded in the LLMs' background knowledge and successfully discovered catalysts that break conventional scaling relations between reaction intermediates. These results highlight the potential of multi-agent LLM frameworks as a powerful strategy to generate chemical insight and discover promising catalysts.

Generative Language Model for Catalyst Discovery

Jul 19, 2024

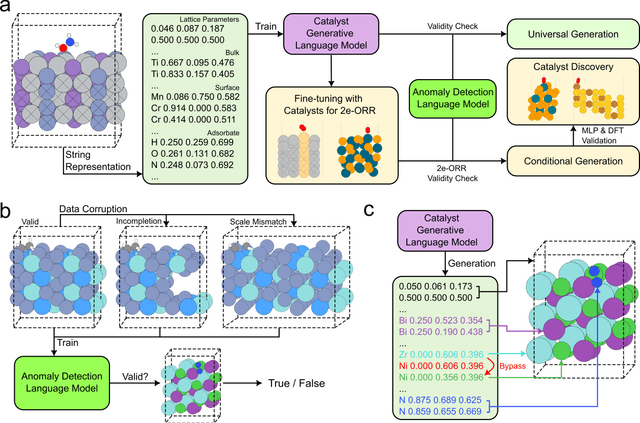

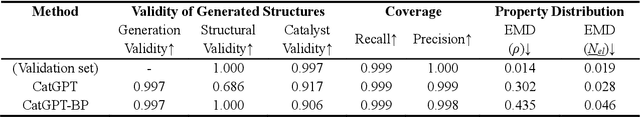

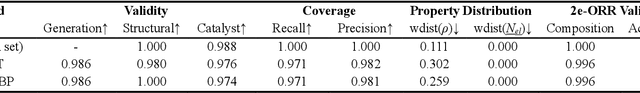

Abstract: Discovery of novel and promising materials is a critical challenge in the field of chemistry and materials science, traditionally approached through methodologies ranging from trial-and-error to machine learning-driven inverse design. Recent studies suggest that transformer-based language models can be utilized as material generative models to expand chemical space and explore materials with desired properties. In this work, we introduce the Catalyst Generative Pretrained Transformer (CatGPT), trained to generate string representations of inorganic catalyst structures from a vast chemical space. CatGPT not only demonstrates high performance in generating valid and accurate catalyst structures but also serves as a foundation model for generating desired types of catalysts by fine-tuning with sparse and specified datasets. As an example, we fine-tuned the pretrained CatGPT using a binary alloy catalyst dataset designed for screening two-electron oxygen reduction reaction (2e-ORR) catalysts and generated catalyst structures specialized for 2e-ORR. Our work demonstrates the potential of language models as generative tools for catalyst discovery.
