Donald E Brown

Improving mathematical questioning in teacher training

Dec 06, 2021

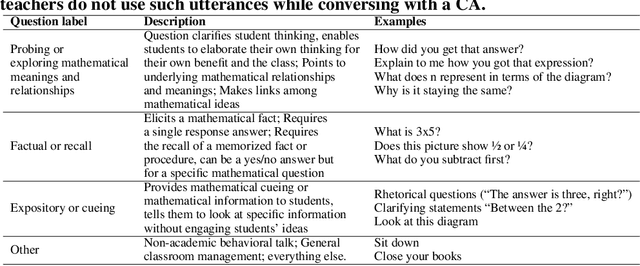

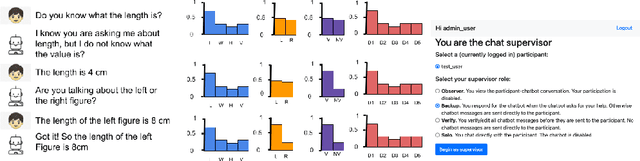

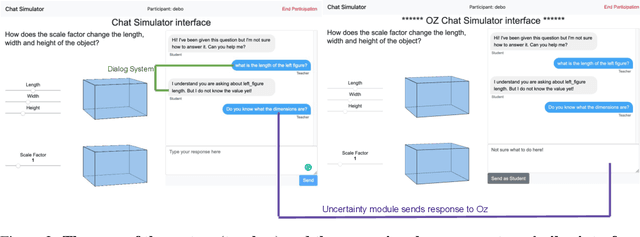

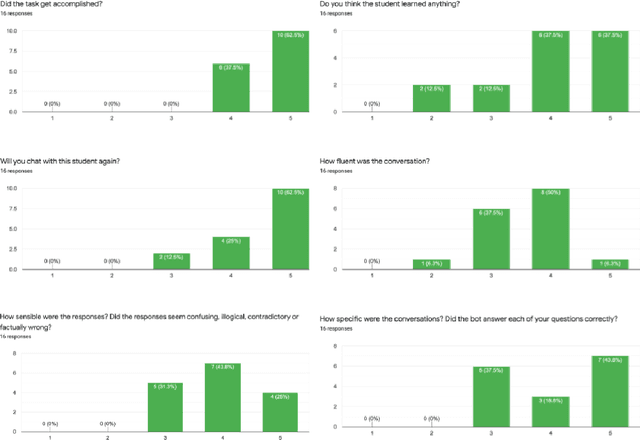

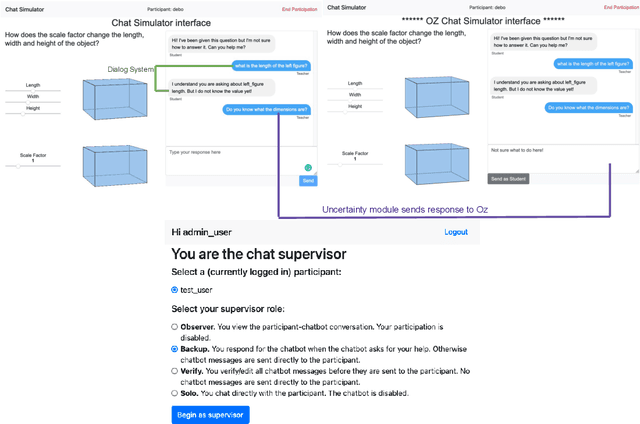

Abstract:High-fidelity, AI-based simulated classroom systems enable teachers to rehearse effective teaching strategies. However, dialogue-oriented open-ended conversations such as teaching a student about scale factors can be difficult to model. This paper builds a text-based interactive conversational agent to help teachers practice mathematical questioning skills based on the well-known Instructional Quality Assessment. We take a human-centered approach to designing our system, relying on advances in deep learning, uncertainty quantification, and natural language processing while acknowledging the limitations of conversational agents for specific pedagogical needs. Using experts' input directly during the simulation, we demonstrate how conversation success rate and high user satisfaction can be achieved.

Evaluation of mathematical questioning strategies using data collected through weak supervision

Dec 02, 2021

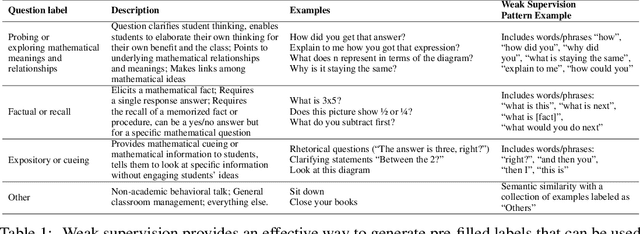

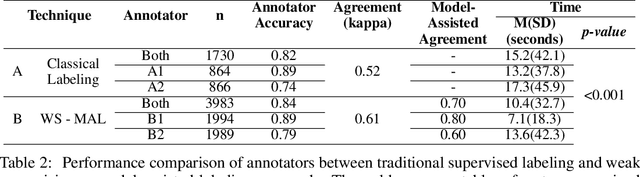

Abstract:A large body of research demonstrates how teachers' questioning strategies can improve student learning outcomes. However, developing new scenarios is challenging because of the lack of training data for a specific scenario and the costs associated with labeling. This paper presents a high-fidelity, AI-based classroom simulator to help teachers rehearse research-based mathematical questioning skills. Using a human-in-the-loop approach, we collected a high-quality training dataset for a mathematical questioning scenario. Using recent advances in uncertainty quantification, we evaluated our conversational agent for usability and analyzed the practicality of incorporating a human-in-the-loop approach for data collection and system evaluation for a mathematical questioning scenario.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge