Djones Lettnin

Hey AI, Generate Me a Hardware Code! Agentic AI-based Hardware Design & Verification

Jul 03, 2025Abstract:Modern Integrated Circuits (ICs) are becoming increasingly complex, and so is their development process. Hardware design verification entails a methodical and disciplined approach to the planning, development, execution, and sign-off of functionally correct hardware designs. This tedious process requires significant effort and time to ensure a bug-free tape-out. The field of Natural Language Processing has undergone a significant transformation with the advent of Large Language Models (LLMs). These powerful models, often referred to as Generative AI (GenAI), have revolutionized how machines understand and generate human language, enabling unprecedented advancements in a wide array of applications, including hardware design verification. This paper presents an agentic AI-based approach to hardware design verification, which empowers AI agents, in collaboration with Humain-in-the-Loop (HITL) intervention, to engage in a more dynamic, iterative, and self-reflective process, ultimately performing end-to-end hardware design and verification. This methodology is evaluated on five open-source designs, achieving over 95% coverage with reduced verification time while demonstrating superior performance, adaptability, and configurability.

Saarthi: The First AI Formal Verification Engineer

Feb 23, 2025

Abstract:Recently, Devin has made a significant buzz in the Artificial Intelligence (AI) community as the world's first fully autonomous AI software engineer, capable of independently developing software code. Devin uses the concept of agentic workflow in Generative AI (GenAI), which empowers AI agents to engage in a more dynamic, iterative, and self-reflective process. In this paper, we present a similar fully autonomous AI formal verification engineer, Saarthi, capable of verifying a given RTL design end-to-end using an agentic workflow. With Saarthi, verification engineers can focus on more complex problems, and verification teams can strive for more ambitious goals. The domain-agnostic implementation of Saarthi makes it scalable for use across various domains such as RTL design, UVM-based verification, and others.

Verifying Non-friendly Formal Verification Designs: Can We Start Earlier?

Oct 24, 2024

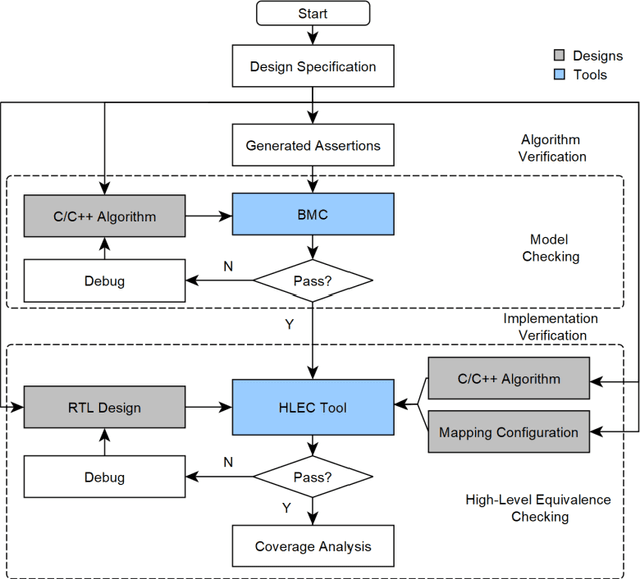

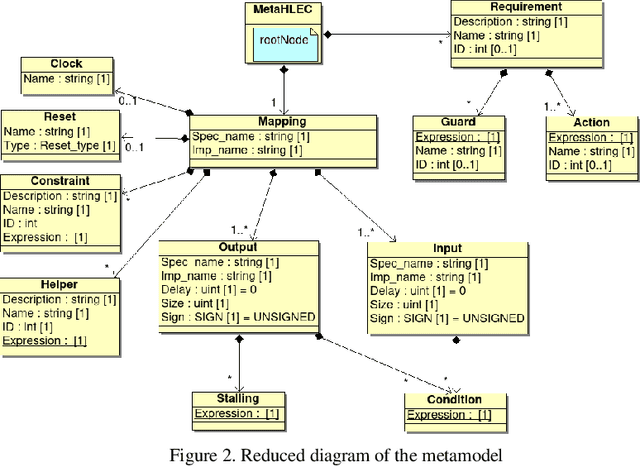

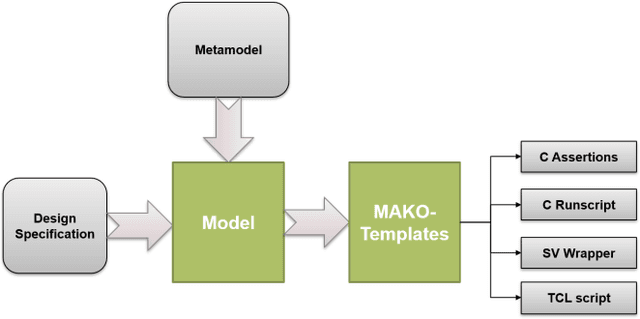

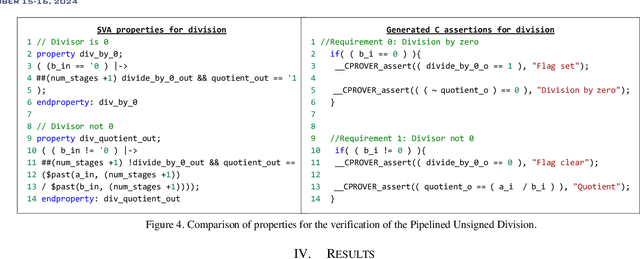

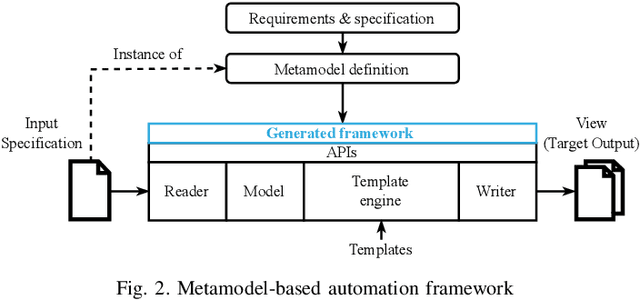

Abstract:The design of Systems on Chips (SoCs) is becoming more and more complex due to technological advancements. Missed bugs can cause drastic failures in safety-critical environments leading to the endangerment of lives. To overcome these drastic failures, formal property verification (FPV) has been applied in the industry. However, there exist multiple hardware designs where the results of FPV are not conclusive even for long runtimes of model-checking tools. For this reason, the use of High-level Equivalence Checking (HLEC) tools has been proposed in the last few years. However, the procedure for how to use it inside an industrial toolchain has not been defined. For this reason, we proposed an automated methodology based on metamodeling techniques which consist of two main steps. First, an untimed algorithmic description written in C++ is verified in an early stage using generated assertions; the advantage of this step is that the assertions at the software level run in seconds and we can start our analysis with conclusive results about our algorithm before starting to write the RTL (Register Transfer Level) design. Second, this algorithmic description is verified against its sequential design using HLEC and the respective metamodel parameters. The results show that the presented methodology can find bugs early related to the algorithmic description and prepare the setup for the HLEC verification. This helps to reduce the verification efforts to set up the tool and write the properties manually which is always error-prone. The proposed framework can help teams working on datapaths to verify and make decisions in an early stage of the verification flow.

FuzzWiz -- Fuzzing Framework for Efficient Hardware Coverage

Oct 23, 2024

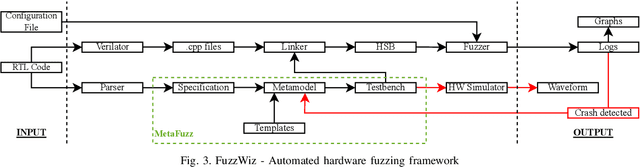

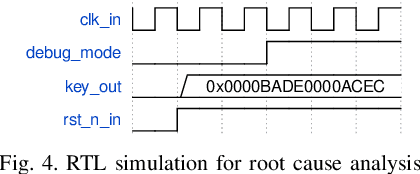

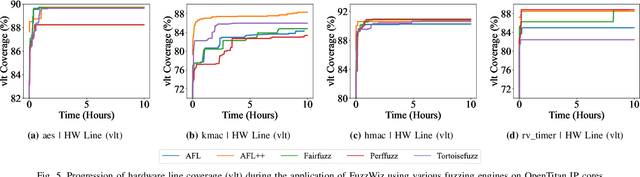

Abstract:Ever-increasing design complexity of System-on-Chips (SoCs) led to significant verification challenges. Unlike software, bugs in hardware design are vigorous and eternal i.e., once the hardware is fabricated, it cannot be repaired with any patch. Despite being one of the powerful techniques used in verification, the dynamic random approach cannot give confidence to complex Register Transfer Leve (RTL) designs during the pre-silicon design phase. In particular, achieving coverage targets and exposing bugs is a complicated task with random simulations. In this paper, we leverage an existing testing solution available in the software world known as fuzzing and apply it to hardware verification in order to achieve coverage targets in quick time. We created an automated hardware fuzzing framework FuzzWiz using metamodeling and Python to achieve coverage goals faster. It includes parsing the RTL design module, converting it into C/C++ models, creating generic testbench with assertions, fuzzer-specific compilation, linking, and fuzzing. Furthermore, it is configurable and provides the debug flow if any crash is detected during the fuzzing process. The proposed framework is applied on four IP blocks from Google's OpenTitan chip with various fuzzing engines to show its scalability and compatibility. Our benchmarking results show that we could achieve around 90% of the coverage 10 times faster than traditional simulation regression based approach.

Efficient Stimuli Generation using Reinforcement Learning in Design Verification

May 30, 2024Abstract:The increasing design complexity of System-on-Chips (SoCs) has led to significant verification challenges, particularly in meeting coverage targets within a timely manner. At present, coverage closure is heavily dependent on constrained random and coverage driven verification methodologies where the randomized stimuli are bounded to verify certain scenarios and to reach coverage goals. This process is said to be exhaustive and to consume a lot of project time. In this paper, a novel methodology is proposed to generate efficient stimuli with the help of Reinforcement Learning (RL) to reach the maximum code coverage of the Design Under Verification (DUV). Additionally, an automated framework is created using metamodeling to generate a SystemVerilog testbench and an RL environment for any given design. The proposed approach is applied to various designs and the produced results proves that the RL agent provides effective stimuli to achieve code coverage faster in comparison with baseline random simulations. Furthermore, various RL agents and reward schemes are analyzed in our work.

Improving Simulation Regression Efficiency using a Machine Learning-based Method in Design Verification

May 24, 2024Abstract:The verification throughput is becoming a major challenge bottleneck, since the complexity and size of SoC designs are still ever increasing. Simply adding more CPU cores and running more tests in parallel will not scale anymore. This paper discusses various methods of improving verification throughput: ranking and the new machine learning (ML) based technology introduced by Cadence i.e. Xcelium ML. Both methods aim at getting comparable coverage in less CPU time by applying more efficient stimulus. Ranking selects specific seeds that simply turned out to come up with the largest coverage in previous simulations, while Xcelium ML generates optimized patterns as a result of finding correlations between randomization points and achieved coverage of previous regressions. Quantified results as well as pros & cons of each approach are discussed in this paper at the example of three actual industry projects. Both Xcelium ML and Ranking methods gave comparable compression & speedup factors around 3 consistently. But the optimized ML based regressions simulated new random scenarios occasionally producing a coverage regain of more than 100%. Finally, a methodology is proposed to use Xcelium ML efficiently throughout the product development.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge