Deokjun Eom

Variational Neural Temporal Point Process

Feb 17, 2022

Abstract: A temporal point process is a stochastic process that models which type of event is likely to happen and when it will occur, given a history of events. Many everyday phenomena exhibit such occurrence dynamics, and it is important to learn these temporal dynamics and solve two distinct prediction problems: time prediction and type prediction. In particular, deep neural network based models have outperformed statistical models such as Hawkes processes and Poisson processes. However, many existing approaches overfit to specific events instead of learning and predicting various event types. As a result, such approaches cannot cope with changed relationships between events and fail to predict the intensity functions of temporal point processes well. In this paper, to solve these problems, we propose a variational neural temporal point process (VNTPP). We introduce inference and generative networks, and train the distribution of a latent variable to handle the stochastic properties of the process within a deep neural network. The intensity functions are computed from the distribution of the latent variable, so that we can predict event types and event arrival times more accurately. We empirically demonstrate that our model can generalize the representations of various event types. Moreover, we show quantitatively and qualitatively that our model outperforms other deep neural network based models and statistical processes on synthetic and real-world datasets.
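For readers unfamiliar with the statistical baselines named in the abstract, the following is a minimal sketch of the conditional intensity of a univariate Hawkes process with an exponential kernel, lambda(t) = mu + sum over past events of alpha * exp(-beta * (t - t_i)). It is not the VNTPP model and is not taken from the paper; the function name `hawkes_intensity` and the parameter values for `mu`, `alpha`, and `beta` are illustrative assumptions.

```python
import math


def hawkes_intensity(t, history, mu=0.2, alpha=0.8, beta=1.0):
    """Conditional intensity of a univariate Hawkes process (exponential kernel).

    mu, alpha, beta are illustrative defaults, not values from the paper.
    """
    # Each event strictly before t adds an excitation that decays exponentially.
    excitation = sum(alpha * math.exp(-beta * (t - ti)) for ti in history if ti < t)
    return mu + excitation


if __name__ == "__main__":
    events = [1.0, 2.5, 3.0]  # hypothetical event arrival times
    for t in (0.5, 3.1, 10.0):
        print(t, hawkes_intensity(t, events))
```

Before the first event the intensity equals the base rate `mu`; it jumps after each event and decays back toward `mu`, which is the self-exciting behavior that neural point process models aim to learn from data rather than fix in a parametric form.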

Improved Predictive Deep Temporal Neural Networks with Trend Filtering

Oct 16, 2020

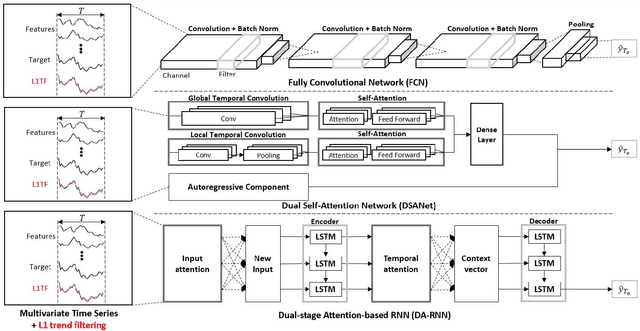

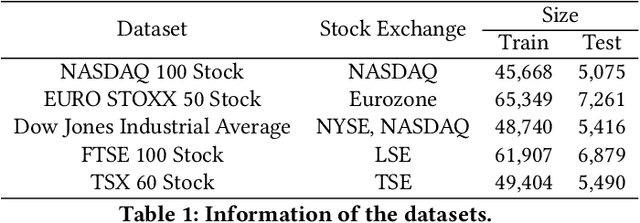

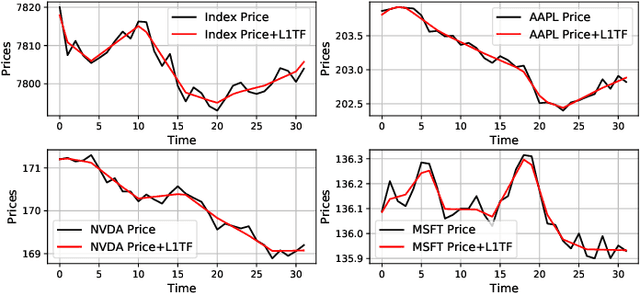

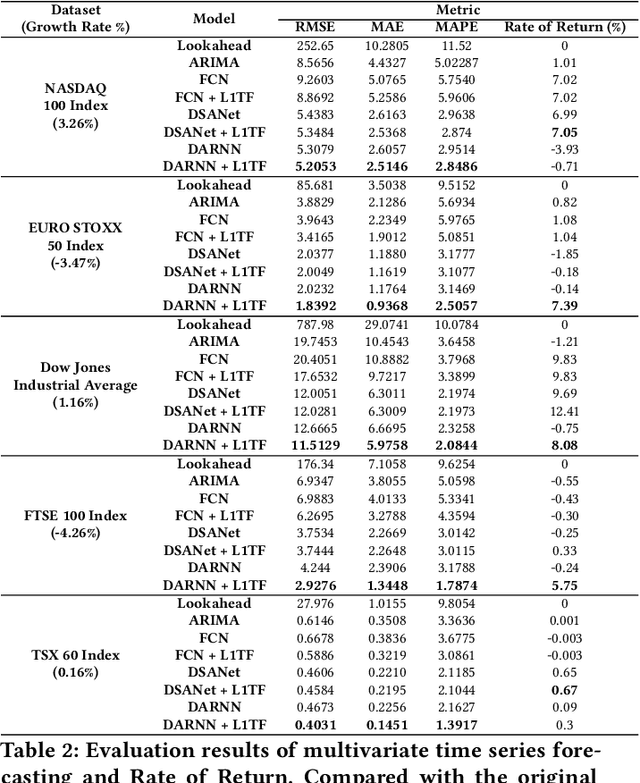

Abstract: Forecasting with multivariate time series, which aims to predict future values from the past and current values of several univariate time series, has been studied for decades, with ARIMA as one example. Because it is difficult to measure the extent to which noise is mixed with informative signals in rapidly fluctuating financial time series data, designing a good predictive model is not a simple task. Recently, many researchers have become interested in recurrent neural networks and attention-based neural networks and have applied them to financial forecasting. There have been many attempts to use these methods to capture long-term temporal dependencies and to select the more important features in multivariate time series data in order to make accurate predictions. In this paper, we propose a new prediction framework based on deep neural networks and trend filtering, which converts noisy time series data into a piecewise linear form. We show that the predictive performance of deep temporal neural networks improves when the training data is temporally processed by trend filtering. To verify the effect of our framework, three deep temporal neural networks, state-of-the-art models for prediction on financial time series data, are used and compared with models that include trend filtering as an input feature. Extensive experiments on real-world multivariate time series data show that the proposed method is effective and significantly better than existing baseline methods.

* 8 pages, 4 figures, 3 tables, ICAIF 2020: ACM International Conference on AI in Finance
