David E. Tafler

Explaining with Attribute-based and Relational Near Misses: An Interpretable Approach to Distinguishing Facial Expressions of Pain and Disgust

Aug 27, 2023

Abstract: Explaining concepts by contrasting examples is an efficient and convenient way of giving insight into the reasons behind a classification decision. This is of particular interest in decision-critical domains, such as medical diagnostics. One particularly challenging use case is distinguishing facial expressions of pain from those of other states, such as disgust, because they manifest so similarly. In this paper, we present an approach for generating contrastive explanations of facial expressions of pain and disgust shown in video sequences. We implement and compare two approaches for contrastive explanation generation. The first approach explains a specific pain instance in contrast to the most similar disgust instance(s) based on the occurrence of facial expressions (attributes). The second approach takes into account which temporal relations hold between intervals of facial expressions within a sequence (relations). The input to our explanation generation approach is the output of an interpretable rule-based classifier for pain and disgust. We use two different similarity metrics to determine near misses and far misses as contrasting instances. Our results show that near miss explanations are shorter than far miss explanations, independent of the applied similarity metric. The outcome of our evaluation indicates that pain and disgust can be distinguished with the help of temporal relations. We are currently planning experiments to evaluate how the explanations help in teaching concepts and how they could be enhanced by further modalities and interaction.

Explanation as a process: user-centric construction of multi-level and multi-modal explanations

Oct 07, 2021

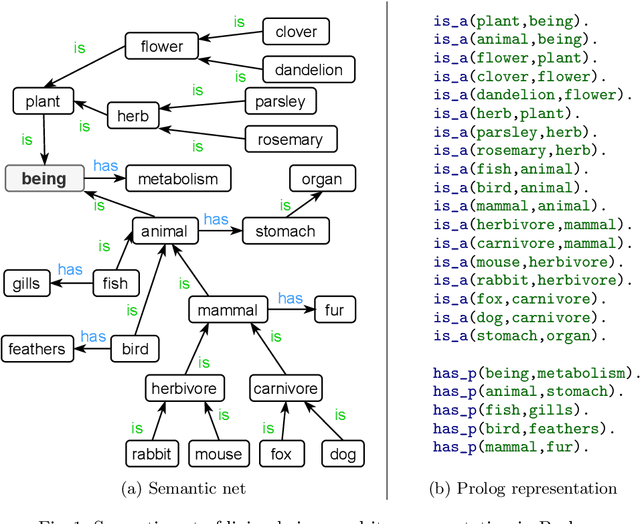

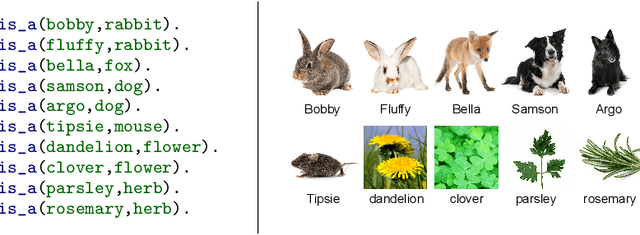

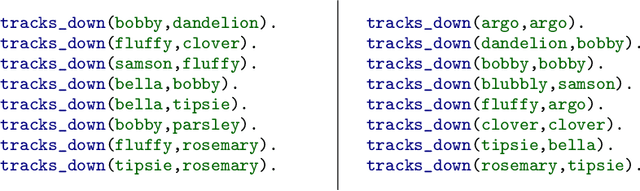

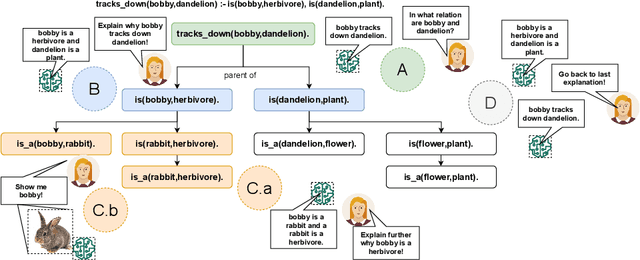

Abstract: In recent years, XAI research has mainly been concerned with developing new technical approaches to explain deep learning models. Only recently has research started to acknowledge the need to tailor explanations to the different contexts and requirements of stakeholders. Explanations must suit not only developers of models, but also domain experts and end users. Thus, in order to satisfy different stakeholders, explanation methods need to be combined. While multi-modal explanations have been used to make model predictions more transparent, less research has focused on treating explanation as a process in which users can ask for information according to the level of understanding they have gained at a certain point in time. Consequently, users should be given the opportunity to explore explanations on different levels of abstraction, in addition to multi-modal explanations. We present a process-based approach that combines multi-level and multi-modal explanations. The user can ask for textual explanations or visualizations through conversational interaction in a drill-down manner. We use Inductive Logic Programming, an interpretable machine learning approach, to learn a comprehensible model. Further, we present an algorithm that creates an explanatory tree for each example for which a classifier decision is to be explained. The user can navigate the explanatory tree to get answers at different levels of detail. We provide a proof-of-concept implementation for concepts induced from a semantic net about living beings.
