David Cohn

Provable Approximations for Constrained $\ell_p$ Regression

Feb 27, 2019

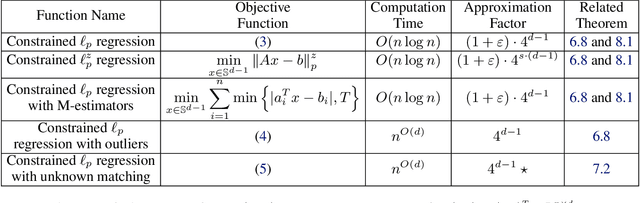

Abstract: The $\ell_p$ linear regression problem is to minimize $f(x)=||Ax-b||_p$ over $x\in\mathbb{R}^d$, where $A\in\mathbb{R}^{n\times d}$, $b\in \mathbb{R}^n$, and $p>0$. To avoid overfitting and bound $||x||_2$, the constrained $\ell_p$ regression problem minimizes $f(x)$ over every unit vector $x\in\mathbb{R}^d$. This makes the problem non-convex even for the simplest case $d=p=2$. Instead, ridge regression is used to minimize the Lagrange form $f(x)+\lambda ||x||_2$ over $x\in\mathbb{R}^d$, which yields a convex problem at the price of calibrating the regularization parameter $\lambda>0$. We provide the first provable constant-factor approximation algorithm that solves the constrained $\ell_p$ regression problem directly, for every constant $p,d\geq 1$. Using core-sets, its running time is $O(n \log n)$, including extensions for streaming and distributed (big) data. In polynomial time, it can also handle outliers and $p\in (0,1)$, and can minimize $f(x)$ over every $x$ and every permutation of the rows of $A$. Experimental results are also provided, including open-source code and a comparison to existing software.
