David Aparicio

Automated test generation to evaluate tool-augmented LLMs as conversational AI agents

Sep 24, 2024Abstract:Tool-augmented LLMs are a promising approach to create AI agents that can have realistic conversations, follow procedures, and call appropriate functions. However, evaluating them is challenging due to the diversity of possible conversations, and existing datasets focus only on single interactions and function-calling. We present a test generation pipeline to evaluate LLMs as conversational AI agents. Our framework uses LLMs to generate diverse tests grounded on user-defined procedures. For that, we use intermediate graphs to limit the LLM test generator's tendency to hallucinate content that is not grounded on input procedures, and enforces high coverage of the possible conversations. Additionally, we put forward ALMITA, a manually curated dataset for evaluating AI agents in customer support, and use it to evaluate existing LLMs. Our results show that while tool-augmented LLMs perform well in single interactions, they often struggle to handle complete conversations. While our focus is on customer support, our method is general and capable of AI agents for different domains.

Intent Detection at Scale: Tuning a Generic Model using Relevant Intents

Sep 15, 2023

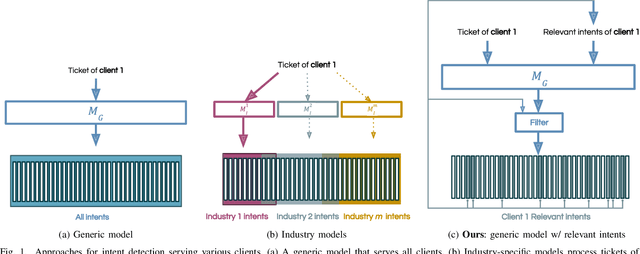

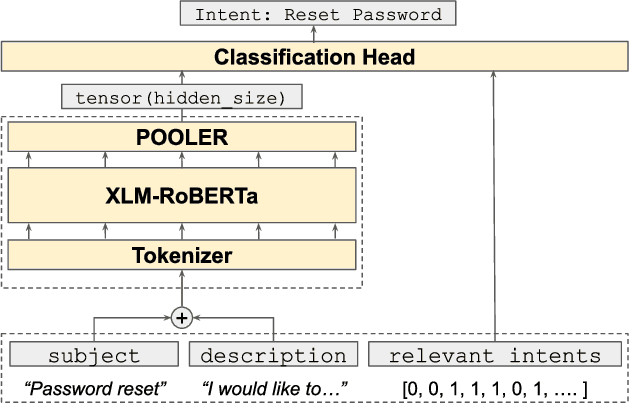

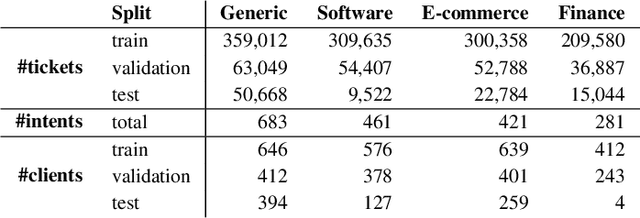

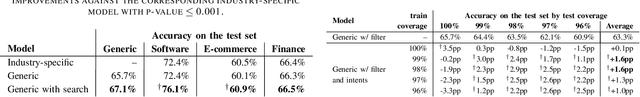

Abstract:Accurately predicting the intent of customer support requests is vital for efficient support systems, enabling agents to quickly understand messages and prioritize responses accordingly. While different approaches exist for intent detection, maintaining separate client-specific or industry-specific models can be costly and impractical as the client base expands. This work proposes a system to scale intent predictions to various clients effectively, by combining a single generic model with a per-client list of relevant intents. Our approach minimizes training and maintenance costs while providing a personalized experience for clients, allowing for seamless adaptation to changes in their relevant intents. Furthermore, we propose a strategy for using the clients relevant intents as model features that proves to be resilient to changes in the relevant intents of clients -- a common occurrence in production environments. The final system exhibits significantly superior performance compared to industry-specific models, showcasing its flexibility and ability to cater to diverse client needs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge