Daniel R. Harper

Representations and Strategies for Transferable Machine Learning Models in Chemical Discovery

Jun 20, 2021

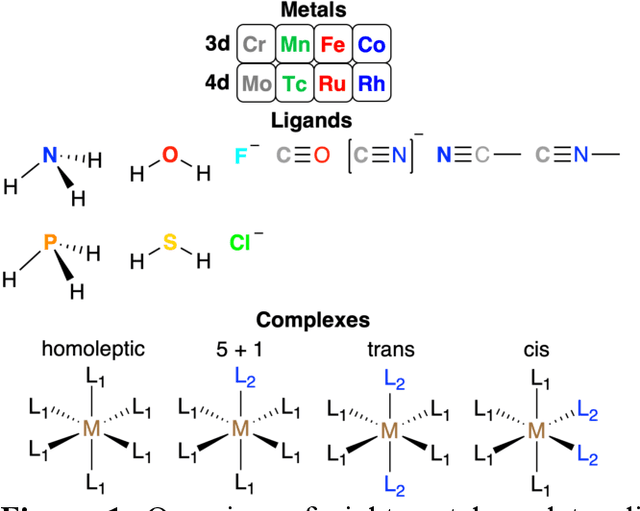

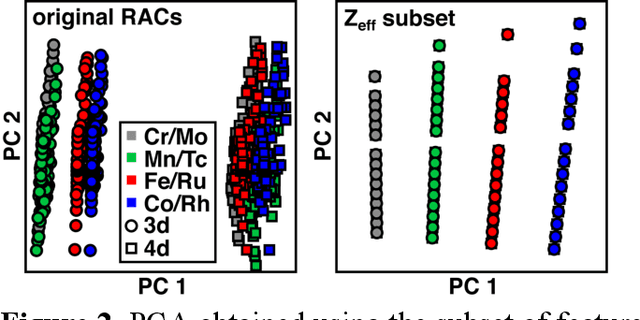

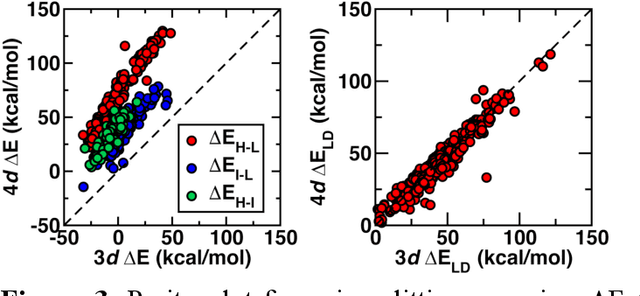

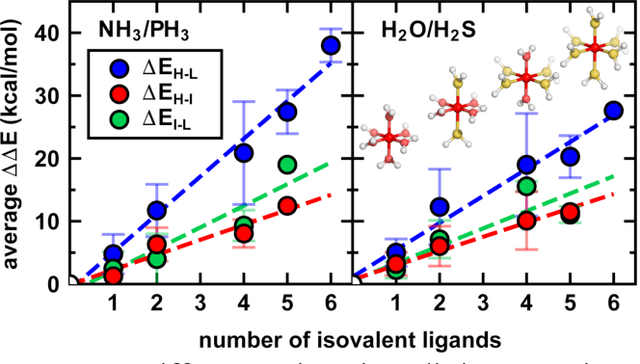

Abstract: Strategies for machine learning (ML)-accelerated discovery that are general across materials composition spaces are essential, but demonstrations of ML have been primarily limited to narrow composition variations. By addressing the scarcity of data in promising regions of chemical space for challenging targets like open-shell transition-metal complexes, general representations and transferable ML models that leverage known relationships in existing data will accelerate discovery. Over a large set (ca. 1000) of isovalent transition-metal complexes, we quantify evident relationships for different properties (i.e., spin-splitting and ligand dissociation) between rows of the periodic table (i.e., 3d/4d metals and 2p/3p ligands). We demonstrate an extension to the graph-based revised autocorrelation (RAC) representation (i.e., eRAC) that incorporates the effective nuclear charge alongside the nuclear charge heuristic, which on its own overestimates the dissimilarity of isovalent complexes. To address the common challenge of discovery in a new space where data are limited, we introduce a transfer learning approach in which we seed models trained on a large amount of data from one row of the periodic table with a small number of data points from the additional row. We demonstrate the synergistic value of the eRACs alongside this transfer learning strategy to consistently improve model performance. Analysis of these models highlights how the approach succeeds by reordering the distances between complexes to be more consistent with the periodic table, a property we expect to be broadly useful for other materials domains.
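To make the idea of a graph-based autocorrelation descriptor concrete, the following is a minimal sketch, not the authors' implementation. It assumes the standard product-form autocorrelation, in which an atomic property is multiplied over all ordered atom pairs separated by exactly `depth` bonds, and it computes that quantity for two properties side by side (nuclear charge and effective nuclear charge), which is the pairing the abstract describes for eRACs. The function names, the toy three-atom graph, and the effective nuclear charge numbers are hypothetical placeholders chosen only for illustration.

```python
from collections import deque


def graph_distances(adjacency, start):
    """Breadth-first search: bond-path distance from `start` to each reachable atom."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nbr in adjacency[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def autocorrelation(adjacency, prop, depth):
    """Sum prop[i] * prop[j] over ordered atom pairs (i, j) exactly `depth` bonds apart."""
    total = 0.0
    for i in adjacency:
        for j, d in graph_distances(adjacency, i).items():
            if d == depth:
                total += prop[i] * prop[j]
    return total


def descriptor(adjacency, properties, max_depth):
    """Concatenate autocorrelations for every property and every depth 0..max_depth."""
    return [
        autocorrelation(adjacency, prop, depth)
        for prop in properties
        for depth in range(max_depth + 1)
    ]


# Toy graph (assumption): atom 0 is a metal center bonded to two ligand atoms.
toy_graph = {0: [1, 2], 1: [0], 2: [0]}
nuclear_charge = {0: 26, 1: 7, 2: 7}  # Fe and two N atoms
# Placeholder numbers only; NOT real effective nuclear charges.
effective_charge = {0: 1.0, 1: 2.0, 2: 2.0}

features = descriptor(toy_graph, [nuclear_charge, effective_charge], max_depth=2)
print(features)
```

In this sketch, adding a second property simply appends more entries to the feature vector, so two isovalent complexes whose nuclear charges differ greatly can still have similar entries in the effective-nuclear-charge block.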
