Daniel N. Kaslovsky

Non-Asymptotic Analysis of Tangent Space Perturbation

Dec 06, 2013

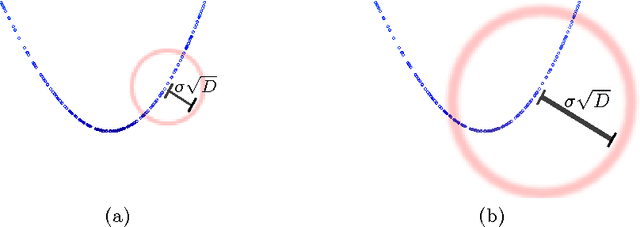

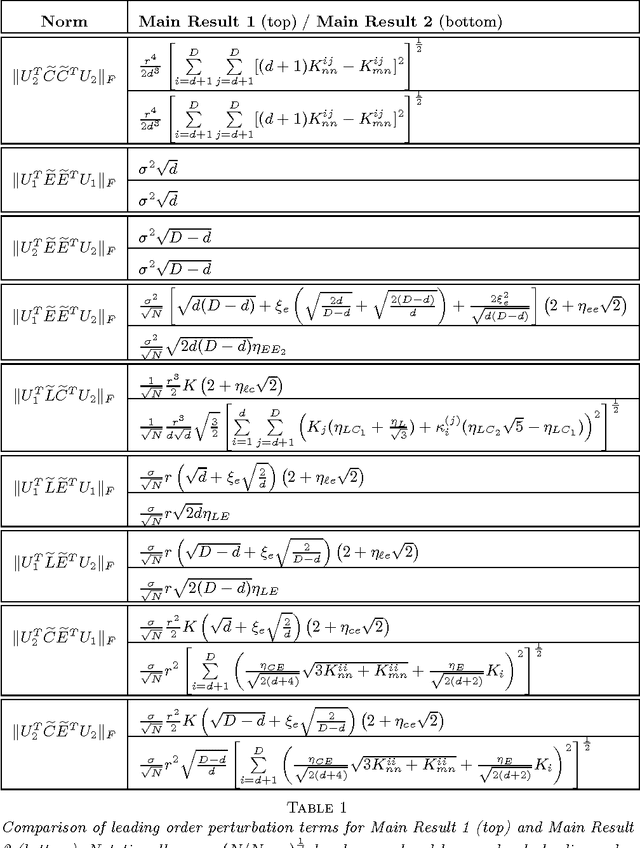

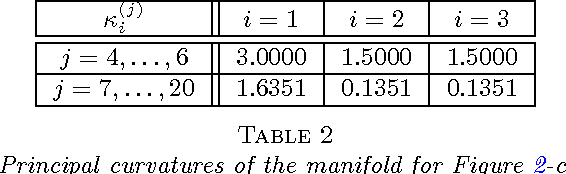

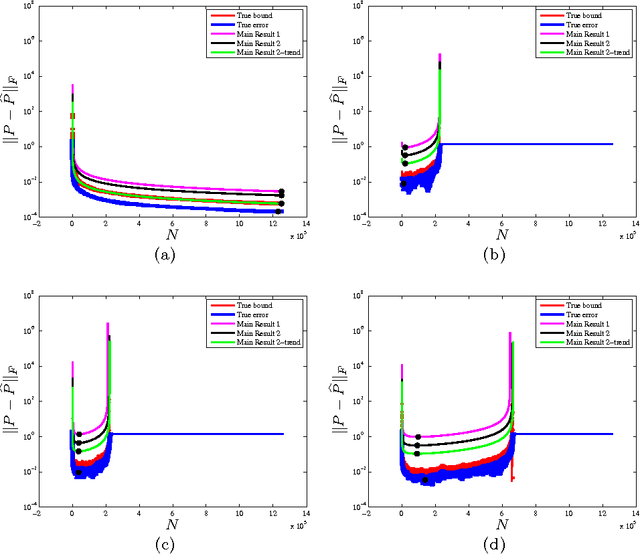

Abstract: Constructing an efficient parameterization of a large, noisy data set of points lying close to a smooth manifold in high dimension remains a fundamental problem. One approach consists in recovering a local parameterization using the local tangent plane. Principal component analysis (PCA) is often the tool of choice, as it returns an optimal basis in the case of noise-free samples from a linear subspace. To process noisy data samples from a nonlinear manifold, PCA must be applied locally, at a scale small enough such that the manifold is approximately linear, but at a scale large enough such that structure may be discerned from noise. Using eigenspace perturbation theory and non-asymptotic random matrix theory, we study the stability of the subspace estimated by PCA as a function of scale, and bound (with high probability) the angle it forms with the true tangent space. By adaptively selecting the scale that minimizes this bound, our analysis reveals an appropriate scale for local tangent plane recovery. We also introduce a geometric uncertainty principle quantifying the limits of noise-curvature perturbation for stable recovery. With the purpose of providing perturbation bounds that can be used in practice, we propose plug-in estimates that make it possible to directly apply the theoretical results to real data sets.
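The scale trade-off described in the abstract can be explored numerically. The following is a minimal sketch, not the paper's method: it measures the angle between a local PCA subspace and a known tangent plane on synthetic data, whereas the paper bounds that angle and selects the scale from the bound. The paraboloid test surface, the centering at the neighborhood mean, and all function names and parameter values (`sample_noisy_paraboloid`, `local_pca_basis`, `largest_principal_angle`, `sweep_scales`) are assumptions made for illustration.

```python
import numpy as np


def sample_noisy_paraboloid(n, D, curvature, sigma, rng):
    """Sample n points near the 2-D paraboloid x3 = (curvature/2)(x1^2 + x2^2) in R^D.

    Hypothetical test surface: its tangent plane at the origin is span(e1, e2).
    Isotropic Gaussian noise with standard deviation sigma is added in all D coordinates.
    """
    uv = rng.uniform(-1.0, 1.0, size=(n, 2))
    X = np.zeros((n, D))
    X[:, :2] = uv
    X[:, 2] = 0.5 * curvature * (uv ** 2).sum(axis=1)
    return X + sigma * rng.standard_normal((n, D))


def local_pca_basis(X, center, radius, d):
    """Return a D x d orthonormal basis from PCA on the points within `radius` of `center`.

    Returns None when the neighborhood has too few points to estimate d directions.
    Assumption: the neighborhood is centered at its own mean before the SVD.
    """
    nbrs = X[np.linalg.norm(X - center, axis=1) <= radius]
    if nbrs.shape[0] <= d:
        return None
    centered = nbrs - nbrs.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return vt[:d].T


def largest_principal_angle(U, V):
    """Largest principal angle (radians) between the column spans of orthonormal U and V."""
    s = np.linalg.svd(U.T @ V, compute_uv=False)
    return float(np.arccos(np.clip(s.min(), -1.0, 1.0)))


def sweep_scales(X, center, radii, d, true_basis):
    """Angle between the local PCA subspace and `true_basis` at each radius (NaN if undefined)."""
    angles = []
    for r in radii:
        basis = local_pca_basis(X, center, r, d)
        angles.append(np.nan if basis is None else largest_principal_angle(basis, true_basis))
    return np.array(angles)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    D, d = 5, 2
    X = sample_noisy_paraboloid(n=5000, D=D, curvature=1.0, sigma=0.02, rng=rng)
    true_basis = np.eye(D)[:, :d]          # tangent plane at the origin
    radii = np.linspace(0.1, 1.0, 10)
    angles = sweep_scales(X, np.zeros(D), radii, d, true_basis)
    for r, a in zip(radii, angles):
        print(f"radius {r:.2f}: angle {a:.4f} rad")
```

On real data the true tangent plane is unknown, so the measured angle above is not available; this is the gap that the bound and the plug-in estimates mentioned in the abstract are meant to fill.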
