D. T. Braithwaite

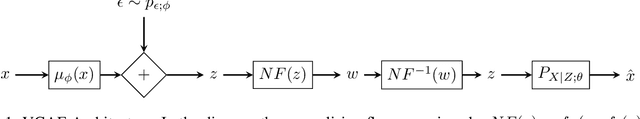

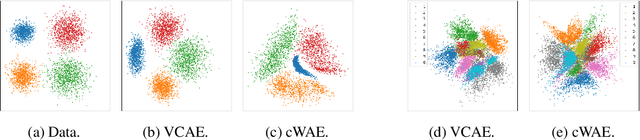

Variance Constrained Autoencoding

May 08, 2020

Abstract: Recent state-of-the-art autoencoder-based generative models have an encoder-decoder structure and learn a latent representation with a pre-defined distribution that can be sampled from. Implementing the encoder networks of these models in a stochastic manner provides a natural and common approach to avoid overfitting and enforce a smooth decoder function. However, we show that for stochastic encoders, simultaneously attempting to enforce a distribution constraint and minimising an output distortion leads to a reduction in generative and reconstruction quality. In addition, attempting to enforce a latent distribution constraint is not reasonable when performing disentanglement. Hence, we propose the variance-constrained autoencoder (VCAE), which only enforces a variance constraint on the latent distribution. Our experiments show that VCAE improves upon the Wasserstein Autoencoder and the Variational Autoencoder in both reconstruction and generative quality on MNIST and CelebA. Moreover, we show that VCAE equipped with a total correlation penalty term performs equivalently to FactorVAE at learning disentangled representations on 3D-Shapes while being a more principled approach.
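To make the idea of a variance-only constraint concrete, here is a minimal sketch of one way such a term could look. It is not the paper's loss, whose exact form the abstract does not give. It assumes the constraint is expressed as a squared penalty on the gap between the total variance of a batch of latent codes and a target value. The function name `variance_penalty` and the default target of one unit of variance per latent dimension are hypothetical choices made for illustration.

```python
from statistics import pvariance


def variance_penalty(latents, target_total_variance=None):
    """Squared gap between a batch's total latent variance and a target.

    `latents` is a list of latent vectors (one per sample). This is an
    illustrative assumption, not the loss used in the VCAE paper.
    """
    # Transpose so that each tuple holds one latent dimension across the batch.
    dims = list(zip(*latents))
    if target_total_variance is None:
        # Assumed default: one unit of variance per latent dimension.
        target_total_variance = float(len(dims))
    # Population variance per dimension, summed over all dimensions.
    total_variance = sum(pvariance(d) for d in dims)
    return (total_variance - target_total_variance) ** 2
```

Unlike a full distribution-matching term, a penalty of this kind only looks at second-order statistics of the batch, so it leaves the shape of the latent distribution otherwise unconstrained.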

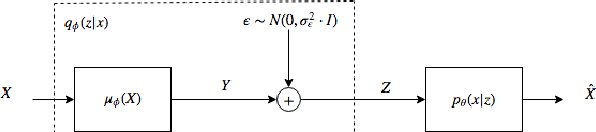

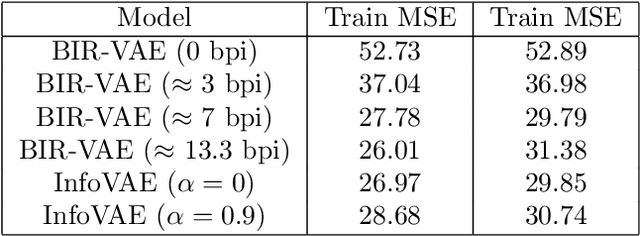

Bounded Information Rate Variational Autoencoders

Jul 25, 2018

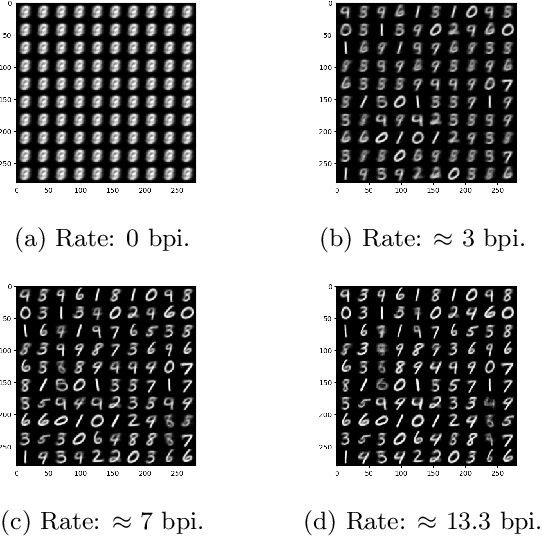

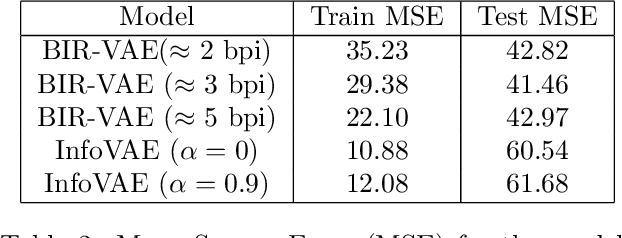

Abstract: This paper introduces a new member of the family of Variational Autoencoders (VAE) that constrains the rate of information transferred by the latent layer. The latent layer is interpreted as a communication channel, the information rate of which is bounded by imposing a pre-set signal-to-noise ratio. The new constraint subsumes the mutual information between the input and latent variables, combining naturally with the likelihood objective of the observed data as used in a conventional VAE. The resulting Bounded-Information-Rate Variational Autoencoder (BIR-VAE) provides a meaningful latent representation with an information resolution that can be specified directly in bits by the system designer. The rate constraint can be used to prevent overtraining, and the method naturally facilitates quantisation of the latent variables at the set rate. Our experiments confirm that the BIR-VAE has a meaningful latent representation and that its performance is at least as good as state-of-the-art competing algorithms, but with lower computational complexity.
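The link between a pre-set signal-to-noise ratio and a rate in bits can be illustrated with the standard capacity of an additive Gaussian noise channel, $I = \tfrac{d}{2}\log_2(1 + \mathrm{SNR})$ for $d$ independent dimensions. The sketch below assumes, for illustration only, that each latent dimension has unit total variance (signal plus noise), so that $1 + \mathrm{SNR} = 1/\sigma^2$ where $\sigma^2$ is the noise variance. The function names are hypothetical and the abstract does not state that the paper uses exactly this parameterisation.

```python
import math


def noise_variance_for_rate(total_bits, latent_dim):
    """Noise variance per dimension that yields the requested rate.

    Assumes unit total variance per latent dimension and additive
    Gaussian noise, so rate = -(latent_dim / 2) * log2(noise_variance).
    """
    bits_per_dim = total_bits / latent_dim
    return 2.0 ** (-2.0 * bits_per_dim)


def rate_in_bits(noise_variance, latent_dim):
    """Inverse mapping: the rate in bits implied by a given noise variance."""
    return -0.5 * latent_dim * math.log2(noise_variance)


# Example: a 2-dimensional latent layer limited to 2 bits in total
# needs a noise variance of 0.25 per dimension under these assumptions.
sigma2 = noise_variance_for_rate(total_bits=2, latent_dim=2)
```

Under these assumptions, halving the noise standard deviation adds one bit per latent dimension, which is one way to read the claim that the resolution can be specified directly in bits.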
