Corey M. Hudson

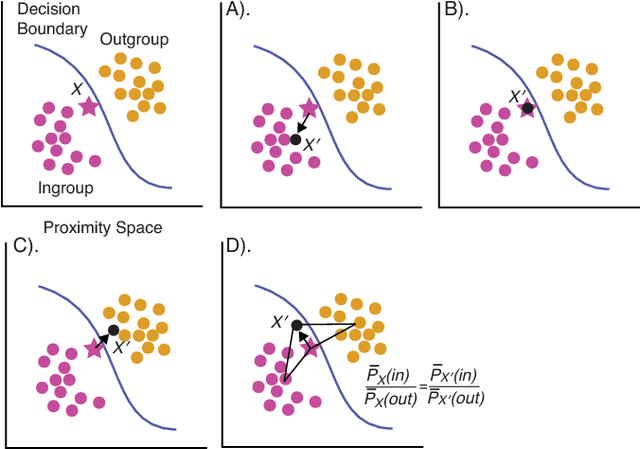

Explicating feature contribution using Random Forest proximity distances

Jul 17, 2018

Abstract: In Random Forests, proximity distances are a metric representation of data into decision space. By observing how changes in input map to the movement of instances in this space we are able to determine the independent contribution of each feature to the decision-making process. For binary feature vectors, this process is fully specified. As these changes in input move particular instances nearer to the in-group or out-group, the independent contribution of each feature can be uncovered. Using this technique, we are able to calculate the contribution of each feature in determining how black-box decisions were made. This allows explication of the decision-making process, audit of the classifier, and post-hoc analysis of errors in classification.
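The idea of flipping binary features and watching an instance move in proximity space can be sketched as follows. This is a minimal illustration, not the paper's procedure: it assumes the common definition of Random Forest proximity (the fraction of trees in which two instances land in the same leaf), and the names `proximity`, `mean_proximity`, `flip_contributions`, `TOY_TREES`, and `toy_leaf_fn` are hypothetical. The toy "forest" is a hand-written stand-in for a trained model; with scikit-learn, leaf indices could instead come from `RandomForestClassifier.apply`.

```python
from typing import Callable, List, Sequence

Vector = Sequence[int]
LeafFn = Callable[[Vector], List[int]]


def proximity(leaves_a: Sequence[int], leaves_b: Sequence[int]) -> float:
    """Fraction of trees in which two instances fall into the same leaf."""
    if len(leaves_a) != len(leaves_b):
        raise ValueError("leaf vectors must cover the same trees")
    shared = sum(1 for a, b in zip(leaves_a, leaves_b) if a == b)
    return shared / len(leaves_a)


def mean_proximity(x: Vector, group: Sequence[Vector], leaf_fn: LeafFn) -> float:
    """Average proximity of x to every member of a reference group."""
    leaves_x = leaf_fn(x)
    return sum(proximity(leaves_x, leaf_fn(g)) for g in group) / len(group)


def flip_contributions(x: Vector, in_group: Sequence[Vector],
                       leaf_fn: LeafFn) -> List[float]:
    """Change in mean in-group proximity when each binary feature is flipped.

    A positive value means flipping that feature moves x toward the in-group.
    """
    base = mean_proximity(x, in_group, leaf_fn)
    deltas = []
    for j in range(len(x)):
        flipped = list(x)
        flipped[j] = 1 - flipped[j]  # toggle one binary feature
        deltas.append(mean_proximity(flipped, in_group, leaf_fn) - base)
    return deltas


# Hypothetical toy forest: each "tree" is the tuple of feature indices it
# splits on, and its leaf id encodes the values of those features.
TOY_TREES = [(0,), (0, 1), (1,), (2,)]


def toy_leaf_fn(x: Vector) -> List[int]:
    """Assumed stand-in for a trained forest's leaf assignment."""
    return [sum(x[f] << k for k, f in enumerate(feats)) for feats in TOY_TREES]


if __name__ == "__main__":
    instance = [0, 1, 0]
    in_group = [[1, 1, 0], [1, 1, 1]]
    print(flip_contributions(instance, in_group, toy_leaf_fn))
```

In this toy setup, flipping a feature that most trees split on shifts the instance's leaf assignments the most, so it produces the largest change in proximity to the in-group.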

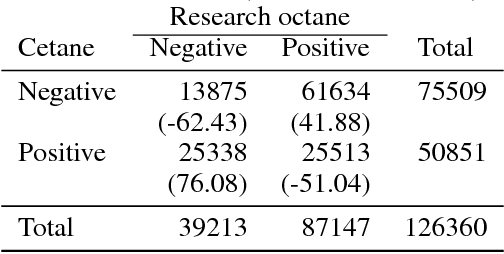

Probability Series Expansion Classifier that is Interpretable by Design

Oct 27, 2017

Abstract: This work presents a new classifier that is specifically designed to be fully interpretable. This technique determines the probability of a class outcome, based directly on probability assignments measured from the training data. The accuracy of the predicted probability can be improved by measuring more probability estimates from the training data to create a series expansion that refines the predicted probability. We use this work to classify four standard datasets and achieve accuracies comparable to those of Random Forests. Because this technique is interpretable by design, it is capable of determining the combinations of features that contribute to a particular classification probability for individual cases, as well as the weighting of each combination of features.

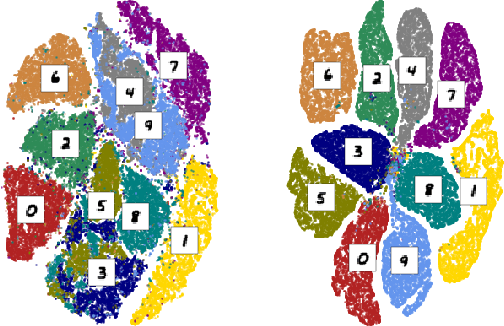

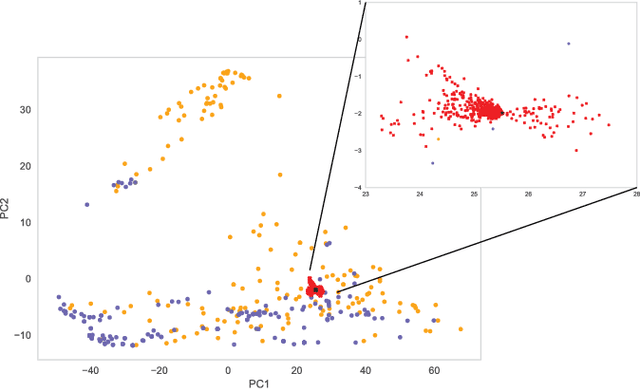

Mapping chemical performance on molecular structures using locally interpretable explanations

Nov 22, 2016

Abstract: In this work, we present an application of Locally Interpretable Machine-Agnostic Explanations to 2-D chemical structures. Using this framework we are able to provide a structural interpretation for an existing black-box model for classifying biologically produced fuel compounds with regard to Research Octane Number. This method of "painting" locally interpretable explanations onto 2-D chemical structures replicates the chemical intuition of synthetic chemists, allowing researchers in the field to directly accept, reject, inform and evaluate decisions underlying inscrutably complex quantitative structure-activity relationship models.
