Cham Tat Jen

A Diffusion Process on Riemannian Manifold for Visual Tracking

Mar 24, 2013

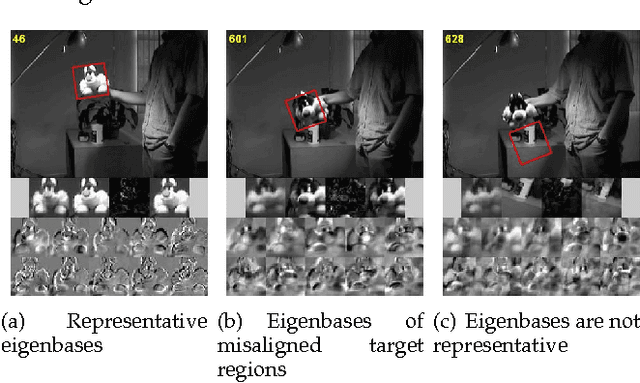

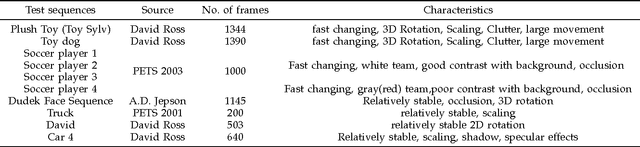

Abstract: Robust visual tracking for long video sequences is a research area with many important applications. The main challenges are how to model the target image and how to update that model. In this paper, we model the target using a covariance descriptor, as this descriptor is robust to problems that commonly occur in visual tracking, such as pixel-to-pixel misalignment and changes in pose and illumination. We model the changes in the template using a generative process. We introduce a new dynamical model for the template update using a random walk on the Riemannian manifold on which the covariance descriptors lie. This is done in the log-transformed space of the manifold, which frees the model from the constraints inherently imposed by positive semidefinite matrices. Modeling template variations and pose kinematics together in the state space enables us to jointly quantify the uncertainties relating to the kinematic states and the template in a principled way. Finally, the sequential inference of the posterior distribution of the kinematic states and the template is done using a particle filter. Our results show that this principled approach can be robust to changes in illumination and pose and to spatial affine transformations. In the experiments, our method outperformed the current state-of-the-art algorithm, the incremental Principal Component Analysis method, particularly when a target underwent fast pose changes, and it maintained comparable performance in stable target tracking cases.
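The template dynamics described in the abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the function names, the isotropic Gaussian noise, the `sigma` parameter, and the small ridge term that keeps the matrix strictly positive definite (so its logarithm exists) are all assumptions made for illustration. The sketch builds a covariance descriptor from per-pixel feature vectors, maps it to the space of symmetric matrices with the matrix logarithm, adds symmetric noise there, and maps back with the matrix exponential, so that the result is again a valid covariance matrix.

```python
import numpy as np


def covariance_descriptor(features, ridge=1e-6):
    """Covariance of per-pixel feature vectors.

    features: array of shape (n_pixels, d), one feature vector per pixel.
    ridge: assumed small diagonal term so the result is strictly positive definite.
    """
    cov = np.cov(features, rowvar=False)
    return cov + ridge * np.eye(cov.shape[0])


def spd_log(c):
    """Matrix logarithm of a symmetric positive definite matrix."""
    w, v = np.linalg.eigh(c)
    return (v * np.log(w)) @ v.T


def sym_exp(s):
    """Matrix exponential of a symmetric matrix (always positive definite)."""
    w, v = np.linalg.eigh(s)
    return (v * np.exp(w)) @ v.T


def random_walk_step(c, sigma, rng):
    """One random-walk step taken in the log-transformed space.

    The noise model (symmetrized i.i.d. Gaussian entries) is an assumption.
    """
    d = c.shape[0]
    noise = rng.normal(scale=sigma, size=(d, d))
    noise = (noise + noise.T) / 2.0  # keep the perturbation symmetric
    return sym_exp(spd_log(c) + noise)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.normal(size=(200, 5))  # stand-in for real image features
    template = covariance_descriptor(feats)
    proposal = random_walk_step(template, sigma=0.05, rng=rng)
    print(np.linalg.eigvalsh(proposal).min() > 0)
```

In a particle filter, each particle could carry such a proposal together with its kinematic state, so that the template and the motion are weighted jointly. That wiring is omitted here because the abstract does not specify it.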
