Carlos Theran

Health Disparities through Generative AI Models: A Comparison Study Using A Domain Specific large language model

Oct 23, 2023

Abstract: Health disparities are differences in health outcomes and access to healthcare between different groups, including racial and ethnic minorities, low-income people, and rural residents. Large language models (LLMs), a class of artificial intelligence (AI) programs, can understand and generate human language, which could improve health communication and help reduce health disparities. Using LLMs in human-doctor interaction poses many challenges, including the need for diverse and representative data, privacy concerns, and collaboration between healthcare providers and technology experts. We present a comparative investigation of a domain-specific large language model, SciBERT, and the multi-purpose LLM BERT. We used cosine similarity to analyze text queries about health disparities in exam rooms when factors such as race are used alone. On these text queries, SciBERT fails to differentiate between the query text "race" alone and "perpetuates health disparities." We believe clinicians can use generative AI to create a draft response when communicating asynchronously with patients. However, careful attention must be paid to ensure that such models are developed and implemented ethically and equitably.
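As a rough illustration of the cosine-similarity comparison the abstract mentions, the sketch below computes the measure on small made-up vectors. The vectors `query_a`, `query_b`, and `query_c` are hypothetical stand-ins, not embeddings produced by SciBERT or BERT, and the abstract does not describe how the authors derived query embeddings.

```python
import math


def cosine_similarity(u, v):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical 3-dimensional "embeddings" (assumption for illustration only;
# real BERT-style embeddings have hundreds of dimensions).
query_a = [0.2, 0.7, 0.1]
query_b = [0.4, 1.4, 0.2]   # same direction as query_a, different length
query_c = [0.7, -0.2, 0.0]  # orthogonal to query_a

sim_ab = cosine_similarity(query_a, query_b)  # close to 1: same direction
sim_ac = cosine_similarity(query_a, query_c)  # close to 0: unrelated direction
```

A model that maps two differently worded queries to nearly parallel vectors gives a similarity near 1, which is the sense in which a model can fail to differentiate between them.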

Time Series Analysis of Blockchain-Based Cryptocurrency Price Changes

Feb 19, 2022

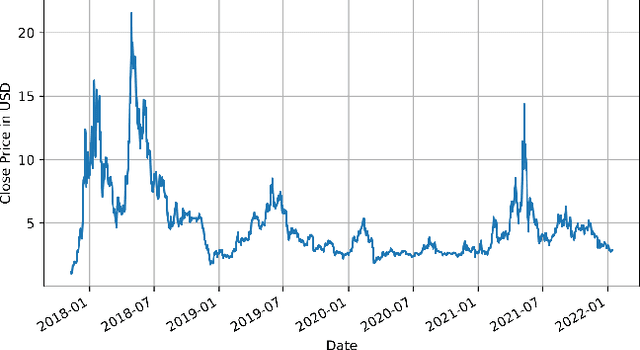

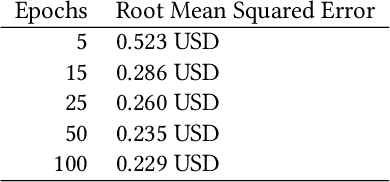

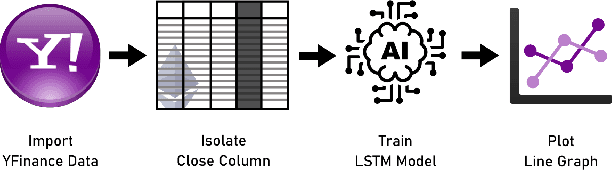

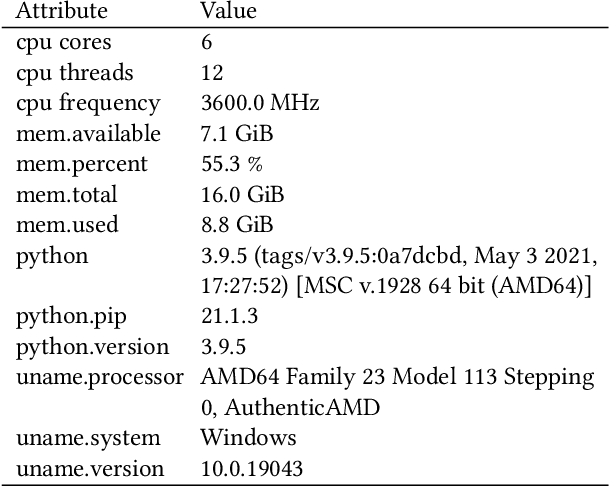

Abstract: In this paper, we apply neural networks and artificial intelligence (AI) to historical records of high-risk cryptocurrency coins to train a model that predicts their price. This paper's code contains Jupyter notebooks, one of which outputs a time-series graph of any cryptocurrency's price once a CSV file of the historical data is supplied to the program. Another Jupyter notebook trains a long short-term memory (LSTM) model to predict a cryptocurrency's closing price. The LSTM is fed the close price, which is the price that the currency has at the end of the day, so it can learn from those values. The notebook creates two sets, a training set and a test set, to assess the accuracy of the results. The data is then normalized using manual min-max scaling so that differences in scale do not bias the model; this also enhances the performance of the model. Then, the model is trained using three layers (an LSTM layer, a dropout layer, and a dense layer), minimizing the loss through 50 epochs of training; from this training, a recurrent neural network (RNN) is produced and fitted to the training set. Additionally, a graph of the loss over each epoch is produced, with the loss decreasing over time. Finally, the notebook plots a line graph of the actual currency price in red and the predicted price in blue. The process is then repeated for several more cryptocurrencies to compare prediction models. The parameters for the LSTM, such as the number of epochs and the batch size, are tweaked to try to minimize the root mean square error.
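The preprocessing and evaluation steps described in this abstract (train/test split, manual min-max scaling, root mean square error) can be sketched in plain Python as follows. This is a minimal sketch under stated assumptions, not the paper's notebook: the `closes` values are made up, the 75/25 chronological split and fitting the scaling bounds on the training set only are choices made here, and a naive "previous day's close" prediction stands in for the LSTM output.

```python
import math


def min_max_scale(values, lo, hi):
    """Map values linearly so that lo -> 0.0 and hi -> 1.0."""
    if hi == lo:
        raise ValueError("cannot scale when hi equals lo")
    return [(v - lo) / (hi - lo) for v in values]


def min_max_unscale(scaled, lo, hi):
    """Invert min_max_scale to recover values in the original units."""
    return [s * (hi - lo) + lo for s in scaled]


def rmse(actual, predicted):
    """Root mean square error between two equal-length sequences."""
    if len(actual) != len(predicted):
        raise ValueError("sequences must have the same length")
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical daily closing prices (assumption for illustration only).
closes = [10.0, 12.0, 11.0, 15.0, 14.0, 18.0, 20.0, 19.0]

# Chronological split: earlier days for training, later days for testing.
split = int(len(closes) * 0.75)
train, test = closes[:split], closes[split:]

# Scaling bounds come from the training set only (an assumption here),
# so scaled test values may fall outside [0, 1].
lo, hi = min(train), max(train)
train_scaled = min_max_scale(train, lo, hi)
test_scaled = min_max_scale(test, lo, hi)

# Stand-in for the model: predict each test day as the previous day's close.
predicted = [closes[i - 1] for i in range(split, len(closes))]
error = rmse(test, predicted)
```

In a setup like the one the abstract describes, `predicted` would instead come from the trained network after its scaled outputs are passed through `min_max_unscale`, and `error` is the quantity the epoch and batch-size tuning tries to reduce.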
